Journal Description

Analytics

Analytics is an international, peer-reviewed, open access journal on methodologies, technologies, and applications of analytics, published quarterly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- Rapid Publication: first decisions in 16 days; acceptance to publication in 5.8 days (median values for MDPI journals in the first half of 2023).

- Recognition of Reviewers: APC discount vouchers, optional signed peer review, and reviewer names published annually in the journal.

- Analytics is a companion journal of Mathematics.

Latest Articles

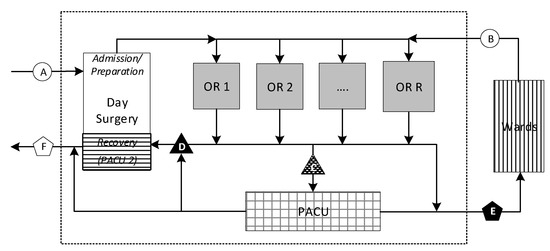

Surgery Scheduling and Perioperative Care: Smoothing and Visualizing Elective Surgery and Recovery Patient Flow

Analytics 2023, 2(3), 656-675; https://doi.org/10.3390/analytics2030036 - 21 Aug 2023

Abstract

This paper addresses the practical problem of scheduling elective surgeries in operating rooms (ORs) to minimize the likelihood of surgical delays caused by a lack of recovery capacity in a central post-anesthesia care unit (PACU). We segregate patients according to their patterns of flow through a multi-stage perioperative system and use surgery type and surgeon booking times to predict the time intervals of patient procedures and subsequent recoveries. Working with a hospital that performs 50+ procedures in 15+ ORs on most weekdays, we develop a constraint programming (CP) model that takes the hospital's elective surgery pre-schedule as input and produces a recommended alternative schedule designed to minimize the expected peak number of patients in the PACU over the course of the day. Our model was developed from the hospital's data and evaluated by applying it to daily schedules during a testing period. Schedules generated by our model indicated the potential to reduce the peak PACU load substantially, by 20–30% on most days in our study period, or alternatively to reduce average patient flow time by up to 15% for the same PACU peak load. We also developed schedule visualization tools that can aid management both before and after the day of surgery: to plan PACU resources; propose critical schedule changes; identify the timing, location, and root causes of delays; and discern differences in surgical specialty case mixes and their potential impacts on the system. This work is especially timely given high surgical wait times in Ontario, which worsened further during the COVID-19 pandemic.
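The quantity this model minimizes, the peak number of patients simultaneously in the PACU, can be illustrated with a sweep over recovery intervals. The sketch below is a minimal illustration, not the authors' CP model: it assumes deterministic, hypothetical `(start, end)` recovery intervals in minutes, whereas the paper works with predicted intervals and an expected peak.

```python
from typing import List, Tuple


def peak_occupancy(intervals: List[Tuple[int, int]]) -> int:
    """Return the maximum number of overlapping [start, end) recovery intervals.

    Hypothetical helper for illustration; intervals are (start, end) in minutes.
    """
    events = []
    for start, end in intervals:
        events.append((start, 1))   # patient enters the PACU
        events.append((end, -1))    # patient leaves the PACU
    # Tuples sort by time, then by delta, so at equal times departures (-1)
    # are processed before arrivals (+1) and back-to-back stays do not overlap.
    events.sort()
    current = peak = 0
    for _, delta in events:
        current += delta
        peak = max(peak, current)
    return peak


# Three recoveries overlap between minutes 60 and 90, so the peak is 3.
print(peak_occupancy([(0, 90), (30, 120), (60, 150), (130, 200)]))
```

Shifting a single surgery so that its recovery no longer overlaps the others lowers the returned value, which gives an intuition for the kind of schedule change the model evaluates.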

Open Access Article

Cyberpsychology: A Longitudinal Analysis of Cyber Adversarial Tactics and Techniques

Analytics 2023, 2(3), 618-655; https://doi.org/10.3390/analytics2030035 - 11 Aug 2023

Abstract

►▼

Show Figures

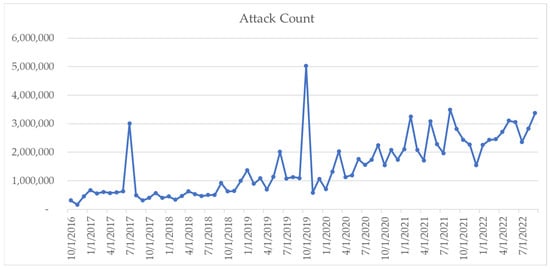

The rapid proliferation of cyberthreats necessitates a robust understanding of their evolution and associated tactics, as found in this study. A longitudinal analysis of these threats was conducted, utilizing a six-year data set obtained from a deception network, which emphasized its significance in

[...] Read more.

The rapid proliferation of cyberthreats necessitates a robust understanding of their evolution and associated tactics. This study conducted a longitudinal analysis of these threats using a six-year data set obtained from a deception network. Its primary aim was an exhaustive exploration of the tactics and strategies used by cybercriminals and of how these tactics and techniques evolved in sophistication and target specificity over time. Individual cyberattack instances were dissected and interpreted, with a focus on unveiling the patterns behind target selection and highlighting recurring techniques and emerging trends. The methodological design incorporated data preprocessing, exploratory data analysis, clustering and anomaly detection, temporal analysis, and cross-referencing. The validation process underscored the reliability and robustness of the findings, which provide evidence of increasingly sophisticated, targeted cyberattacks. The work identified three distinct network traffic behavior clusters and temporal attack patterns. A validated scoring mechanism provided a benchmark for network anomalies that is applicable to predictive analysis and facilitates comparative study of network behaviors. This benchmarking helps organizations proactively identify and respond to potential threats. The study contributes significantly to the cybersecurity discourse, offering insights that could guide the development of more effective defense strategies. It also acknowledges the need for further investigation into the nature of the detected anomalies and advocates continuous research and proactive defense strategies in the face of a constantly evolving cyberthreat landscape.

Open Access Article

Prediction of Stroke Disease with Demographic and Behavioural Data Using Random Forest Algorithm

Analytics 2023, 2(3), 604-617; https://doi.org/10.3390/analytics2030034 - 02 Aug 2023

Abstract

Stroke is a major cause of death worldwide, resulting from a blockage in the flow of blood to different parts of the brain. Many studies have proposed stroke prediction models that apply deep learning (DL) algorithms to medical features in order to reduce its occurrence. However, these studies pay less attention to demographic and behavioural predictors. Our study treats interpretability, robustness, and generalisation as key themes for deploying algorithms in the medical domain. On this basis, we propose the use of random forest (RF) for stroke incidence prediction. In our experiments, RF outperformed decision tree (DT) and logistic regression (LR), achieving a macro F1 score of 94%. Our findings indicate that age and body mass index (BMI) are the most significant predictors of stroke incidence.
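The reported 94% is a macro F1 score: the unweighted mean of the per-class F1 scores, so a rare class (stroke cases are typically the minority) weighs as much as the majority class. A minimal pure-Python sketch of that metric follows; it is intended to behave like scikit-learn's `f1_score(y_true, y_pred, average="macro")` for the labels present, and the example labels are invented for illustration.

```python
from typing import Hashable, Sequence


def macro_f1(y_true: Sequence[Hashable], y_pred: Sequence[Hashable]) -> float:
    """Unweighted mean of per-class F1 over every class seen in y_true or y_pred."""
    classes = set(y_true) | set(y_pred)
    scores = []
    for c in classes:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        # F1 is the harmonic mean of precision and recall (0 if both are 0).
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        scores.append(f1)
    return sum(scores) / len(scores)


# Hypothetical labels: 1 = stroke, 0 = no stroke.
print(macro_f1([0, 0, 0, 0, 1, 1], [0, 0, 0, 1, 1, 0]))  # 0.625
```

Here class 0 scores F1 = 0.75 and class 1 scores F1 = 0.5; the macro average does not let the larger class dominate.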

Open Access Article

Identification of Patterns in the Stock Market through Unsupervised Algorithms

Analytics 2023, 2(3), 592-603; https://doi.org/10.3390/analytics2030033 - 27 Jul 2023

Abstract

Making predictions in the stock market is a challenging task. Several studies have focused on forecasting the future behavior of the market and classifying financial assets. A different approach is to group correlated data to discover patterns and atypical behaviors in them. In this study, we apply unsupervised algorithms to process, model, and cluster related data from two data sources, Google News and Yahoo Finance, to identify stock market conditions that might support investment decision-making. We applied principal component analysis (PCA) and k-means clustering to group the data according to their principal characteristics. We identified four stock market conditions; the one comprising the least data is characterized by high volatility. The main results show that the stock market usually performs steadily, whereas atypical conditions are conducive to higher volatility.
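The clustering step pairs PCA with k-means. The sketch below shows only Lloyd's k-means iteration in pure Python, assuming the inputs are already-reduced numeric feature vectors (for example, hypothetical PCA scores per trading day); it is not the authors' pipeline, and the PCA step is omitted.

```python
import random
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def _sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points: List[Point], k: int, iters: int = 100, seed: int = 0):
    """Lloyd's algorithm; returns (centroids, labels)."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]  # start from k data points
    labels = [0] * len(points)
    for _ in range(iters):
        # Assignment step: each point joins its nearest centroid.
        new_labels = [min(range(k), key=lambda j: _sq_dist(p, centroids[j])) for p in points]
        # Update step: move each centroid to the mean of its members.
        for j in range(k):
            members = [p for p, lab in zip(points, new_labels) if lab == j]
            if members:  # keep the previous centroid if a cluster empties
                centroids[j] = [sum(col) / len(members) for col in zip(*members)]
        if new_labels == labels:
            break  # assignments stopped changing
        labels = new_labels
    return centroids, labels
```

In practice one would standardize features and run several random initializations, since Lloyd's algorithm only reaches a local optimum.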

Open Access Article

Streamflow Estimation through Coupling of Hierarchical Clustering Analysis and Regression Analysis: A Case Study in Euphrates-Tigris Basin

Analytics 2023, 2(3), 577-591; https://doi.org/10.3390/analytics2030032 - 13 Jul 2023

Abstract

This study investigated the resilience of designed water systems in the face of limited streamflow gauging stations and escalating global warming impacts. A regression analysis correlated simulated meteorological data with streamflow observed from 1971 to 2020 at 33 stream gauging stations in the Euphrates-Tigris Basin. Using the ordinary least squares (OLS) regression method, streamflow for 2020–2100 was also predicted from simulated meteorological data under the RCP 4.5 and RCP 8.5 scenarios in the CORDEX-EURO and CORDEX-MENA domains. Streamflow variability was calculated from meteorological variables and station morphological characteristics, particularly evapotranspiration. Hierarchical clustering analysis identified two clusters among the stream gauging stations, and two streamflow equations were derived for each cluster. The regression analysis achieved robust streamflow predictions using six representative climate variables, with adjusted R² values of 0.7–0.85 across all models, primarily influenced by evapotranspiration. Using a global model reduced prediction performance, measured by R², by 10% for all CORDEX models. This study emphasizes the importance of regional homogeneity, in both geographical and hydro-meteorological characteristics, when estimating streamflow.
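The models are scored with adjusted R², which penalizes R² for the number of predictors p (excluding the intercept): adjusted R² = 1 - (1 - R²)(n - 1)/(n - p - 1). The sketch below fits a single-predictor OLS line in closed form and computes both quantities. It is a simplification under stated assumptions: the study uses six climate variables and cluster-specific equations, and the numbers here are invented.

```python
from typing import Sequence, Tuple


def ols_fit(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Closed-form ordinary least squares for y = a + b*x; returns (a, b)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    b = sxy / sxx
    return my - b * mx, b


def r_squared(y: Sequence[float], y_hat: Sequence[float]) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    my = sum(y) / len(y)
    ss_res = sum((yi - fi) ** 2 for yi, fi in zip(y, y_hat))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return 1 - ss_res / ss_tot


def adjusted_r_squared(r2: float, n: int, p: int) -> float:
    """Penalize R^2 for p predictors fitted on n observations."""
    return 1 - (1 - r2) * (n - 1) / (n - p - 1)


# Hypothetical: R^2 = 0.80 from 50 observations and 6 predictors.
print(round(adjusted_r_squared(0.80, 50, 6), 4))  # 0.7721
```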

Open Access Article

Hierarchical Model-Based Deep Reinforcement Learning for Single-Asset Trading

Analytics 2023, 2(3), 560-576; https://doi.org/10.3390/analytics2030031 - 11 Jul 2023

Abstract

We present a hierarchical reinforcement learning (RL) architecture that employs various low-level agents to act in the trading environment, i.e., the market. The highest-level agent selects from among a group of specialized agents, and the selected agent then decides when to buy or sell a single asset for a period of time. This period can vary according to a termination function. We hypothesized that, because of different market regimes, a single agent is not sufficient to learn from such heterogeneous data, and that multiple agents, each specializing in a subset of the data, will perform better. We use k-means clustering to partition the data and train each agent on a different cluster. Partitioning the input data also helps model-based RL (MBRL), where models can be heterogeneous. We also add two simple decision-making models to the set of low-level agents, diversifying the pool of available agents and thus increasing overall behavioral flexibility. We perform multiple experiments showing the strengths of a hierarchical approach and test various prediction models at both levels. We also use a risk-based reward at the high level, which transforms the overall problem into a risk-return optimization. This reward yields a significant reduction in risk with only a minimal reduction in profits. Overall, the hierarchical approach shows significant promise, especially when the pool of low-level agents is highly diverse. Such a system is clearly useful, especially for human-devised strategies, which could be incorporated soundly into larger, more powerful automated systems.

Open Access Article

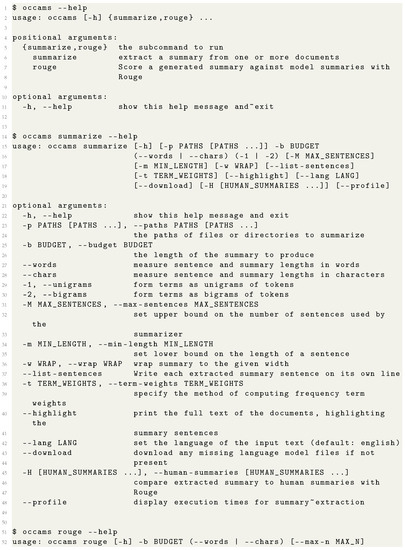

occams: A Text Summarization Package

Analytics 2023, 2(3), 546-559; https://doi.org/10.3390/analytics2030030 - 30 Jun 2023

Abstract

Extractive text summarization selects a small subset of sentences from a document that gives good “coverage” of the document. Given a set of term weights indicating the importance of the terms, the concept of coverage can be formalized as a combinatorial optimization problem known as the budgeted maximum coverage problem. Extractive methods in this class are known to be among the best classic extractive summarization systems. This paper gives a synopsis of the software package occams, a multilingual extractive single- and multi-document summarization package based on an algorithm that gives an optimal approximation to the budgeted maximum coverage problem. The occams package is written in Python and provides an easy-to-use modular interface that allows it to work in conjunction with popular Python NLP packages such as nltk, stanza, or spacy.
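The budgeted maximum coverage formulation can be made concrete with the classic cost-effectiveness greedy heuristic: repeatedly add the sentence with the most newly covered term weight per unit length while the budget allows, then compare the result with the best single affordable sentence. This is a generic sketch with hypothetical term sets, costs, and weights; it is not the algorithm implemented in occams and does not use the package's API.

```python
from typing import Dict, List, Set


def greedy_budgeted_coverage(
    sentences: List[Set[str]],
    costs: List[int],
    weights: Dict[str, float],
    budget: int,
) -> List[int]:
    """Return indices of chosen sentences (costs assumed positive, e.g. word counts)."""

    def coverage(chosen: List[int]) -> float:
        # Each term counts once, no matter how many chosen sentences contain it.
        covered = set().union(*(sentences[i] for i in chosen)) if chosen else set()
        return sum(weights.get(t, 0.0) for t in covered)

    chosen: List[int] = []
    covered: Set[str] = set()
    spent = 0
    remaining = set(range(len(sentences)))
    while remaining:
        # Pick the sentence with the largest newly covered weight per unit cost.
        best = max(
            remaining,
            key=lambda i: sum(weights.get(t, 0.0) for t in sentences[i] - covered) / costs[i],
        )
        remaining.discard(best)
        if spent + costs[best] <= budget:
            chosen.append(best)
            covered |= sentences[best]
            spent += costs[best]
    # Guard against the greedy's bad cases: compare with the best affordable single sentence.
    singles = [i for i in range(len(sentences)) if costs[i] <= budget]
    if singles:
        best_single = max(singles, key=lambda i: coverage([i]))
        if coverage([best_single]) > coverage(chosen):
            return [best_single]
    return chosen
```

Because a term contributes its weight only once, a redundant sentence gains little, which is how coverage discourages repetition in the summary.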

(This article belongs to the Special Issue Selected Papers from the 2022 Summer Conference on Applied Data Science (SCADS))

Open Access Feature Paper Article

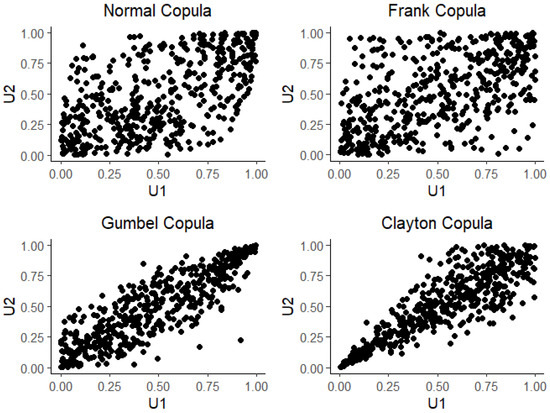

Bayesian Mixture Copula Estimation and Selection with Applications

Analytics 2023, 2(2), 530-545; https://doi.org/10.3390/analytics2020029 - 15 Jun 2023

Abstract

Mixture copulas are popular and essential tools for studying complex dependencies among variables. However, selecting the correct mixture model often involves repeated testing and estimation using criteria such as AIC, which can be time-consuming. In this paper, we propose a method that selects and estimates the correct mixture copula simultaneously. This is accomplished by first overfitting the model and then conducting Bayesian estimation. We verify the correctness of our approach through numerical simulations. Finally, we perform a real data analysis by studying the dependencies among three major financial markets.

Open Access Article

Preliminary Perspectives on Information Passing in the Intelligence Community

Analytics 2023, 2(2), 509-529; https://doi.org/10.3390/analytics2020028 - 15 Jun 2023

Abstract

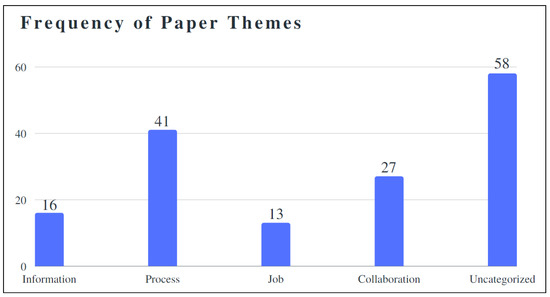

Analyst sensemaking research typically focuses on individual or small groups conducting intelligence tasks. This has helped understand information retrieval tasks and how people communicate information. As a part of the grand challenge of the Summer Conference on Applied Data Science (SCADS) to build

[...] Read more.

Analyst sensemaking research typically focuses on individuals or small groups conducting intelligence tasks. This research has improved understanding of information retrieval tasks and of how people communicate information. As part of the grand challenge of the Summer Conference on Applied Data Science (SCADS), which is to build a system that can generate tailored daily reports (TLDR) for intelligence analysts, we conducted a qualitative interview study with analysts to increase understanding of information passing in the intelligence community. While our results are preliminary, we expect this work to contribute to a better understanding of the information ecosystem of the intelligence community, of how institutional dynamics affect information passing, and of the implications for a TLDR system. This work describes our involvement in SCADS and the work completed during it. Our preliminary findings indicate that information passing is both a formal and an informal process and often follows professional networks, especially because of the small population and specialization of the work. We call attention to the need for future analysis of information ecosystems to better support tailored information retrieval features.

(This article belongs to the Special Issue Selected Papers from the 2022 Summer Conference on Applied Data Science (SCADS))

Open Access Review

Spatiotemporal Data Mining Problems and Methods

Analytics 2023, 2(2), 485-508; https://doi.org/10.3390/analytics2020027 - 14 Jun 2023

Abstract

Many scientific fields show great interest in the extraction and processing of spatiotemporal (ST) data, including medicine (with an emphasis on epidemiology and neurology), geology, the social sciences, meteorology, and transport. Spatiotemporal data differ significantly from spatial data: spatiotemporal data are measurements that take into account both the place and the time at which they were obtained, together with their respective characteristics, whereas spatial data describe information related only to place. Spatiotemporal data mining has revolutionized many scientific fields because it makes it possible to provide solutions and answers to complex problems, as well as useful and valuable predictions through predictive learning. However, combining time and place in data mining presents significant challenges that must be overcome. Spatiotemporal data mining and analysis is a relatively new approach to data mining that has been studied more systematically in the last decade. The purpose of this article is to provide a thorough introduction to spatiotemporal data and, through this detailed description, to introduce descriptive logic and gain a complete knowledge of these data. For future studies, we aim to introduce a new way of describing these data that combines the expressions arising from each data type, using descriptive logic, with newly derived expressions that can describe future states of objects and environments with great precision, providing accurate predictions. To highlight the value of spatiotemporal data, we give a brief description of ST data in the introduction.
We describe the relevant work carried out to date, the types of ST data, their properties, and the transformations that can be made between them, and, to a limited extent, we introduce constraints and rules for each data type using descriptive logic when first presenting the ST data. The data snapshots by species and the similarities between the cases are then described. We describe methods, including clustering, dynamic ST clusters, predictive learning, frequent pattern mining, and pattern emergence, and problems such as anomaly detection, identifying the time points at which the observed object's behavior changes, and the development of relationships between them. We describe current applications of ST data in various fields, as well as future work. We conclude that the representation and study of spatiotemporal data, combined with the other properties that accompany all natural phenomena and processed appropriately, can lead to reliable conclusions about the problems studied and to highly precise predictions that accurately determine the future states of an environment or an object. The importance of ST data thus makes them particularly valuable today in many scientific fields, and their extraction is a demanding challenge for the future.

Open Access Article

A Novel Zero-Truncated Katz Distribution by the Lagrange Expansion of the Second Kind with Associated Inferences

Analytics 2023, 2(2), 463-484; https://doi.org/10.3390/analytics2020026 - 01 Jun 2023

Abstract

►▼

Show Figures

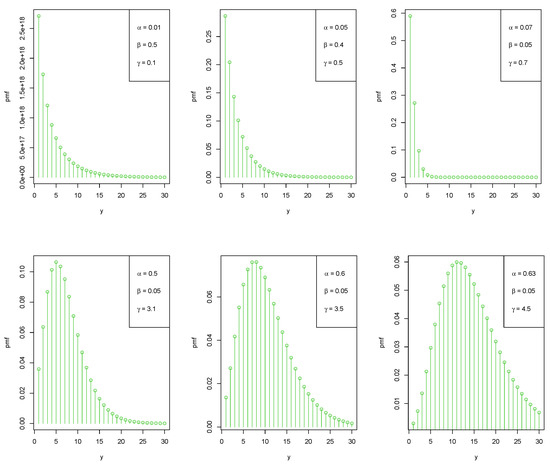

In this article, the Lagrange expansion of the second kind is used to generate a novel zero-truncated Katz distribution; we refer to it as the Lagrangian zero-truncated Katz distribution (LZTKD). Notably, the zero-truncated Katz distribution is a special case of this distribution. Along

[...] Read more.

In this article, the Lagrange expansion of the second kind is used to generate a novel zero-truncated Katz distribution, which we call the Lagrangian zero-truncated Katz distribution (LZTKD). Notably, the zero-truncated Katz distribution is a special case of this distribution. In addition to closed-form expressions for all its statistical characteristics, the LZTKD is shown to provide an adequate model for both underdispersed and overdispersed zero-truncated count datasets. Specifically, we show that the associated hazard rate function can have increasing, decreasing, bathtub, or upside-down bathtub shapes. Moreover, we demonstrate that the LZTKD belongs to the class of Lagrangian distributions of the first kind. Applications of the LZTKD in statistical scenarios are then explored. The unknown parameters are estimated using the well-established method of maximum likelihood. In addition, the generalized likelihood ratio test procedure is applied to test the significance of the additional parameter. Simulation studies are also conducted to evaluate the performance of the maximum likelihood estimates. Real-life datasets further highlight the relevance and applicability of the proposed model.
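The ordinary zero-truncated Katz distribution, a special case of the LZTKD, can serve as a point of reference. The sketch below computes it from the Katz recursion P(k+1)/P(k) = (α + βk)/(k + 1) and then removes the zero class by renormalizing, P(k)/(1 - P(0)) for k ≥ 1. It assumes α > 0 and 0 ≤ β < 1 (so the series converges) and truncates the support at a hypothetical `kmax`; it does not implement the Lagrangian extension.

```python
import math
from typing import List


def zt_katz_pmf(alpha: float, beta: float, kmax: int = 200) -> List[float]:
    """Zero-truncated Katz probabilities p[k] for k = 1..kmax; p[0] is set to 0.

    Sketch assumptions: alpha > 0, 0 <= beta < 1, and negligible mass beyond kmax.
    """
    if alpha <= 0 or not 0 <= beta < 1:
        raise ValueError("sketch assumes alpha > 0 and 0 <= beta < 1")
    q = [1.0]  # unnormalized weights; q[0] stands for P(0)
    for k in range(kmax):
        q.append(q[k] * (alpha + beta * k) / (k + 1))  # Katz recursion
    total = sum(q)
    p = [w / total for w in q]  # approximate Katz pmf on 0..kmax
    zero_mass = p[0]
    return [0.0] + [pk / (1 - zero_mass) for pk in p[1:]]


# With beta = 0 the Katz family reduces to Poisson(alpha), so this is a
# zero-truncated Poisson: p[1] = 2 e^-2 / (1 - e^-2).
p = zt_katz_pmf(2.0, 0.0)
print(round(p[1], 6), round(2 * math.exp(-2) / (1 - math.exp(-2)), 6))
```

With 0 < β < 1 the same recursion gives a zero-truncated negative binomial, which is one reason the Katz family is convenient for over- and underdispersion comparisons.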

Open Access Article

Generalized Unit Half-Logistic Geometric Distribution: Properties and Regression with Applications to Insurance

Analytics 2023, 2(2), 438-462; https://doi.org/10.3390/analytics2020025 - 16 May 2023

Abstract

►▼

Show Figures

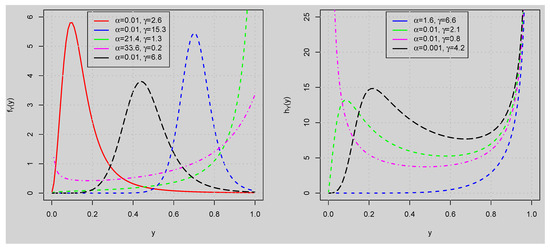

The use of distributions to model and quantify risk is essential in risk assessment and management. In this study, the generalized unit half-logistic geometric (GUHLG) distribution is developed to model bounded insurance data on the unit interval. The corresponding probability density function plots

[...] Read more.

The use of distributions to model and quantify risk is essential in risk assessment and management. In this study, the generalized unit half-logistic geometric (GUHLG) distribution is developed to model bounded insurance data on the unit interval. Plots of the probability density function indicate that the distribution can handle data that exhibit left-skewed, right-skewed, symmetric, reversed-J, and bathtub shapes. The hazard rate function also suggests that the distribution can be applied to data with bathtub-shaped, N-shaped, and increasing failure rates. Subsequently, the inferential aspects of the proposed model are investigated. In particular, Monte Carlo simulations are carried out to examine the performance of the estimation method, using an algorithm that generates random observations from the quantile function. The simulation results suggest that the estimation method is efficient. The univariate application of the distribution and the multivariate application of the associated regression to risk survey data reveal that the model provides a better fit than other existing distributions and regression models. In the multivariate application, we estimate the regression parameters using both maximum likelihood and Bayesian estimation, and the estimates from the two methods are very close. Trace, ergodic, and autocorrelation diagnostic plots for the Bayesian method reveal that the chains converge to a stationary distribution.

Open Access Feature Paper Article

Clustering Matrix Variate Longitudinal Count Data

Analytics 2023, 2(2), 426-437; https://doi.org/10.3390/analytics2020024 - 05 May 2023

Abstract

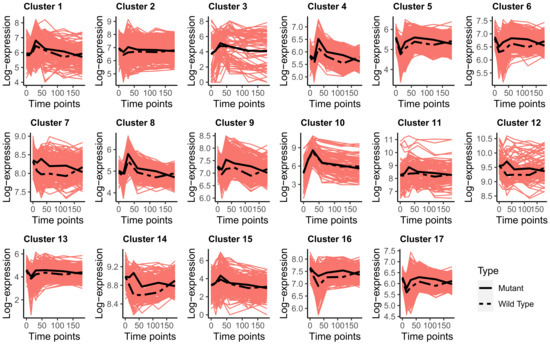

Matrix variate longitudinal discrete data can arise in transcriptomics studies when the data are collected for N genes at r conditions over t time points, and thus, each observation

(This article belongs to the Special Issue Feature Papers in Analytics)

Open Access Article

Wavelet Support Vector Censored Regression

Analytics 2023, 2(2), 410-425; https://doi.org/10.3390/analytics2020023 - 04 May 2023

Abstract

►▼

Show Figures

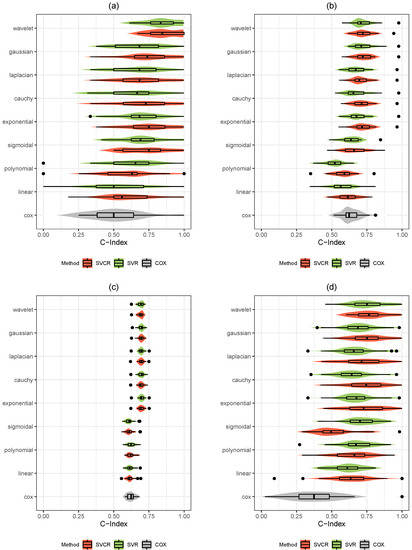

Learning methods in survival analysis have the ability to handle censored observations. The Cox model is a predictive prevalent statistical technique for survival analysis, but its use rests on the strong assumption of hazard proportionality, which can be challenging to verify, particularly when

[...] Read more.

Learning methods for survival analysis can handle censored observations. The Cox model is a prevalent predictive statistical technique for survival analysis, but its use rests on the strong assumption of proportional hazards, which can be challenging to verify, particularly with non-linear and high-dimensional data. It may therefore be necessary to consider a more flexible and generalizable approach, such as support vector machines. This paper proposes a new method, wavelet support vector censored regression, and compares it with the Cox model and with two survival models based on support vector machines: traditional support vector regression and support vector regression for censored data. In addition, to evaluate the effectiveness of different kernel functions in the support vector censored regression approach to survival data, we conducted a series of simulations with varying numbers of observations and ratios of censored data. The simulation results show that wavelet support vector censored regression outperformed the other methods in terms of the C-index. The evaluation was performed on simulations, benchmark survival datasets, and a real biomedical application.
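The methods are compared by the C-index. A minimal sketch of Harrell's concordance index follows, assuming that a higher predicted risk means earlier failure and ignoring ties in observed time; it is a reference illustration with invented data, not the evaluation code used in the paper.

```python
from typing import Sequence


def concordance_index(
    times: Sequence[float], events: Sequence[int], risk: Sequence[float]
) -> float:
    """Harrell's C-index: share of comparable pairs whose risks are correctly ordered.

    A pair (i, j) is comparable when subject i had the event (events[i] == 1)
    and times[i] < times[j]. Tied risk scores count as half-concordant.
    """
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        if not events[i]:
            continue  # a censored subject cannot anchor a comparison
        for j in range(n):
            if times[i] < times[j]:
                comparable += 1
                if risk[i] > risk[j]:
                    concordant += 1.0
                elif risk[i] == risk[j]:
                    concordant += 0.5
    return concordant / comparable


# Subject 3 is censored at t = 6; risks decrease with survival time, so C = 1.0.
print(concordance_index([2, 4, 6, 8], [1, 1, 0, 1], [0.9, 0.7, 0.3, 0.1]))
```

A C-index of 0.5 corresponds to random ordering, which is why it is a natural common yardstick for Cox and support-vector survival models whose raw outputs are on different scales.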

Open Access Feature Paper Article

Building Neural Machine Translation Systems for Multilingual Participatory Spaces

Analytics 2023, 2(2), 393-409; https://doi.org/10.3390/analytics2020022 - 01 May 2023

Abstract

►▼

Show Figures

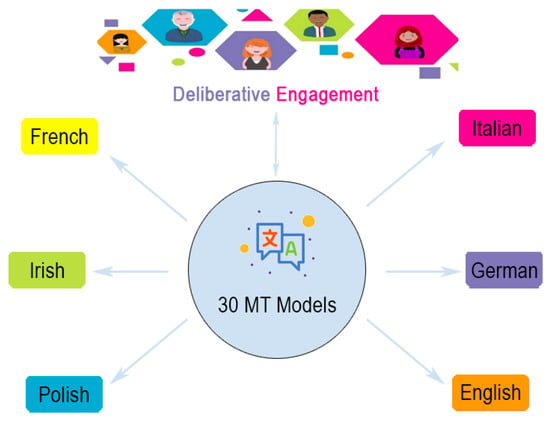

This work presents the development of the translation component in a multistage, multilevel, multimode, multilingual and dynamic deliberative (M4D2) system, built to facilitate automated moderation and translation in the languages of five European countries: Italy, Ireland, Germany, France and Poland. Two main topics

[...] Read more.

This work presents the development of the translation component in a multistage, multilevel, multimode, multilingual and dynamic deliberative (M4D2) system, built to facilitate automated moderation and translation in the languages of five European countries: Italy, Ireland, Germany, France and Poland. Two main topics were to be addressed in the deliberation process: (i) the environment and climate change; and (ii) the economy and inequality. In this work, we describe the development of neural machine translation (NMT) models for these domains for six European languages: Italian, English (included as the second official language of Ireland), Irish, German, French and Polish. In total, we build 30 NMT models: baseline systems trained on freely available online data, which are then adapted to the project's domains of interest by (i) filtering the corpora, (ii) tuning the systems with automatically extracted in-domain development datasets, and (iii) using corpus concatenation techniques to expand the amount of available data. We compare the output of the domain-adapted systems with that of Google Translate and demonstrate that fast, high-quality systems can be produced to facilitate multilingual deliberation in a secure environment.

Open Access Article

Investigating Online Art Search through Quantitative Behavioral Data and Machine Learning Techniques

Analytics 2023, 2(2), 359-392; https://doi.org/10.3390/analytics2020021 - 26 Apr 2023

Abstract

►▼

Show Figures

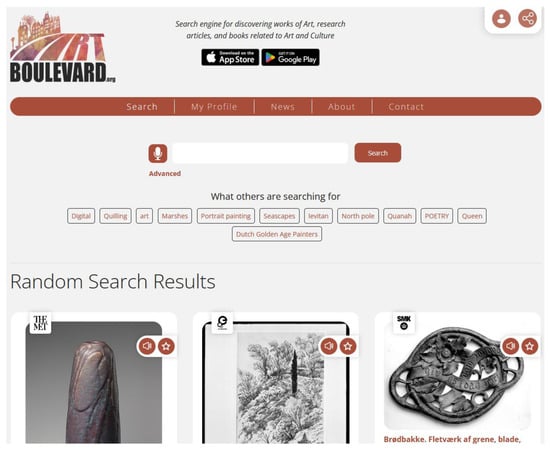

Studying searcher behavior has been a cornerstone of search engine research for decades, since it can lead to a better understanding of user needs and allow for an improved user experience. Going beyond descriptive data analysis and statistics, studies have been utilizing the

[...] Read more.

Studying searcher behavior has been a cornerstone of search engine research for decades, since it can lead to a better understanding of user needs and an improved user experience. Going beyond descriptive data analysis and statistics, studies have used machine learning to further investigate how users behave during general-purpose searching. However, the thematic content of a search greatly affects many aspects of user behavior, which often deviates from general-purpose search behavior. This study therefore focuses specifically on the fields of Art and Cultural Heritage. Insights derived from behavioral data can help culture and art institutions streamline their online presence and better understand their user base. Existing research in this field often focuses on lab studies and explicit user feedback, whereas this study takes advantage of real-usage quantitative data and analyzes it with machine learning. Using data collected from real-world usage of the Art Boulevard proprietary search engine for content related to art and culture, together with machine-learning-powered tools and methodologies, this article investigates the peculiarities of art-related online searches. Clustering identified various archetypes of art search sessions, providing insight into the variety of ways in which users interacted with the search engine. Additionally, extreme gradient boosting was used to document the metrics most likely to predict the success of a search session, underlining the importance of various aspects of user activity for search success. Finally, topic modeling applied to the textual information of user-clicked results revealed the thematic elements that dominated user interest, providing an overview of prevalent themes in the fields of art and culture. Preferred results revolved mostly around traditional visual art themes, while academic and historical topics also had a strong presence.

Open Access Article

The AI Learns to Lie to Please You: Preventing Biased Feedback Loops in Machine-Assisted Intelligence Analysis

Analytics 2023, 2(2), 350-358; https://doi.org/10.3390/analytics2020020 - 18 Apr 2023

Cited by 1

Abstract

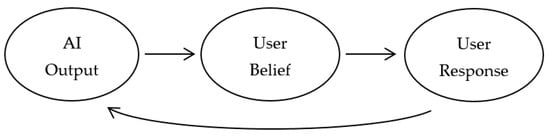

Researchers are starting to design AI-powered systems to automatically select and summarize the reports most relevant to each analyst, which raises the issue of bias in the information presented. This article focuses on the selection of relevant reports without an explicit query, a task known as recommendation. Drawing on previous work documenting the existence of human-machine feedback loops in recommender systems, this article reviews potential biases and mitigations in the context of intelligence analysis. Such loops can arise when behavioral “engagement” signals such as clicks or user ratings are used to infer the value of displayed information. Even worse, there can be feedback loops in the collection of intelligence information because users may also be responsible for tasking collection. Avoiding misalignment feedback loops requires an alternate, ongoing, non-engagement signal of information quality. Existing evaluation scales for intelligence product quality and rigor, such as the IC Rating Scale, could provide ground-truth feedback. This sparse data can be used in two ways: for human supervision of average performance and to build models that predict human survey ratings for use at recommendation time. Both techniques are widely used today by social media platforms. Open problems include the design of an ideal human evaluation method, the cost of skilled human labor, and the sparsity of the resulting data.
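The engagement feedback loop described in this abstract can be illustrated with a toy simulation. This is a minimal sketch, not the article's model: the position-bias values, report names, and quality scores are hypothetical, and expected clicks stand in for real user behavior. A recommender that re-ranks by accumulated clicks keeps rewarding whichever report it already shows first, while ranking by an independent quality rating (standing in for a human evaluation such as the IC Rating Scale, and assumed here to equal true quality) does not depend on past exposure.

```python
# Hypothetical examination probabilities for ranked slots 0, 1, 2:
# users are far more likely to look at (and click) the top slot.
POSITION_BIAS = [0.9, 0.3, 0.1]


def rank_by_engagement(quality: dict[str, float], rounds: int) -> list[str]:
    """Re-rank each round by accumulated expected clicks (an engagement signal)."""
    clicks = {item: 0.0 for item in quality}
    order = list(quality)  # initial display order: dict insertion order
    for _ in range(rounds):
        # Expected clicks = chance the slot is examined * item's intrinsic appeal.
        for slot, item in enumerate(order):
            clicks[item] += POSITION_BIAS[slot] * quality[item]
        # The recommender trusts the clicks it just induced: the feedback loop.
        order = sorted(quality, key=lambda item: clicks[item], reverse=True)
    return order


def rank_by_rating(ratings: dict[str, float]) -> list[str]:
    """Rank by an independent, non-engagement quality rating."""
    return sorted(ratings, key=lambda item: ratings[item], reverse=True)


# Hypothetical reports: the weakest one happens to be displayed first initially.
quality = {"report_low": 0.4, "report_mid": 0.6, "report_high": 0.9}
print(rank_by_engagement(quality, rounds=10))
print(rank_by_rating(quality))
```

Because the top slot is examined far more often, `report_low` accumulates the most clicks every round and never loses its position, even though `report_high` has the highest intrinsic quality. Ranking by the rating instead orders the reports by quality regardless of where they were first shown.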

(This article belongs to the Special Issue Selected Papers from the 2022 Summer Conference on Applied Data Science (SCADS))

Open Access Editorial

Data Stream Analytics

Analytics 2023, 2(2), 346-349; https://doi.org/10.3390/analytics2020019 - 14 Apr 2023

Abstract

The human brain works in such a complex way that we have not yet managed to decipher its functional mysteries [...]

(This article belongs to the Special Issue Feature Papers in Analytics)

Open Access Article

Development of a Dynamically Adaptable Routing System for Data Analytics Insights in Logistic Services

Analytics 2023, 2(2), 328-345; https://doi.org/10.3390/analytics2020018 - 13 Apr 2023

Abstract

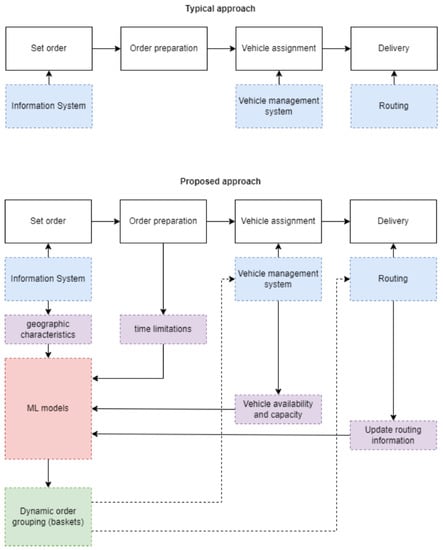

This work proposes an effective solution to the Vehicle Routing Problem that takes into account all phases of the delivery process. When compared with real-world data, the findings are encouraging and demonstrate the value of incorporating Machine Learning algorithms into the process. Several algorithms were combined with a modified Hopfield network to deliver the optimal solution to a multi-objective problem on a platform capable of monitoring the various phases of the process. Additionally, a system providing viable insights and analytics regarding the orders was developed. The results show a maximum distance saving of 25% and a maximum overall delivery-time saving of 14%.

Open Access Article

Metric Ensembles Aid in Explainability: A Case Study with Wikipedia Data

Analytics 2023, 2(2), 315-327; https://doi.org/10.3390/analytics2020017 - 07 Apr 2023

Abstract

In recent years, as machine learning models have become larger and more complex, it has become both more difficult and more important to explain and interpret their results, both to prevent model errors and to inspire confidence among end users. As a result, there has been significant and growing interest in explainability as a highly desirable model trait. Similarly, ensemble methods, which aggregate results from multiple (often simple) models or metrics in order to outperform models that optimize for a single metric, have received much recent attention. We argue that the latter can actually assist with the former: a model that optimizes for several metrics has a base level of explainability built in, and this explainability can be leveraged not only for user confidence but also to fine-tune the weights between the metrics themselves in an intuitive way. We present a case study of this benefit, in which we obtain clear, explainable results from an aggregate of five simple relevance metrics, using Wikipedia data as a proxy for a large text-based recommendation problem. We show not only that the simplicity and multiplicity of these metrics can be leveraged for explainability, but also that this very explainability can lead to an intuitive fine-tuning process that improves the model itself.
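The idea that a weighted aggregate of simple metrics carries its own explanation can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the four metric names, their weights, and the candidate pages are hypothetical (the abstract does not list the article's five metrics), and each metric is assumed to be pre-normalized to [0, 1]. Because the score is a weighted sum, each metric's contribution can be reported directly, and adjusting a weight after inspecting those contributions is one simple, intuitive form of fine-tuning.

```python
# Hypothetical relevance metrics and weights, for illustration only.
WEIGHTS = {"title_overlap": 0.4, "shared_links": 0.3, "category_match": 0.2, "popularity": 0.1}


def score(metrics: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted sum of per-metric scores (each assumed normalized to [0, 1])."""
    return sum(weights[name] * metrics.get(name, 0.0) for name in weights)


def explain(metrics: dict[str, float], weights: dict[str, float]) -> list[tuple[str, float]]:
    """Per-metric contributions to the score, largest first: the built-in explanation."""
    contributions = {name: weights[name] * metrics.get(name, 0.0) for name in weights}
    return sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)


def rank(candidates: dict[str, dict[str, float]], weights: dict[str, float]) -> list[str]:
    """Order candidate pages by aggregate score, best first."""
    return sorted(candidates, key=lambda c: score(candidates[c], weights), reverse=True)


# Hypothetical candidate pages with precomputed metric values.
candidates = {
    "Page A": {"title_overlap": 1.0, "shared_links": 0.0, "category_match": 0.5, "popularity": 0.0},
    "Page B": {"title_overlap": 0.5, "shared_links": 1.0, "category_match": 0.0, "popularity": 1.0},
}

print(rank(candidates, WEIGHTS))
print(explain(candidates["Page B"], WEIGHTS))

# If the explanation shows an unwanted metric (here, popularity) tipping the
# ranking, its weight can be lowered directly; the weights still sum to 1.
tuned = {**WEIGHTS, "title_overlap": 0.5, "popularity": 0.0}
print(rank(candidates, tuned))
```

With the initial weights, Page B ranks first (0.6 vs. 0.5), and its explanation shows `shared_links` and `popularity` driving that result. After the hypothetical reweighting, Page A moves ahead (0.6 vs. 0.55), illustrating how an interpretable breakdown can guide weight adjustments.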

(This article belongs to the Special Issue Selected Papers from the 2022 Summer Conference on Applied Data Science (SCADS))

Special Issues

Special Issue in Analytics

Visual Analytics: Techniques and Applications

Guest Editors: Katerina Vrotsou, Kostiantyn Kucher

Deadline: 30 November 2023

Special Issue in Analytics

New Insights in Learning Analytics

Guest Editors: Vivekanandan Suresh Kumar, Kinshuk

Deadline: 31 December 2023