Investigating the Accuracy of Autoregressive Recurrent Networks Using Hierarchical Aggregation Structure-Based Data Partitioning

Unsupervised Deep Learning for Structural Health Monitoring

Massive Parallel Alignment of RNA-seq Reads in Serverless Computing

Blockchain Technology Adoption Barriers and Enablers for Smart and Sustainable Agriculture

Journal Description

Big Data and Cognitive Computing is an international, scientific, peer-reviewed, open access journal of big data and cognitive computing published quarterly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), dblp, Inspec, Ei Compendex, and other databases.

- Journal Rank: CiteScore - Q1 (Management Information Systems)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 16.4 days after submission; acceptance to publication is undertaken in 3.9 days (median values for papers published in this journal in the first half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 3.7 (2022)

Latest Articles

Innovative Robotic Technologies and Artificial Intelligence in Pharmacy and Medicine: Paving the Way for the Future of Health Care—A Review

Big Data Cogn. Comput. 2023, 7(3), 147; https://doi.org/10.3390/bdcc7030147 - 30 Aug 2023

Abstract

The future of innovative robotic technologies and artificial intelligence (AI) in pharmacy and medicine is promising, with the potential to revolutionize various aspects of health care. These advances aim to increase efficiency, improve patient outcomes, and reduce costs while addressing pressing challenges such as personalized medicine and the need for more effective therapies. This review examines the major advances in robotics and AI in the pharmaceutical and medical fields, analyzing the advantages, obstacles, and potential implications for future health care. In addition, prominent organizations and research institutions leading the way in these technological advancements are highlighted, showcasing their pioneering efforts in creating and utilizing state-of-the-art robotic solutions in pharmacy and medicine. By thoroughly analyzing the current state of robotic technologies in health care and exploring the possibilities for further progress, this work aims to provide readers with a comprehensive understanding of the transformative power of robotics and AI in the evolution of the healthcare sector. Striking a balance between embracing technology and preserving the human touch, investing in R&D, and establishing regulatory frameworks within ethical guidelines will shape a future for robotics and AI systems. The future of pharmacy and medicine is in the seamless integration of robotics and AI systems to benefit patients and healthcare providers.

Open Access Communication

Enhancing Speech Emotions Recognition Using Multivariate Functional Data Analysis

Big Data Cogn. Comput. 2023, 7(3), 146; https://doi.org/10.3390/bdcc7030146 - 25 Aug 2023

Abstract

►▼

Show Figures

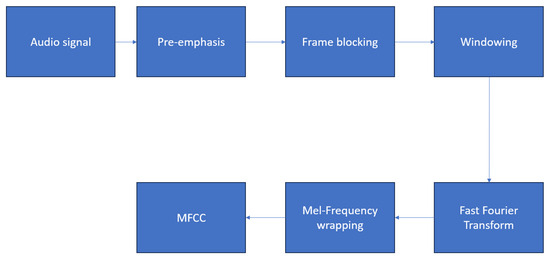

Speech Emotions Recognition (SER) has gained significant attention in the fields of human–computer interaction and speech processing. In this article, we present a novel approach to improve SER performance by interpreting the Mel Frequency Cepstral Coefficients (MFCC) as a multivariate functional data object,

[...] Read more.

Speech Emotions Recognition (SER) has gained significant attention in the fields of human–computer interaction and speech processing. In this article, we present a novel approach to improve SER performance by interpreting the Mel Frequency Cepstral Coefficients (MFCC) as a multivariate functional data object, which accelerates learning while maintaining high accuracy. To treat MFCCs as functional data, we preprocess them as images and apply resizing techniques. By representing MFCCs as functional data, we leverage the temporal dynamics of speech, capturing essential emotional cues more effectively. Consequently, this enhancement significantly contributes to the learning process of SER methods without compromising performance. Subsequently, we employ a supervised learning model, specifically a functional Support Vector Machine (SVM), directly on the MFCC represented as functional data. This enables the utilization of the full functional information, allowing for more accurate emotion recognition. The proposed approach is rigorously evaluated on two distinct databases, EMO-DB and IEMOCAP, serving as benchmarks for SER evaluation. Our method demonstrates competitive results in terms of accuracy, showcasing its effectiveness in emotion recognition. Furthermore, our approach significantly reduces the learning time, making it computationally efficient and practical for real-world applications. In conclusion, our novel approach of treating MFCCs as multivariate functional data objects exhibits superior performance in SER tasks, delivering both improved accuracy and substantial time savings during the learning process. This advancement holds great potential for enhancing human–computer interaction and enabling more sophisticated emotion-aware applications.

Open Access Article

Applied Digital Twin Concepts Contributing to Heat Transition in Building, Campus, Neighborhood, and Urban Scale

Big Data Cogn. Comput. 2023, 7(3), 145; https://doi.org/10.3390/bdcc7030145 - 25 Aug 2023

Abstract

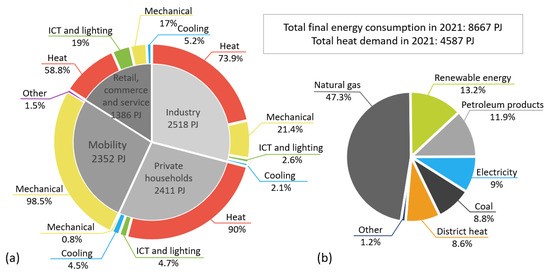

The heat transition is a central pillar of the energy transition, aiming to decarbonize and improve the energy efficiency of the heat supply in both the private and industrial sectors. On the one hand, this is achieved by substituting fossil fuels with renewable

[...] Read more.

The heat transition is a central pillar of the energy transition, aiming to decarbonize and improve the energy efficiency of the heat supply in both the private and industrial sectors. On the one hand, this is achieved by substituting fossil fuels with renewable energy. On the other hand, it involves reducing overall heat consumption and associated transmission and ventilation losses. In addition to refurbishment, digitalization contributes significantly. Despite substantial research on Digital Twins (DTs) for heat transition at different scales, a cross-scale perspective on heat optimization still needs to be developed. In response to this research gap, the present study examines four instances of applied DTs across various scales: building, campus, neighborhood, and urban. The study compares their objectives and conceptual frameworks while also identifying common challenges and potential synergies. The study’s findings indicate that all DT scales face similar data-related challenges, such as gathering, ownership, connectivity, and reliability. Also, hierarchical synergy is identified among the DTs, implying the need for collaboration and exchange. In response to this, the “Wärmewende” data platform, whose objectives and concepts are presented in the paper, promotes research data and knowledge exchange with internal and external stakeholders.

(This article belongs to the Special Issue Digital Twins for Complex Systems)

Open Access Article

Enhancing the Early Detection of Chronic Kidney Disease: A Robust Machine Learning Model

Big Data Cogn. Comput. 2023, 7(3), 144; https://doi.org/10.3390/bdcc7030144 - 16 Aug 2023

Abstract

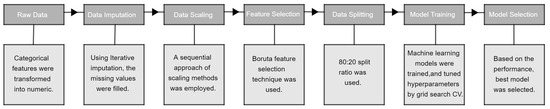

Clinical decision-making in chronic disorder prognosis is often hampered by high variance, leading to uncertainty and negative outcomes, especially in cases such as chronic kidney disease (CKD). Machine learning (ML) techniques have emerged as valuable tools for reducing randomness and enhancing clinical decision-making.

[...] Read more.

Clinical decision-making in chronic disorder prognosis is often hampered by high variance, leading to uncertainty and negative outcomes, especially in cases such as chronic kidney disease (CKD). Machine learning (ML) techniques have emerged as valuable tools for reducing randomness and enhancing clinical decision-making. However, conventional methods for CKD detection often lack accuracy due to their reliance on limited sets of biological attributes. This research proposes a novel ML model for predicting CKD, incorporating various preprocessing steps, feature selection, a hyperparameter optimization technique, and ML algorithms. To address challenges in medical datasets, we employ iterative imputation for missing values and a novel sequential approach for data scaling, combining robust scaling, z-standardization, and min-max scaling. Feature selection is performed using the Boruta algorithm, and the model is developed using ML algorithms. The proposed model was validated on the UCI CKD dataset, achieving outstanding performance with 100% accuracy. Our approach, combining innovative preprocessing steps, the Boruta feature selection, and the k-nearest neighbors algorithm, along with a hyperparameter optimization using grid-search cross-validation (CV), demonstrates its effectiveness in enhancing the early detection of CKD. This research highlights the potential of ML techniques in improving clinical support systems and reducing the impact of uncertainty in chronic disorder prognosis.

Full article

(This article belongs to the Special Issue Big Data in Health Care Information Systems)

►▼

Show Figures

Figure 1

Open AccessReview

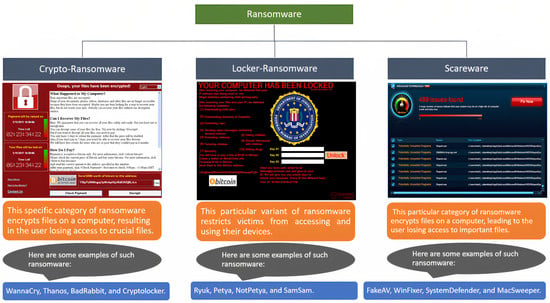

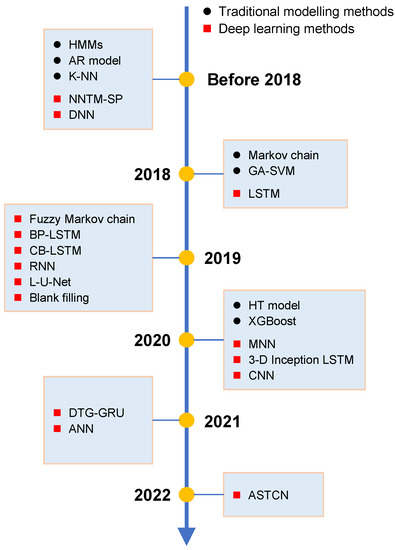

Ransomware Detection Using Machine Learning: A Survey

Big Data Cogn. Comput. 2023, 7(3), 143; https://doi.org/10.3390/bdcc7030143 - 16 Aug 2023

Abstract

Ransomware attacks pose significant security threats to personal and corporate data and information. The owners of computer-based resources suffer from verification and privacy violations, monetary losses, and reputational damage due to successful ransomware assaults. As a result, it is critical to accurately and swiftly identify ransomware. Numerous methods have been proposed for identifying ransomware, each with its own advantages and disadvantages. The main objective of this research is to discuss current trends in and potential future debates on automated ransomware detection. This document includes an overview of ransomware, a timeline of assaults, and details on their background. It also provides comprehensive research on existing methods for identifying, avoiding, minimizing, and recovering from ransomware attacks. An analysis of studies between 2017 and 2022 is another advantage of this research. This provides readers with up-to-date knowledge of the most recent developments in ransomware detection and highlights advancements in methods for combating ransomware attacks. In conclusion, this research highlights unanswered concerns and potential research challenges in ransomware detection.

Full article

(This article belongs to the Special Issue Managing Cybersecurity Threats and Increasing Organizational Resilience)

►▼

Show Figures

Figure 1

Open AccessArticle

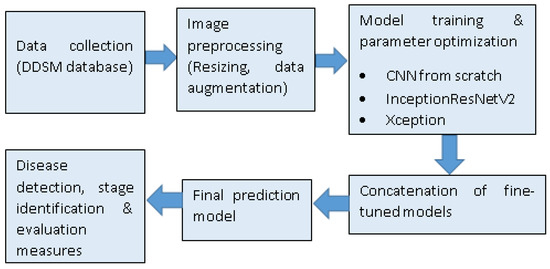

Breast Cancer Classification Using Concatenated Triple Convolutional Neural Networks Model

Big Data Cogn. Comput. 2023, 7(3), 142; https://doi.org/10.3390/bdcc7030142 - 16 Aug 2023

Abstract

Improved disease prediction accuracy and reliability are the main concerns in the development of models for the medical field. This study examined methods for increasing classification accuracy and proposed a precise and reliable framework for categorizing breast cancers using mammography scans. Concatenated Convolutional Neural Networks (CNN) were developed based on three models: Two by transfer learning and one entirely from scratch. Misclassification of lesions from mammography images can also be reduced using this approach. Bayesian optimization performs hyperparameter tuning of the layers, and data augmentation will refine the model by using more training samples. Analysis of the model’s accuracy revealed that it can accurately predict disease with 97.26% accuracy in binary cases and 99.13% accuracy in multi-classification cases. These findings are in contrast with recent studies on the same issue using the same dataset and demonstrated a 16% increase in multi-classification accuracy. In addition, an accuracy improvement of 6.4% was achieved after hyperparameter modification and augmentation. Thus, the model tested in this study was deemed superior to those presented in the extant literature. Hence, the concatenation of three different CNNs from scratch and transfer learning allows the extraction of distinct and significant features without leaving them out, enabling the model to make exact diagnoses.

Full article

Figure 1

Open AccessArticle

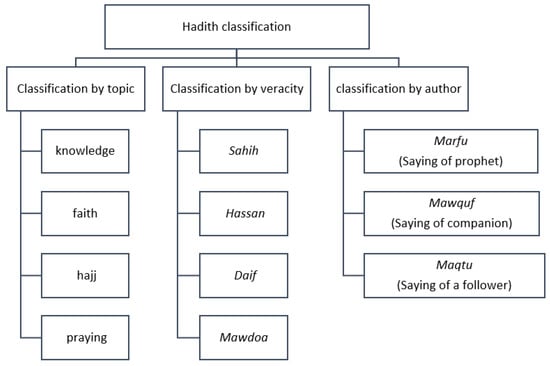

Hadiths Classification Using a Novel Author-Based Hadith Classification Dataset (ABCD)

Big Data Cogn. Comput. 2023, 7(3), 141; https://doi.org/10.3390/bdcc7030141 - 14 Aug 2023

Abstract

Religious studies are fertile ground for Natural Language Processing (NLP), because all religions record their instructions as written texts. In this paper, we apply NLP to Islamic Hadiths, which are the written traditions, sayings, actions, approvals, and discussions of the Prophet Muhammad, his companions, or his followers. A Hadith is composed of two parts: the chain of narrators (Sanad) and the content of the Hadith (Matn). A Hadith is transmitted from its author to a Hadith book author through a chain of narrators. The problem we address is the classification of Hadiths based on their origin of narration. This is important for several reasons. First, it helps determine the authenticity and reliability of the Hadiths. Second, it helps trace the chain of narration and identify the narrators involved in transmitting Hadiths. Finally, it helps understand the historical and cultural contexts in which Hadiths were transmitted, and the different levels of authority attributed to the narrators. To the best of our knowledge, and based on our literature review, this problem has not previously been solved using machine/deep learning approaches. To solve this classification problem, we created a novel Author-Based Hadith Classification Dataset (ABCD) collected from classical Hadith books. The ABCD contains 29 K Hadiths and 18 K unique narrators, with all their information. We applied machine learning (ML) and deep learning (DL) approaches. ML was applied to Sanad and Matn separately; then, we did the same with DL. The results revealed that ML performs better than DL using the Matn input data, with a 77% F1-score. DL performed better than ML using the Sanad input data, with a 92% F1-score. We used precision and recall alongside the F1-score; details of the results are explained at the end of the paper. We claim that the ABCD and the reported results will motivate the community to work in this new area. Our dataset and results will represent a baseline for further research on the same problem.

Open Access Article

An Intelligent Bat Algorithm for Web Service Selection with QoS Uncertainty

Big Data Cogn. Comput. 2023, 7(3), 140; https://doi.org/10.3390/bdcc7030140 - 10 Aug 2023

Abstract

Currently, the selection of web services with an uncertain quality of service (QoS) is gaining much attention in the service-oriented computing paradigm (SOC). In fact, searching for a service composition that fulfills a complex user’s request is known to be NP-complete. The search time is mainly dependent on the number of requested tasks, the size of the available services, and the size of the QoS realizations (i.e., sample size). To handle this problem, we propose a two-stage approach that reduces the search space using heuristics for ranking the task services and a bat algorithm metaheuristic for selecting the final near-optimal compositions. The fitness used by the metaheuristic aims to fulfil all the global constraints of the user. The experimental study showed that the ranking heuristics, termed “fuzzy Pareto dominance” and “Zero-order stochastic dominance”, are highly effective compared to the other heuristics and most of the existing state-of-the-art methods.

Full article

Figure 1

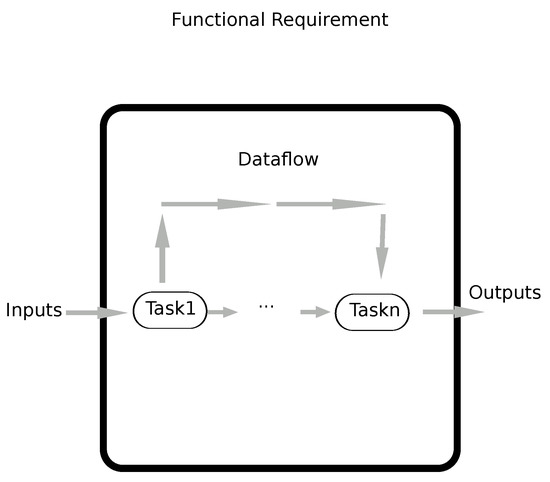

Open AccessArticle

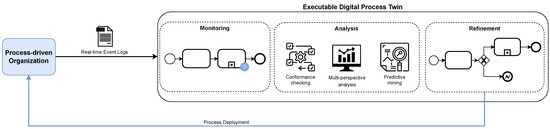

Executable Digital Process Twins: Towards the Enhancement of Process-Driven Systems

Big Data Cogn. Comput. 2023, 7(3), 139; https://doi.org/10.3390/bdcc7030139 - 08 Aug 2023

Abstract

The development of process-driven systems and the advancements in digital twins have led to the birth of new ways of monitoring and analyzing systems, i.e., digital process twins. Specifically, a digital process twin can allow the monitoring of system behavior and the analysis of the execution status to improve the whole system. However, the concept of the digital process twin is still theoretical, and process-driven systems cannot really benefit from them. In this regard, this work discusses how to effectively exploit a digital process twin and proposes an implementation that combines the monitoring, refinement, and enactment of system behavior. We demonstrated the proposed solution in a multi-robot scenario.

Full article

(This article belongs to the Special Issue Digital Twins for Complex Systems)

►▼

Show Figures

Figure 1

Open AccessArticle

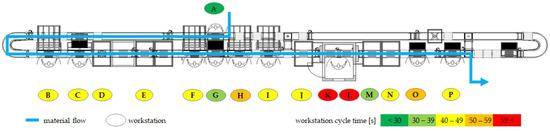

Cumulative and Rolling Horizon Prediction of Overall Equipment Effectiveness (OEE) with Machine Learning

Big Data Cogn. Comput. 2023, 7(3), 138; https://doi.org/10.3390/bdcc7030138 - 02 Aug 2023

Abstract

Nowadays, one of the important and indispensable conditions for the effectiveness and competitiveness of industrial companies is the high efficiency of manufacturing and assembly. Using a variety of methods and tools, these enterprises systematically monitor their efficiency metrics with Key Performance Indicators (KPIs). One of the most frequently used of these metrics is Overall Equipment Effectiveness (OEE), the product of availability, performance, and quality. In addition to monitoring, it is also necessary to predict efficiency, which can be implemented with the support of machine learning techniques. This paper presents and compares several supervised machine learning techniques, among others polynomial regression, lasso regression, ridge regression, and gradient boost regression. The aim of this article is to determine the best estimation method for a semiautomatic assembly line and large batch sizes. The case study, presented with a real industrial example, answers which of the cumulative or rolling horizon prediction methods is more accurate.
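The abstract defines OEE as the product of availability, performance, and quality. A minimal sketch of that arithmetic follows; the function name `oee`, the requirement that each factor be a fraction between 0 and 1, and the sample values are illustrative assumptions, not taken from the paper.

```python
def oee(availability: float, performance: float, quality: float) -> float:
    """Return Overall Equipment Effectiveness as the product of its three factors.

    Each factor is assumed to be a fraction in [0, 1] (an assumption of this sketch).
    """
    factors = {
        "availability": availability,
        "performance": performance,
        "quality": quality,
    }
    for name, value in factors.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
    return availability * performance * quality


# Hypothetical shift: 90% availability, 95% performance, 99% quality.
shift_oee = oee(availability=0.90, performance=0.95, quality=0.99)
print(f"OEE = {shift_oee:.1%}")
```

Because the factors multiply, a modest loss in each one compounds: three factors that each look healthy on their own can still yield an OEE well below any of them.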

Full article

Figure 1

Open AccessArticle

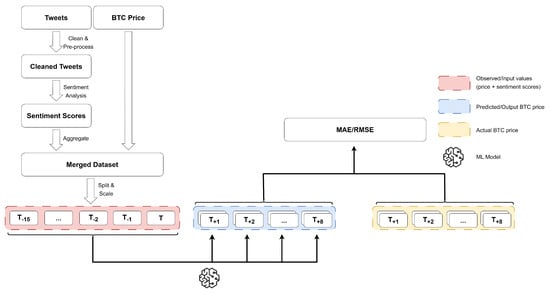

Predicting the Price of Bitcoin Using Sentiment-Enriched Time Series Forecasting

Big Data Cogn. Comput. 2023, 7(3), 137; https://doi.org/10.3390/bdcc7030137 - 31 Jul 2023

Abstract

Recently, various methods to predict the future price of financial assets have emerged. One promising approach is to combine the historic price with sentiment scores derived via sentiment analysis techniques. In this article, we focus on predicting the future price of Bitcoin, which is currently the most popular cryptocurrency. More precisely, we propose a hybrid approach, combining time series forecasting and sentiment prediction from microblogs, to predict the intraday price of Bitcoin. Moreover, in addition to standard sentiment analysis methods, we are the first to employ a fine-tuned BERT model for this task. We also introduce a novel weighting scheme in which the weight of the sentiment of each tweet depends on the number of its creator’s followers. For evaluation, we consider periods with strongly varying ranges of Bitcoin prices. This enables us to assess the models w.r.t. robustness and generalization to varied market conditions. Our experiments demonstrate that BERT-based sentiment analysis and the proposed weighting scheme improve upon previous methods. Specifically, our hybrid models that use linear regression as the underlying forecasting algorithm perform best in terms of the mean absolute error (MAE of 2.67) and root mean squared error (RMSE of 3.28). However, more complicated models, particularly long short-term memory networks and temporal convolutional networks, tend to have generalization and overfitting issues, resulting in considerably higher MAE and RMSE scores.
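The abstract mentions a weighting scheme in which each tweet's sentiment counts in proportion to its author's follower count, and it reports results as MAE and RMSE. The sketch below illustrates both ideas. The abstract does not give the weighting function, so the `log(1 + followers)` weight used here is purely an assumption, as are the function names and the sample data.

```python
import math


def follower_weighted_sentiment(tweets):
    """Weighted mean sentiment over (score, followers) pairs.

    The weight log(1 + followers) is a hypothetical choice for this sketch;
    the paper's actual weighting function is not stated in the abstract.
    """
    weights = [math.log1p(followers) for _, followers in tweets]
    total = sum(weights)
    if total == 0:
        return 0.0  # no tweets, or no author has any followers
    return sum(w * score for w, (score, _) in zip(weights, tweets)) / total


def mae(actual, predicted):
    """Mean absolute error between two equal-length sequences."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def rmse(actual, predicted):
    """Root mean squared error between two equal-length sequences."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


# Hypothetical tweets: (sentiment score in [-1, 1], author's follower count).
sample = [(0.8, 120_000), (-0.4, 35), (0.1, 2_400)]
print(follower_weighted_sentiment(sample))
print(mae([1, 2, 3], [2, 2, 5]), rmse([1, 2, 3], [2, 2, 5]))
```

A logarithmic weight keeps very large accounts from dominating the aggregate entirely; a linear weight would be an equally valid reading of the abstract.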

Full article

(This article belongs to the Topic Artificial Intelligence Applications in Financial Technology)

►▼

Show Figures

Figure 1

Open AccessArticle

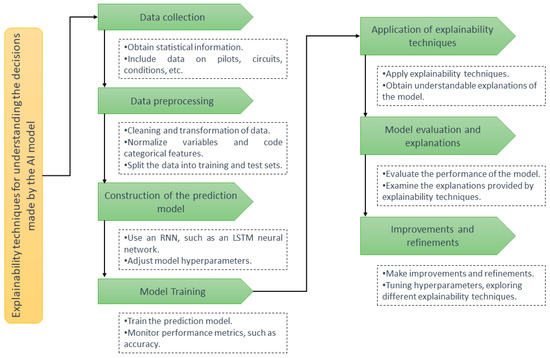

An Approach Based on Recurrent Neural Networks and Interactive Visualization to Improve Explainability in AI Systems

Big Data Cogn. Comput. 2023, 7(3), 136; https://doi.org/10.3390/bdcc7030136 - 31 Jul 2023

Abstract

This paper investigated the importance of explainability in artificial intelligence models and its application in the context of prediction in Formula 1. A step-by-step analysis was carried out, including collecting and preparing data from previous races, training an AI model to make predictions, and applying explainability techniques in the said model. Two approaches were used: the attention technique, which allowed visualizing the most relevant parts of the input data using heat maps, and the permutation importance technique, which evaluated the relative importance of features. The results revealed that feature length and qualifying performance are crucial variables for position predictions in Formula 1. These findings highlight the relevance of explainability in AI models, not only in Formula 1 but also in other fields and sectors, by ensuring fairness, transparency, and accountability in AI-based decision making. The results highlight the importance of considering explainability in AI models and provide a practical methodology for its implementation in Formula 1 and other domains.

Full article

(This article belongs to the Special Issue Deep Network Learning and Its Applications)

►▼

Show Figures

Figure 1

Open AccessArticle

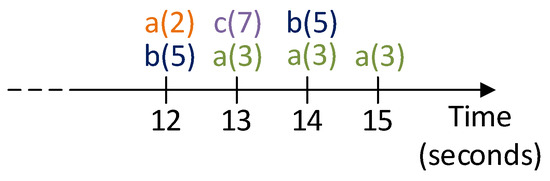

EnviroStream: A Stream Reasoning Benchmark for Environmental and Climate Monitoring

Big Data Cogn. Comput. 2023, 7(3), 135; https://doi.org/10.3390/bdcc7030135 - 31 Jul 2023

Abstract

Stream Reasoning (SR) focuses on developing advanced approaches for applying inference to dynamic data streams; it has become increasingly relevant in various application scenarios such as IoT, Smart Cities, Emergency Management, and Healthcare, despite being a relatively new field of research. The current lack of standardized formalisms and benchmarks has been hindering the comparison between different SR approaches. We proposed a new benchmark, called EnviroStream, for evaluating SR systems on weather and environmental data. The benchmark includes queries and datasets of different sizes. We adopted I-DLV-sr, a recently released SR system based on Answer Set Programming, as a baseline for query modelling and experimentation. We also showcased continuous online reasoning via a web application.

(This article belongs to the Special Issue Big Data and Cognitive Computing in 2023)

Open Access Article

Driving Excellence in Official Statistics: Unleashing the Potential of Comprehensive Digital Data Governance

Big Data Cogn. Comput. 2023, 7(3), 134; https://doi.org/10.3390/bdcc7030134 - 29 Jul 2023

Abstract

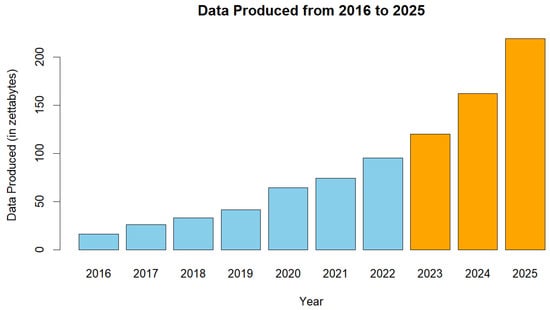

With the ubiquitous use of digital technologies and the consequent data deluge, official statistics faces new challenges and opportunities. In this context, strengthening official statistics through effective data governance will be crucial to ensure reliability, quality, and access to data. This paper presents

[...] Read more.

With the ubiquitous use of digital technologies and the consequent data deluge, official statistics faces new challenges and opportunities. In this context, strengthening official statistics through effective data governance will be crucial to ensure reliability, quality, and access to data. This paper presents a comprehensive framework for digital data governance for official statistics, addressing key components, such as data collection and management, processing and analysis, data sharing and dissemination, as well as privacy and ethical considerations. The framework integrates principles of data governance into digital statistical processes, enabling statistical organizations to navigate the complexities of the digital environment. Drawing on case studies and best practices, the paper highlights successful implementations of digital data governance in official statistics. The paper concludes by discussing future trends and directions, including emerging technologies and opportunities for advancing digital data governance.

Full article

(This article belongs to the Special Issue Revolutionizing Healthcare: Exploring the Latest Advances in Digital Health Technology)

►▼

Show Figures

Figure 1

Open AccessArticle

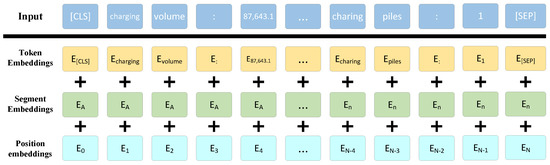

Evaluation Method of Electric Vehicle Charging Station Operation Based on Contrastive Learning

Big Data Cogn. Comput. 2023, 7(3), 133; https://doi.org/10.3390/bdcc7030133 - 24 Jul 2023

Abstract

This paper aims to address the issue of evaluating the operation of electric vehicle charging stations (EVCSs). Previous studies have commonly employed the method of constructing comprehensive evaluation systems, which greatly relies on manual experience for index selection and weight allocation. To overcome this limitation, this paper proposes an evaluation method based on natural language models for assessing the operation of charging stations. By utilizing the proposed SimCSEBERT model, this study analyzes the operational data, user charging data, and basic information of charging stations to predict the operational status and identify influential factors. Additionally, this study compared the evaluation accuracy and impact factor analysis accuracy of the baseline and the proposed model. The experimental results demonstrate that our model achieves a higher evaluation accuracy (operation evaluation accuracy = 0.9464; impact factor analysis accuracy = 0.9492) and effectively assesses the operation of EVCSs. Compared with traditional evaluation methods, this approach exhibits improved universality and a higher level of intelligence. It provides insights into the operation of EVCSs and user demands, allowing for the resolution of supply–demand contradictions that are caused by power supply constraints and the uneven distribution of charging demands. Furthermore, it offers guidance for more efficient and targeted strategies for the operation of charging stations.

Full article

(This article belongs to the Topic Application of Big Data and Deep Learning in Engineering Analysis and Design)

►▼

Show Figures

Figure 1

Open AccessArticle

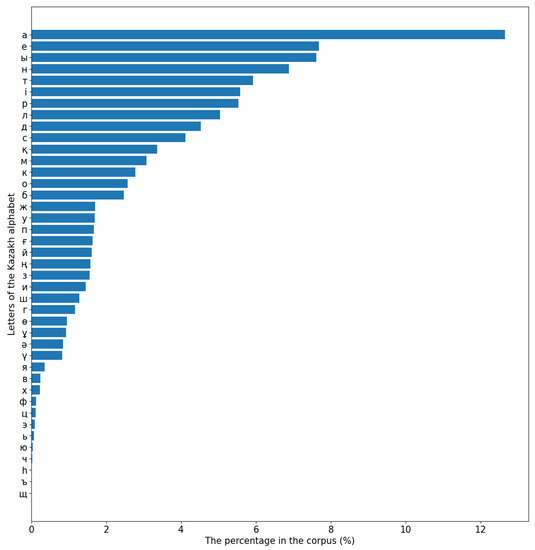

The Development of a Kazakh Speech Recognition Model Using a Convolutional Neural Network with Fixed Character Level Filters

Big Data Cogn. Comput. 2023, 7(3), 132; https://doi.org/10.3390/bdcc7030132 - 20 Jul 2023

Abstract

This study is devoted to the transcription of human speech in the Kazakh language in dynamically changing conditions. It discusses key aspects related to the phonetic structure of the Kazakh language, technical considerations in collecting the transcribed audio corpus, and the use of deep neural networks for speech modeling. A high-quality decoded audio corpus was collected, containing 554 h of data, giving an idea of the frequencies of letters and syllables, as well as demographic parameters such as the gender, age, and region of residence of native speakers. The corpus contains a universal vocabulary and serves as a valuable resource for the development of modules related to speech. Machine learning experiments were conducted using the DeepSpeech2 model, which includes a sequence-to-sequence architecture with an encoder, decoder, and attention mechanism. To increase the reliability of the model, filters initialized with symbol-level embeddings were introduced to reduce the dependence on accurate positioning on object maps. The training process included simultaneous preparation of convolutional filters for spectrograms and symbolic objects. The proposed approach, using a combination of supervised and unsupervised learning methods, resulted in a 66.7% reduction in the weight of the model while maintaining relative accuracy. The evaluation on the test sample showed a 7.6% lower character error rate (CER) compared to existing models, demonstrating its most modern characteristics. The proposed architecture provides deployment on platforms with limited resources. Overall, this study presents a high-quality audio corpus, an improved speech recognition model, and promising results applicable to speech-related applications and languages beyond Kazakh.
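The abstract reports its results as character error rate (CER). As a reminder of how that metric is commonly computed, the sketch below divides the character-level edit distance between a reference transcript and a hypothesis by the reference length. This is the standard definition, not necessarily the exact evaluation script used in the study, and the function names and sample strings are illustrative.

```python
def levenshtein(reference: str, hypothesis: str) -> int:
    """Minimum number of character insertions, deletions, and substitutions."""
    previous = list(range(len(hypothesis) + 1))
    for i, ref_char in enumerate(reference, start=1):
        current = [i]
        for j, hyp_char in enumerate(hypothesis, start=1):
            substitution_cost = 0 if ref_char == hyp_char else 1
            current.append(
                min(
                    previous[j] + 1,                      # deletion
                    current[j - 1] + 1,                   # insertion
                    previous[j - 1] + substitution_cost,  # match or substitution
                )
            )
        previous = current
    return previous[-1]


def cer(reference: str, hypothesis: str) -> float:
    """Character error rate: edit distance divided by the reference length."""
    if not reference:
        raise ValueError("reference transcript must not be empty")
    return levenshtein(reference, hypothesis) / len(reference)


# Illustrative strings only; real evaluation would use transcribed audio.
print(cer("kitten", "sitting"))
```

Note that CER can exceed 1.0 when the hypothesis contains many more characters than the reference, since insertions are counted against the reference length.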

(This article belongs to the Special Issue Advances in Natural Language Processing and Text Mining)

Open Access Article

A Real-Time Vehicle Speed Prediction Method Based on a Lightweight Informer Driven by Big Temporal Data

Big Data Cogn. Comput. 2023, 7(3), 131; https://doi.org/10.3390/bdcc7030131 - 15 Jul 2023

Abstract

At present, the design of modern vehicles requires improving driving performance while meeting emission standards, leading to increasingly complex power systems. In autonomous driving systems, accurate, real-time vehicle speed prediction is one of the key factors in achieving automated driving. Accurate prediction and optimal control based on future vehicle speeds are key strategies for dealing with ever-changing and complex actual driving environments. However, predicting driver behavior is uncertain and may be influenced by the surrounding driving environment, such as weather and road conditions. To overcome these limitations, we propose a real-time vehicle speed prediction method based on a lightweight deep learning model driven by big temporal data. Firstly, the temporal data collected by automotive sensors are decomposed into a feature matrix through empirical mode decomposition (EMD). Then, an informer model based on the attention mechanism is designed to extract key information for learning and prediction. During the iterative training process of the informer, redundant parameters are removed through importance measurement criteria to achieve real-time inference. Finally, experimental results demonstrate that the proposed method achieves superior speed prediction performance compared with state-of-the-art statistical modelling methods and deep learning models. Tests on edge computing devices also confirmed that the designed model can meet the requirements of actual tasks.

Open Access Article

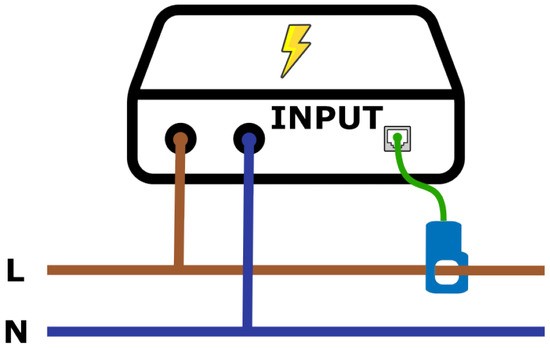

A Guide to Data Collection for Computation and Monitoring of Node Energy Consumption

Big Data Cogn. Comput. 2023, 7(3), 130; https://doi.org/10.3390/bdcc7030130 - 11 Jul 2023

Abstract

The digital transition behind the new industrial revolution is largely driven by the application of intelligence and data. This boost leads to an increase in energy consumption, much of it associated with computing in data centers. This fact clashes with the growing need to save energy and improve energy efficiency, and it requires a more optimized use of resources. The deployment of new services in edge and cloud computing, virtualization, and software-defined networks requires a better understanding of consumption patterns, aimed at more efficient and sustainable models and a reduction in carbon footprints. These patterns can be exploited by machine, deep, and reinforcement learning techniques in pursuit of energy consumption optimization, which can ideally improve the energy efficiency of data centers and big computing servers providing these kinds of services. For the application of these techniques, it is essential to investigate data collection processes to create initial information points. Datasets also need to be created to analyze how to diagnose systems and identify new ways of optimization. This work describes a data collection methodology used to create datasets that collect consumption data from a real-world work environment dedicated to data centers, server farms, or similar architectures. Specifically, it covers the entire process of energy stimuli generation, data extraction, and data preprocessing. The method is offered to the scientific community for evaluation and reproduction through an online repository created for this work, which hosts all the code for download.

(This article belongs to the Special Issue Energy-Efficient IoT (Internet of Things) and Big Data Challenges for Connected Intelligence)

Open Access Article

An End-to-End Online Traffic-Risk Incident Prediction in First-Person Dash Camera Videos

Big Data Cogn. Comput. 2023, 7(3), 129; https://doi.org/10.3390/bdcc7030129 - 06 Jul 2023

Abstract

Predicting traffic risk incidents in first-person videos helps to ensure that a safe reaction can occur before the incident happens, across a wide range of driving scenarios and conditions. One challenge in building advanced driver assistance systems is to create an early warning system that lets the driver react safely and accurately while perceiving the diversity of traffic-risk predictions in real-world applications. In this paper, we aim to bridge the gap by investigating two key research questions regarding the driver’s current status of driving through online videos and the types of other moving objects that lead to dangerous situations. To address these problems, we propose an end-to-end two-stage architecture: in the first stage, unsupervised learning is applied to collect all suspicious events on actual driving; in the second stage, supervised learning is used to classify all suspicious event results from the first stage into a common event type. To enrich the classification type, the metadata from the result of the first stage is sent to the second stage to handle the data limitation while training our classification model. Through the online situation, our method runs

(This article belongs to the Special Issue Deep Network Learning and Its Applications)

Open Access Article

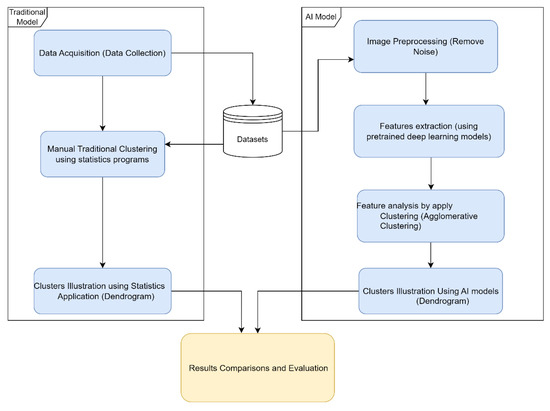

Transfer Learning Approach to Seed Taxonomy: A Wild Plant Case Study

Big Data Cogn. Comput. 2023, 7(3), 128; https://doi.org/10.3390/bdcc7030128 - 04 Jul 2023

Cited by 2

Abstract

Plant taxonomy is the scientific study of the classification and naming of various plant species. It is a branch of biology that aims to categorize and organize the diverse variety of plant life on earth. Traditionally, plant taxonomy has been performed using morphological and anatomical characteristics, such as leaf shape, flower structure, and seed and fruit characters. Artificial intelligence (AI), machine learning, and especially deep learning can also play an instrumental role in plant taxonomy by automating the process of categorizing plant species based on the available features. This study investigated transfer learning techniques to analyze images of plants and extract features that can be used to cluster the species hierarchically using the k-means clustering algorithm. Several pretrained deep learning models were employed and evaluated. Two separate datasets were used in the study, comprising seed images of wild plants collected from Egypt. Extensive experiments using the transfer learning method (DenseNet201) demonstrated that the proposed methods achieved superior accuracy compared to traditional methods, with a highest accuracy of 93% and an F1-score and area under the curve (AUC) of 95%. This compares favorably with the state-of-the-art approaches in the literature.

(This article belongs to the Special Issue Recent Advances in Deep Transfer Learning Applications for Image Processing Problems and Big Data)

Topics

Topic in

AI, Applied Sciences, BDCC, Remote Sensing, Sensors

Deep Learning and Transformers’ Methods Applied to Remotely Captured Data

Topic Editors: Moulay A. Akhloufi, Mozhdeh Shahbazi

Deadline: 30 September 2023

Topic in

AI, Algorithms, Applied Sciences, BDCC, MAKE, Sensors

Artificial Intelligence and Fuzzy Systems

Topic Editors: Amelia Zafra, Jose Manuel Soto Hidalgo

Deadline: 30 November 2023

Topic in

Applied Sciences, BDCC, Photonics, Processes, Remote Sensing, Automation

Advances in AI-Empowered Beamline Automation and Data Science in Advanced Photon Sources

Topic Editors: Yi Zhang, Xiaogang Yang, Chunpeng Wang, Junrong Zhang

Deadline: 20 December 2023

Topic in

AI, Applied Sciences, BDCC, Sensors, Information

Applied Computing and Machine Intelligence (ACMI)

Topic Editors: Chuan-Ming Liu, Wei-Shinn Ku

Deadline: 31 December 2023

Special Issues

Special Issue in

BDCC

Managing Cybersecurity Threats and Increasing Organizational Resilience

Guest Editors: Peter R.J. Trim, Yang-Im Lee

Deadline: 30 September 2023

Special Issue in

BDCC

Data Science in Health Care

Guest Editors: Nadav Rappoport, Yuval Shahar, Hyojung Paik

Deadline: 20 October 2023

Special Issue in

BDCC

Cyber Security in Big Data Era

Guest Editor: Fabrizio Baiardi

Deadline: 27 October 2023

Special Issue in

BDCC

Research Progress in Artificial Intelligence and Social Network Analysis

Guest Editors: Yong Tang, Chaobo He, Chengzhou Fu

Deadline: 10 November 2023

Topical Collections

Topical Collection in

BDCC

Machine Learning and Artificial Intelligence for Health Applications on Social Networks

Collection Editor: Carmela Comito