Journal Description

Data

Data is a peer-reviewed, open access journal on data in science, with the aim of enhancing data transparency and reusability. The journal publishes in two sections: one on the collection, treatment and analysis methods of data in science, and one publishing descriptions of scientific and scholarly datasets (one dataset per paper). The journal is published monthly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), dblp, Inspec, RePEc, and other databases.

- Journal Rank: CiteScore - Q2 (Information Systems and Management)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 21 days after submission; acceptance to publication is undertaken in 4.3 days (median values for papers published in this journal in the first half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 2.6 (2022); 5-Year Impact Factor: 3.0 (2022)

Latest Articles

Employing Source Code Quality Analytics for Enriching Code Snippets Data

Data 2023, 8(9), 140; https://doi.org/10.3390/data8090140 - 31 Aug 2023

Abstract


The availability of code snippets in online repositories like GitHub has led to an uptick in code reuse, further supporting an open-source, component-based development paradigm. The likelihood of code reuse rises when the code components or snippets are of high quality, especially in terms of readability, which makes their integration and upkeep simpler. Toward this goal, we have developed a dataset of code snippets that takes into account both the functional and the quality characteristics of the snippets. The dataset is based on the CodeSearchNet corpus and adds further information, including static analysis metrics, code violations, readability assessments, and source code similarity metrics. Using this dataset, both software researchers and practitioners can conveniently find and employ code snippets that satisfy diverse functional needs while also demonstrating excellent readability and maintainability.


(This article belongs to the Section Information Systems and Data Management)


Open Access Data Descriptor

Dataset of Multi-Aspect Integrated Migration Indicators

Data 2023, 8(9), 139; https://doi.org/10.3390/data8090139 - 31 Aug 2023

Abstract


New branches of research propose using non-traditional data sources to study migration trends, in search of original methodologies for answering open questions about cross-border human mobility. New knowledge extracted from these data must be validated using traditional data, which, however, are distributed across different sources and are difficult to integrate. In this context, we present the Multi-aspect Integrated Migration Indicators (MIMI) dataset, a new dataset of migration indicators (flows and stocks) and possible migration drivers (cultural, economic, demographic and geographic indicators). It was obtained through the acquisition, transformation and integration of disparate traditional datasets together with social network data from Facebook (Social Connectedness Index). This article describes the process of gathering, embedding and merging traditional and novel variables, resulting in a new multidisciplinary dataset that we believe could contribute significantly to nowcasting and forecasting bilateral migration trends and migration drivers.


Open Access Article

Using Landsat-5 for Accurate Historical LULC Classification: A Comparison of Machine Learning Models

Data 2023, 8(9), 138; https://doi.org/10.3390/data8090138 - 30 Aug 2023

Abstract


This study investigates the application of various machine learning models for land use and land cover (LULC) classification in the Kerch Peninsula. The study utilizes archival field data, cadastral data, and published scientific literature for model training and testing, using Landsat-5 imagery from 1990 as input data. Four machine learning models (deep neural network, Random Forest, support vector machine (SVM), and AdaBoost) are employed, and their hyperparameters are tuned using random search and grid search. Model performance is evaluated through cross-validation and confusion matrices. The deep neural network achieves the highest accuracy (96.2%) and performs well in classifying water, urban lands, open soils, and high vegetation. However, it faces challenges in classifying grasslands, bare lands, and agricultural areas. The Random Forest model achieves an accuracy of 90.5% but struggles with differentiating high vegetation from agricultural lands. The SVM model achieves an accuracy of 86.1%, while the AdaBoost model performs the lowest with an accuracy of 58.4%. The novel contributions of this study include the comparison and evaluation of multiple machine learning models for land use classification in the Kerch Peninsula. The deep neural network and Random Forest models outperform SVM and AdaBoost in terms of accuracy. However, the use of limited data sources such as cadastral data and scientific articles may introduce limitations and potential errors. Future research should consider incorporating field studies and additional data sources for improved accuracy. This study provides valuable insights for land use classification, facilitating the assessment and management of natural resources in the Kerch Peninsula. The findings contribute to informed decision-making processes and lay the groundwork for further research in the field.
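The accuracies quoted above are overall accuracies, i.e., the share of correctly classified samples. A minimal sketch of how such a figure, and the per-class recall that exposes confusions between classes, can be read off a confusion matrix follows; the three class names and all counts are hypothetical and are not results from the study.

```python
def overall_accuracy(confusion):
    """Share of correctly classified samples: diagonal sum over total count."""
    correct = sum(confusion[i][i] for i in range(len(confusion)))
    total = sum(sum(row) for row in confusion)
    return correct / total


def per_class_recall(confusion):
    """Recall per class; rows are true classes, columns are predicted classes."""
    return [row[i] / sum(row) for i, row in enumerate(confusion)]


# Hypothetical counts for illustration only (not results from the paper).
classes = ["water", "high vegetation", "agricultural land"]
cm = [
    [50, 2, 0],
    [3, 40, 7],
    [0, 9, 39],
]

print(f"overall accuracy: {overall_accuracy(cm):.3f}")  # overall accuracy: 0.860
for name, recall in zip(classes, per_class_recall(cm)):
    print(f"{name}: recall {recall:.3f}")
```

In this made-up matrix, the off-diagonal cells between high vegetation and agricultural land lower both classes' recall even though overall accuracy stays fairly high.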


Open Access Article

A Framework for Evaluating Renewable Energy for Decision-Making Integrating a Hybrid FAHP-TOPSIS Approach: A Case Study in Valle del Cauca, Colombia

Data 2023, 8(9), 137; https://doi.org/10.3390/data8090137 - 30 Aug 2023

Abstract

►▼

Show Figures

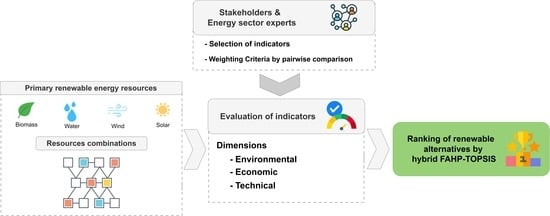

At present, the energy landscape of many countries faces transformational challenges driven by sustainable development objectives, supported by the implementation of clean technologies, such as renewable energy sources, to meet the flexibility and diversification needs of the traditional energy mix. However, integrating these

[...] Read more.

At present, the energy landscape of many countries faces transformational challenges driven by sustainable development objectives and supported by the deployment of clean technologies, such as renewable energy sources, to meet the flexibility and diversification needs of the traditional energy mix. However, integrating these technologies requires a thorough study of the context in which they are developed. Furthermore, an analysis from a sustainability perspective is needed to quantify the impact of proposals on multiple objectives established by stakeholders. This article presents an analysis framework that integrates a method for evaluating the technical feasibility of resources for photovoltaic solar, wind, small hydroelectric, and biomass generation. These resources are used to construct a set of alternatives, which are evaluated using a hybrid FAHP-TOPSIS approach. FAHP-TOPSIS serves as a comparison technique across a collection of technical, economic, and environmental criteria, ranking the alternatives by their level of trade-off between criteria. The results of a case study in Valle del Cauca (Colombia) offer a wide range of alternatives and indicate that a combination of 50% biomass and 50% solar is the best option, assisting decision-making on the correct use of available resources and maximizing the benefits for stakeholders.
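In a hybrid FAHP-TOPSIS scheme, the fuzzy AHP step supplies the criterion weights and TOPSIS ranks the alternatives by their relative closeness to an ideal solution. The sketch below shows only a classic crisp TOPSIS step, assuming the weights are already known; it is not the authors' implementation, and the function name and example data are hypothetical.

```python
import math


def topsis(matrix, weights, benefit):
    """Rank alternatives with classic (crisp) TOPSIS.

    matrix[i][j]: score of alternative i on criterion j
    weights[j]:   criterion weight (assumed given, e.g. from an AHP step)
    benefit[j]:   True if higher is better, False for cost criteria
    Returns closeness coefficients in [0, 1]; higher is better.
    Assumes the alternatives are not all identical on every criterion.
    """
    n_alt, n_crit = len(matrix), len(weights)
    # 1) Vector-normalize each criterion column, then apply its weight.
    norms = [math.sqrt(sum(matrix[i][j] ** 2 for i in range(n_alt)))
             for j in range(n_crit)]
    v = [[weights[j] * matrix[i][j] / norms[j] for j in range(n_crit)]
         for i in range(n_alt)]
    # 2) Positive-ideal and negative-ideal value for each criterion.
    cols = list(zip(*v))
    best = [max(c) if benefit[j] else min(c) for j, c in enumerate(cols)]
    worst = [min(c) if benefit[j] else max(c) for j, c in enumerate(cols)]
    # 3) Euclidean distance to both ideals, then relative closeness.
    scores = []
    for row in v:
        d_best = math.dist(row, best)
        d_worst = math.dist(row, worst)
        scores.append(d_worst / (d_best + d_worst))
    return scores


# Hypothetical example: criterion 0 is a cost (lower is better),
# criterion 1 is a benefit (higher is better), equal weights.
matrix = [[1, 9], [5, 5], [9, 1]]
scores = topsis(matrix, weights=[0.5, 0.5], benefit=[False, True])
print([round(s, 3) for s in scores])  # [1.0, 0.5, 0.0]
```

The first alternative dominates on both criteria and coincides with the positive ideal, so its closeness is 1; a real study would feed in the FAHP-derived weights and many more criteria.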


Open Access Data Descriptor

Knowledge Graph Dataset for Semantic Enrichment of Picture Description in NAPS Database

Data 2023, 8(9), 136; https://doi.org/10.3390/data8090136 - 24 Aug 2023

Abstract


This data description introduces a comprehensive knowledge graph (KG) dataset with detailed information about the relevant high-level semantics of the visual stimuli, stored in the Nencki Affective Picture System (NAPS) repository, that are used to induce emotional states. The dataset contains 6808 systematically and manually assigned annotations for 1356 NAPS pictures in 5 categories, linked to WordNet synsets and Suggested Upper Merged Ontology (SUMO) concepts and presented in a tabular format. Both knowledge databases provide an extensive, supervised taxonomy glossary suitable for describing picture semantics. The annotation glossary consists of 935 WordNet and 513 SUMO entities. A description of the dataset and of the specific processes used to collect, process, review, and publish it as open data is also provided. This dataset is unique in that it captures complex objects, scenes, actions, and the overall context of emotional stimuli with high-quality knowledge taxonomies. It provides a valuable resource for a variety of projects investigating emotion, attention, and related phenomena. In addition, researchers can use this dataset to explore the relationship between emotions and high-level semantics or to develop data-retrieval tools that generate personalized stimulus sequences. The dataset is freely available in common formats (Excel and CSV).


Open Access Article

Enhancing Small Tabular Clinical Trial Dataset through Hybrid Data Augmentation: Combining SMOTE and WCGAN-GP


Data 2023, 8(9), 135; https://doi.org/10.3390/data8090135 - 23 Aug 2023

Abstract

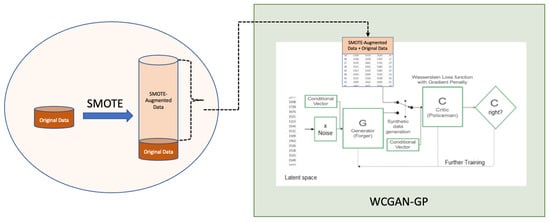

This study addressed the challenge of training generative adversarial networks (GANs) on small tabular clinical trial datasets for data augmentation, which are known to pose difficulties in training due to limited sample sizes. To overcome this obstacle, a hybrid approach is proposed, combining

[...] Read more.

This study addressed the challenge of training generative adversarial networks (GANs) for data augmentation on small tabular clinical trial datasets, which are difficult to train on because of their limited sample sizes. To overcome this obstacle, a hybrid approach is proposed that combines the synthetic minority oversampling technique (SMOTE), used first to augment the original data to a larger size and thereby improve subsequent GAN training, with a Wasserstein conditional generative adversarial network with gradient penalty (WCGAN-GP), known for its state-of-the-art performance and enhanced stability. The ultimate objective of this research was to demonstrate that, with this hybrid approach, the synthetic tabular data generated by the final WCGAN-GP model maintain the structural integrity and statistical representation of the original small dataset. This focus is particularly relevant for clinical trials, where limited data availability due to privacy concerns and restricted access to subject enrollment are common challenges. Despite the limited data, the findings demonstrate that the hybrid approach successfully generates synthetic data that closely preserve the characteristics of the original small dataset. By harnessing this hybrid approach to generate faithful synthetic data, the potential for enhancing data-driven research in drug clinical trials becomes evident. This includes enabling robust analysis of small datasets, supplementing scarce clinical trial data, facilitating use in machine learning tasks, and even using the model for anomaly detection to ensure better quality control during clinical trial data collection, all while prioritizing data privacy and implementing strict data protection measures.
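The first stage of such a hybrid approach relies on SMOTE's interpolation step: each synthetic row lies on the line segment between an existing row and one of its nearest neighbours. A minimal sketch of that step for purely numeric rows follows; it is an illustration under stated assumptions, not the study's pipeline, it omits the WCGAN-GP stage entirely, and the function name and data are hypothetical. Real clinical tables with categorical columns would need a variant such as SMOTE-NC.

```python
import math
import random


def smote_oversample(rows, n_new, k=5, seed=0):
    """Create n_new synthetic rows by SMOTE-style interpolation.

    rows: list of equal-length numeric lists (at least two rows).
    For each new row: pick a random existing row x, pick one of its k
    nearest neighbours nn (Euclidean distance), and emit
    x + gap * (nn - x) with gap drawn uniformly from [0, 1).
    """
    if len(rows) < 2:
        raise ValueError("SMOTE needs at least two rows")
    rng = random.Random(seed)
    k = min(k, len(rows) - 1)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(rows))
        x = rows[i]
        # Candidate neighbours: every other row, sorted by distance to x.
        others = [row for j, row in enumerate(rows) if j != i]
        neighbours = sorted(others, key=lambda row: math.dist(x, row))[:k]
        nn = rng.choice(neighbours)
        gap = rng.random()
        synthetic.append([xi + gap * (ni - xi) for xi, ni in zip(x, nn)])
    return synthetic


# Hypothetical numeric rows (e.g. two lab measurements per subject).
original = [[1.0, 2.0], [1.5, 1.8], [2.0, 2.2], [1.2, 2.5], [1.8, 2.9]]
augmented = original + smote_oversample(original, n_new=20, k=3)
print(len(augmented))  # 25
```

Because every new point is a convex combination of two real rows, the enlarged table stays inside the range of the original data, which is what makes it a plausible warm-up set before GAN training.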


(This article belongs to the Topic Machine Learning Techniques Driven Medicine Analysis)


Open Access Article

Quantifying Webpage Performance: A Comparative Analysis of TCP/IP and QUIC Communication Protocols for Improved Efficiency


Data 2023, 8(8), 134; https://doi.org/10.3390/data8080134 - 19 Aug 2023

Abstract


Browsing is a prevalent activity on the World Wide Web, and users typically expect fast information retrieval and seamless transactions. This article presents a comprehensive performance evaluation of the most frequently accessed webpages in recent years using Data Envelopment Analysis (DEA) adapted to the context (inverse DEA), comparing their performance under two distinct communication protocols: TCP/IP and QUIC. To assess performance disparities, parametric and non-parametric hypothesis tests are employed to investigate the appropriateness of each website's communication protocol. We provide data on the inputs, outputs, and efficiency scores for 82 of the world's top 100 most-accessed websites and describe how the experiments and analyses were conducted. The evaluation yields quantitative metrics of the websites' technical efficiency and efficient benchmarks for best practices. Nine websites are considered efficient under at least one of the communication protocols. Under TCP/IP, about 80.5% of all units (66 webpages) need to reduce their page load time by more than 50% to be competitive, whereas under QUIC this figure is 28.05% (23 webpages). In addition, the results suggest that the TCP/IP protocol has an unfavorable effect on the overall distribution of inefficiencies.


Open Access Article

Leveraging Return Prediction Approaches for Improved Value-at-Risk Estimation

Data 2023, 8(8), 133; https://doi.org/10.3390/data8080133 - 17 Aug 2023

Abstract

Value at risk is a statistic used to anticipate the largest possible losses over a specific time frame and within some level of confidence, usually 95% or 99%. For risk management and regulators, it offers a solution for trustworthy quantitative risk management tools.

[...] Read more.

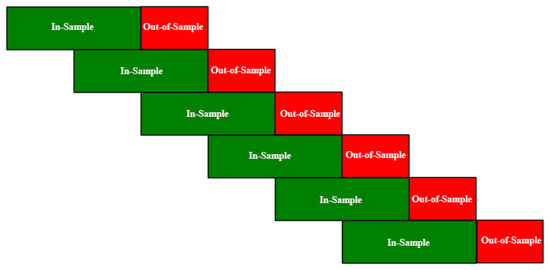

Value at risk (VaR) is a statistic that estimates the maximum loss expected over a specific time frame at a given confidence level, usually 95% or 99%. For risk managers and regulators, it provides a trustworthy quantitative risk management tool. VaR has become the most widely used and accepted indicator of downside risk. Today, commercial banks and financial institutions use it to estimate the size and probability of upcoming losses in portfolios and, as a result, to estimate and manage their degree of risk exposure. The goal is to bring the rate of VaR “failures” or “breaches” (losses larger than the VaR) as close to the target rate as possible. It is also desirable for the breaches to be distributed as evenly as possible. VaR can be modeled in a variety of ways. The simplest method is to estimate volatility from prior returns under the assumption that volatility is constant. Alternatively, the volatility process can be modeled using the GARCH model. In recent years, machine learning techniques have been used to forecast stock markets from historical time series. A machine learning system is often trained on an in-sample dataset, where it can adjust and improve specific hyperparameters according to the underlying metric; the trained model is then tested on an out-of-sample dataset. We compared the baselines for the VaR estimation of a day (d) according to different metrics (i) with their respective variants that included stock return forecast information for d and stock return data from the days before d and (ii) with a GARCH model that included return prediction information for d and stock return data from the days before d. Various strategies, such as ARIMA and a proposed ensemble of regressors, were employed to predict stock returns. We observed that the versions of the univariate techniques and GARCH integrated with return predictions outperformed the baselines in four different marketplaces.
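The constant-volatility baseline and the breach rate described above can be sketched with the Python standard library alone. This minimal version assumes normally distributed daily returns, a hypothetical 250-day estimation window, and a 99% confidence level; it omits the GARCH and return-prediction variants the study compares, and the returns are simulated, not market data.

```python
import random
from statistics import NormalDist, mean, stdev


def rolling_normal_var(returns, window=250, confidence=0.99):
    """One-day VaR for each day d >= window, estimated from the `window` returns before d.

    Assumes returns are normal with mean and volatility constant over the window.
    VaR is reported as a positive loss threshold.
    """
    var = []
    for d in range(window, len(returns)):
        past = returns[d - window:d]
        dist = NormalDist(mean(past), stdev(past))
        # The (1 - confidence) quantile of returns; its negative is the loss threshold.
        var.append(-dist.inv_cdf(1 - confidence))
    return var


def breach_rate(returns, var, window=250):
    """Fraction of out-of-sample days whose loss exceeded that day's VaR."""
    realized = returns[window:]
    breaches = sum(1 for r, v in zip(realized, var) if -r > v)
    return breaches / len(var)


# Simulated daily returns (mean 0, volatility 1%), for illustration only.
rng = random.Random(42)
returns = [rng.gauss(0.0, 0.01) for _ in range(750)]
var = rolling_normal_var(returns)
print(f"breach rate: {breach_rate(returns, var):.3%} (target: 1%)")
```

A well-calibrated model keeps the breach rate close to 1 - confidence; a GARCH baseline would replace the flat `stdev(past)` estimate with a time-varying volatility forecast.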


(This article belongs to the Special Issue Advances in Text Mining Techniques and Applications for Knowledge Discovery)


Open Access Data Descriptor

VR Traffic Dataset on Broad Range of End-User Activities

Data 2023, 8(8), 132; https://doi.org/10.3390/data8080132 - 17 Aug 2023

Abstract

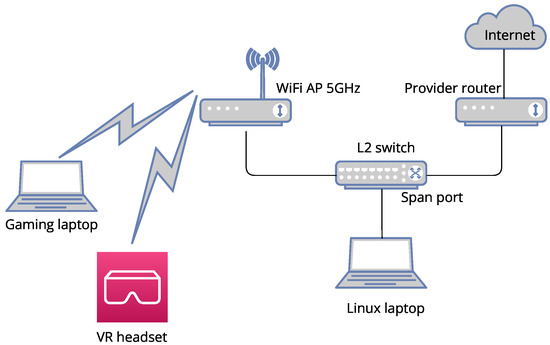

With the emergence of new internet traffic types in modern transport networks, it has become critical for service providers to understand the structure of that traffic and predict peaks of that load for planning infrastructure expansion. Several studies have investigated traffic parameters for

[...] Read more.

With the emergence of new internet traffic types in modern transport networks, it has become critical for service providers to understand the structure of that traffic and predict its load peaks in order to plan infrastructure expansion. Several studies have investigated traffic parameters for Virtual Reality (VR) applications, but most of them test only a partial range of user activities over a limited time interval. This work creates a dataset of captures from a broader spectrum of VR activities performed with a Meta Quest 2 headset, with each real residential user session recorded for at least half an hour. The newly collected data showed that some gaming VR activities have a high share of uplink traffic and require symmetric user links. We also found that the gaming phase of the overall gameplay is more sensitive to reductions in channel resources than the higher-bitrate game launch phase. Hence, we recommend the gaming phase as the source of traffic distributions for building channel sizing models. Within the gaming phase, capture intervals longer than 100 s contain the most representative information for modeling an activity.


(This article belongs to the Section Information Systems and Data Management)


Open Access Data Descriptor

Draft Genome Sequence Data of Streptomyces anulatus, Strain K-31


Data 2023, 8(8), 131; https://doi.org/10.3390/data8080131 - 10 Aug 2023

Abstract


Streptomyces anulatus is a typical representative of the Streptomyces genus that synthesizes a large number of biologically active compounds. In this study, the draft genome of Streptomyces anulatus strain K-31 is presented, assembled from Illumina reads with the SPAdes software. The assembled genome is 8.548838 Mb in size. Annotation of the S. anulatus genome assembly identified 7749 genes, including 7149 protein-coding genes and 92 RNA genes. This genome will help to further the understanding of Streptomyces genetics and evolution and can be useful for obtaining biologically active compounds.


(This article belongs to the Section Computational Biology, Bioinformatics, and Biomedical Data Science)


Open Access Article

Towards Action-State Process Model Discovery


Data 2023, 8(8), 130; https://doi.org/10.3390/data8080130 - 09 Aug 2023

Abstract

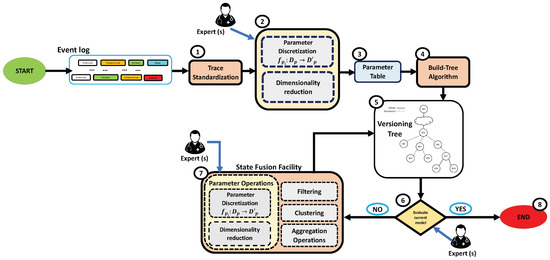

Process model discovery covers the different methodologies used to mine a process model from traces of process executions, and it has an important role in artificial intelligence research. Current approaches in this area, with a few exceptions, focus on determining a model of

[...] Read more.

Process model discovery covers the different methodologies used to mine a process model from traces of process executions, and it plays an important role in artificial intelligence research. With a few exceptions, current approaches in this area focus on modeling the flow of actions only. However, in several contexts, (i) restricting attention to actions is quite limiting, since the effects of those actions also have to be analyzed, and (ii) traces provide additional information in the form of states (i.e., values of parameters possibly affected by the actions); for instance, in several medical domains, traces include both actions and measurements of patient parameters. In this paper, we propose AS-SIM (Action-State SIM), the first approach able to mine a process model comprising two distinct classes of nodes that capture both actions and states.


(This article belongs to the Special Issue Advances in Text Mining Techniques and Applications for Knowledge Discovery)


Open Access Data Descriptor

Anomaly Detection in Student Activity in Solving Unique Programming Exercises: Motivated Students against Suspicious Ones

Data 2023, 8(8), 129; https://doi.org/10.3390/data8080129 - 08 Aug 2023

Abstract


This article presents a dataset containing messages from the Digital Teaching Assistant (DTA) system, which records the results of automatically verifying students' solutions to unique programming exercises of 11 different types. These results are generated automatically by the system, which automates a massive Python programming course at MIREA—Russian Technological University (RTU MIREA). The DTA system is trained to distinguish between approaches to solving programming exercises and to identify correct and incorrect solutions. Its intelligent source code analysis algorithms use vector representations of programs based on Markov chains, calculate pairwise Jensen–Shannon distances between programs, and apply a hierarchical clustering algorithm to detect the high-level approaches students use to solve unique programming exercises. During the course, each student must correctly solve 11 unique exercises to be admitted to the intermediate certification, which takes the form of a test. In addition, a motivated student may try to find additional approaches to exercises they have already solved. At the same time, not all students are able or willing to solve the 11 unique exercises assigned to them; some resort to outside help for all or part of the exercises. Since all information about students' interactions with the DTA system is recorded, it is possible to identify different types of students. First, students can be divided into two classes: those who failed to solve the 11 exercises and those who solved all 11 correctly and were admitted to the intermediate certification. Within the latter group, the proposed dataset makes it possible to further distinguish typical, motivated, and suspicious students.
The proposed dataset can be used to develop regression models that predict outbursts of student activity when interacting with the DTA system; to solve clustering problems and identify groups of students with similar behavior models in the learning process; to develop intelligent data classifiers that predict students' behavior models and draw appropriate conclusions not only at the end of the learning process but also during it, in order to motivate all students, even those classified as suspicious; and to visualize the results of the learning process using various tools.
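The source code analysis described above compares programs through pairwise Jensen–Shannon distances between vector representations. A minimal sketch follows. The token-transition profile used here is a hypothetical stand-in for the system's Markov-chain representation, whose exact construction the abstract does not specify, and the token lists are invented. Logarithms are base 2, so distances lie in [0, 1].

```python
import math
from collections import Counter


def transition_profile(tokens):
    """Relative frequencies of adjacent token pairs (first-order transitions).

    Hypothetical program representation used for illustration only.
    """
    pairs = Counter(zip(tokens, tokens[1:]))
    total = sum(pairs.values())
    return {pair: count / total for pair, count in pairs.items()}


def js_distance(p, q):
    """Jensen-Shannon distance between two discrete distributions given as dicts."""
    keys = set(p) | set(q)
    m = {key: (p.get(key, 0.0) + q.get(key, 0.0)) / 2 for key in keys}

    def kl(a):
        # Kullback-Leibler divergence KL(a || m); zero-probability terms contribute 0.
        return sum(v * math.log2(v / m[key]) for key, v in a.items() if v > 0)

    # max() guards against a tiny negative value from floating-point rounding.
    return math.sqrt(max(0.0, (kl(p) + kl(q)) / 2))


solution_a = ["for", "NAME", "in", "NAME", ":", "NAME", "+=", "NAME"]
solution_b = ["NAME", "=", "sum", "(", "NAME", ")"]
solution_c = ["for", "NAME", "in", "NAME", ":", "NAME", "+=", "NAME", "*", "NAME"]

pa, pb, pc = (transition_profile(s) for s in (solution_a, solution_b, solution_c))
print(round(js_distance(pa, pc), 3), round(js_distance(pa, pb), 3))
```

The two loop-based solutions share most transitions and end up close, while the `sum(...)` solution shares none with them and sits at the maximum distance; a matrix of such distances is the kind of input a hierarchical clustering step would consume.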


Open Access Data Descriptor

VEPL Dataset: A Vegetation Encroachment in Power Line Corridors Dataset for Semantic Segmentation of Drone Aerial Orthomosaics

Data 2023, 8(8), 128; https://doi.org/10.3390/data8080128 - 04 Aug 2023

Abstract

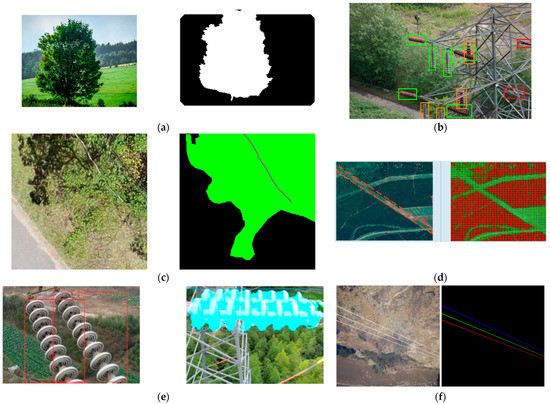

Vegetation encroachment in power line corridors has multiple problems for modern energy-dependent societies. Failures due to the contact between power lines and vegetation can result in power outages and millions of dollars in losses. To address this problem, UAVs have emerged as a

[...] Read more.

Vegetation encroachment in power line corridors causes multiple problems for modern energy-dependent societies. Failures due to contact between power lines and vegetation can result in power outages and millions of dollars in losses. To address this problem, UAVs have emerged as a promising solution due to their ability to monitor long corridors quickly and affordably, whether flying autonomously or remotely piloted. However, the extensive manual task of analyzing every image acquired by the UAVs for signs of vegetation encroachment has led many authors to propose using Deep Learning to automate the detection process. Despite the advantages of combining UAV imagery and Deep Learning, there is currently a lack of datasets for training Deep Learning models on this specific problem. This paper presents a dataset for the semantic segmentation of vegetation encroachment in power line corridors. RGB orthomosaics of a rural road area were obtained using a commercial UAV. The dataset is composed of pairs of tessellated RGB images from the orthomosaic and corresponding multi-color masks representing three classes: vegetation, power lines, and background. A detailed description of the image acquisition process is provided, as well as of the labeling task, the data augmentation techniques, and other relevant details of the dataset's production. Researchers can use the proposed dataset to develop and improve strategies for monitoring vegetation encroachment with UAVs and Deep Learning.


(This article belongs to the Section Spatial Data Science and Digital Earth)


Open Access Data Descriptor

eMailMe: A Method to Build Datasets of Corporate Emails in Portuguese

Data 2023, 8(8), 127; https://doi.org/10.3390/data8080127 - 31 Jul 2023

Abstract

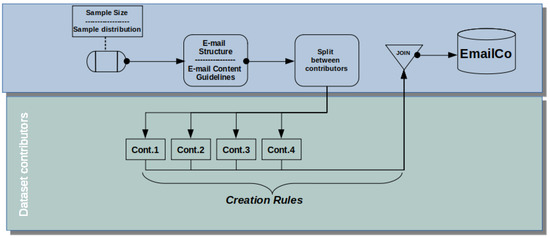

One of the areas in which knowledge management has application is in companies that are concerned with maintaining and disseminating their practices among their members. However, studies involving these two domains may end up suffering from the issue of data confidentiality. Furthermore, it

[...] Read more.

One area where knowledge management is applied is in companies concerned with maintaining and disseminating their practices among their members. However, studies involving these two domains may suffer from data confidentiality issues. Furthermore, it is difficult to find data on organizations' processes and associated knowledge. Therefore, this paper presents a method to support the generation of a labeled dataset, written in Portuguese, composed of texts that simulate corporate emails containing information that is sensitive with respect to disclosure. The method begins with defining the dataset's size and content distribution, the structure of its email texts, and the guidelines for the specialists who write the email texts. It aims to create datasets that can be used to validate a tacit knowledge extraction process that applies the 5W1H approach to the resulting base. The method was applied to create a dataset with content related to several domains, such as Federal Court and Registry Office, and Marketing, giving it diversity and realism while simulating real-world situations from the specialists' professional lives. The generated dataset is available in an open-access repository so that it can be downloaded and, eventually, expanded.


(This article belongs to the Topic Methods for Data Labelling for Intelligent Systems)


Open Access Data Descriptor

Datasets of Simulated Exhaled Aerosol Images from Normal and Diseased Lungs with Multi-Level Similarities for Neural Network Training/Testing and Continuous Learning

Data 2023, 8(8), 126; https://doi.org/10.3390/data8080126 - 31 Jul 2023

Abstract

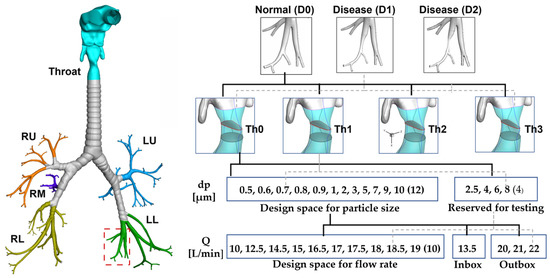

Although exhaled aerosols and their patterns may seem chaotic in appearance, they inherently contain information related to the underlying respiratory physiology and anatomy. This study presented a multi-level database of simulated exhaled aerosol images from both normal and diseased lungs. An anatomically accurate

[...] Read more.

Although exhaled aerosols and their patterns may seem chaotic in appearance, they inherently contain information related to the underlying respiratory physiology and anatomy. This study presents a multi-level database of simulated exhaled aerosol images from both normal and diseased lungs. An anatomically accurate mouth-lung geometry extending to G9 was modified to model two stages of obstruction in the small airways, and physiology-based simulations were used to capture the fluid-particle dynamics and exhaled aerosol images from varying breath tests. The dataset was designed to test two performance metrics of convolutional neural network (CNN) models used for transfer learning: interpolation and extrapolation. To this end, three testing datasets with decreasing image similarity were developed (i.e., level 1, inbox, and outbox). Four network models (AlexNet, ResNet-50, MobileNet, and EfficientNet) were tested, and the performance of all models decreased for the outbox test images, which lay outside the design space. The effect of continuous learning was also assessed for each model by adding new images to the training dataset and testing the retrained network at multiple levels. Among the four network models, ResNet-50 performed best in both multi-level testing and continuous learning, the latter of which raised the accuracy of the most challenging classification task (i.e., 3-class with outbox test images) from 60.65% to 98.92%. The datasets can serve as a benchmark training/testing database for validating existing CNN models or quantifying the performance metrics of new CNN models.

(This article belongs to the Special Issue Artificial Intelligence and Big Data Applications in Diagnostics)

Open Access Data Descriptor

Quantitative Metabolomic Dataset of Avian Eye Lenses

Data 2023, 8(8), 125; https://doi.org/10.3390/data8080125 - 31 Jul 2023

Abstract

Metabolomics is a powerful set of methods that use analytical techniques to identify and quantify metabolites in biological samples, providing a snapshot of the metabolic state of a biological system. In medicine, metabolomics may help to reveal the molecular basis of a disease, make a diagnosis, and monitor treatment responses, while in agriculture, it can improve crop yields and plant breeding. However, animal metabolomics faces several challenges owing to the complexity and diversity of animal metabolomes, the lack of standardized protocols, and the difficulty of interpreting metabolomic data. The current dataset includes quantitative metabolomic profiles of eye lenses from 26 bird species (111 specimens) that can aid researchers in designing new experiments, developing mathematical models, and integrating the profiles with other “-omics” data. The dataset includes raw ¹H NMR spectra, protocols for sample preparation and data preprocessing, and a final table of the abundances of 89 reliably identified and quantified metabolites. Because the dataset is quantitative, it can be supplemented with new specimens or comparison groups, followed by data mining, with new interpretations expected. The data were obtained from bird specimens collected in compliance with ethical standards and revealed potential differences in metabolic pathways due to phylogenetic differences or environmental exposure.

(This article belongs to the Section Computational Biology, Bioinformatics, and Biomedical Data Science)

Open Access Article

Measuring the Effect of Fraud on Data-Quality Dimensions

Data 2023, 8(8), 124; https://doi.org/10.3390/data8080124 - 30 Jul 2023

Abstract

►▼

Show Figures

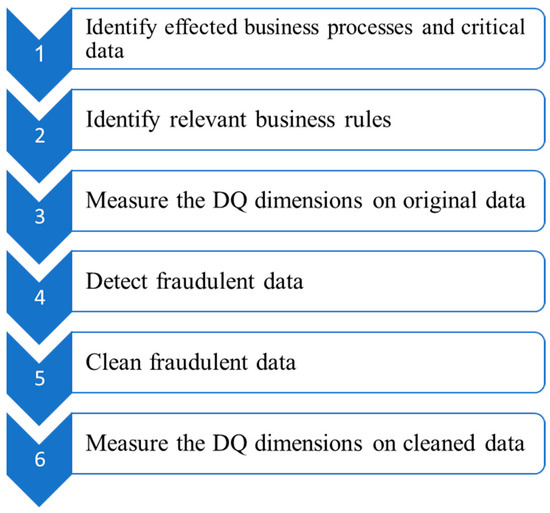

Data preprocessing moves the data from raw to ready for analysis. Data resulting from fraud compromises the quality of the data and the resulting analysis. It can exist in datasets such that it goes undetected since it is included in the analysis. This

[...] Read more.

Data preprocessing moves data from a raw state to one ready for analysis. Fraudulent data compromise the quality of the data and of the resulting analysis, and they can remain undetected in a dataset and thus be included in the analysis. This study proposed a process for measuring the effect of fraudulent data during data preparation and its possible influence on quality. The five-step process begins with identifying the business rules related to the business process(es) affected by fraud and their associated quality dimensions. It then measures the business rules in the specified timeframe, detects the fraudulent data, cleans them, and measures quality after cleaning. The process was applied to a case of occupational fraud in a hospital: the illegal issuance of undeserved sick leave. The aim of the application was to identify which quality dimensions are influenced by the injected fraudulent data and how they are affected. The findings agree with the existing literature and confirm the effects of fraud on timeliness, coherence, believability, and interpretability. However, no effect on consistency was observed. Further studies are needed to arrive at a generalizable list of the quality dimensions that fraud can affect.

Open Access Article

Blockchain Payment Services in the Hospitality Sector: The Mediating Role of Data Security on Utilisation Efficiency of the Customer

Data 2023, 8(8), 123; https://doi.org/10.3390/data8080123 - 30 Jul 2023

Abstract

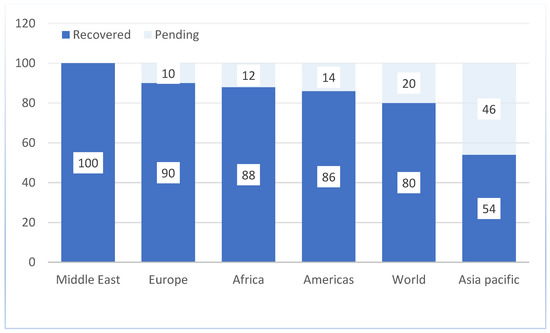

Blockchain technology has the potential to completely transform the hospitality sector by offering a safe, open, and effective method of payment. Increased customer utilisation efficiency may result from this. This study looks into how blockchain payment methods affect hotel customers’ intentions to stay

[...] Read more.

Blockchain technology has the potential to transform the hospitality sector completely by offering a safe, open, and effective method of payment, which may in turn increase customer utilisation efficiency. This study examines how blockchain payment methods affect hotel customers’ intentions to stay loyal by testing four hypotheses. A questionnaire was created specifically for this study as a self-administered data-gathering tool and distributed to hotel customers. The IBM SPSS and Amos software packages were used to analyse the 301 valid responses. The findings show that hospitality customers may use blockchain payment services if they are satisfied with the data security of this payment system. The study also highlighted that customer data security mediated the association between utilisation efficiency and blockchain payment systems. Blockchain payment services can affect visitors’ intentions to stay loyal by impacting data security and customer satisfaction. The results suggest that blockchain payment systems can be useful for hospitality firms seeking to increase client utilisation efficiency. Blockchain can simplify visitor booking and payment processes by providing a safe, open, and effective method of transacting, which may result in a satisfying experience that visitors are more inclined to remember and repeat.

(This article belongs to the Special Issue Blockchain Applications in Data Management and Governance)

Open Access Article

A Wavelet-Decomposed WD-ARMA-GARCH-EVT Model Approach to Comparing the Riskiness of the BitCoin and South African Rand Exchange Rates

Data 2023, 8(7), 122; https://doi.org/10.3390/data8070122 - 24 Jul 2023

Abstract

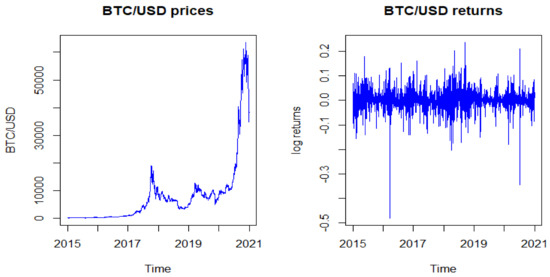

In this paper, a hybrid of a Wavelet Decomposition–Generalised Auto-Regressive Conditional Heteroscedasticity–Extreme Value Theory (WD-ARMA-GARCH-EVT) model is applied to estimate the Value at Risk (VaR) of BitCoin (BTC/USD) and the South African Rand (ZAR/USD). The aim is to measure and compare the riskiness

[...] Read more.

In this paper, a hybrid Wavelet Decomposition–Auto-Regressive Moving Average–Generalised Auto-Regressive Conditional Heteroscedasticity–Extreme Value Theory (WD-ARMA-GARCH-EVT) model is applied to estimate the Value at Risk (VaR) of BitCoin (BTC/USD) and the South African Rand (ZAR/USD). The aim is to measure and compare the riskiness of the two currencies. New and improved VaR estimation techniques have been proposed over the last decade in the aftermath of the 2008 global financial crisis. This paper aims to provide an improved alternative to existing statistical tools for estimating currency VaR empirically. The Maximal Overlap Discrete Wavelet Transform (MODWT) is applied to the returns series with two mother wavelet filters, viz., the Haar and the Daubechies (d4). The findings show that BitCoin/USD is riskier than ZAR/USD, since it has a higher VaR per unit invested. At the 99% confidence level, BitCoin/USD has average VaR values of 2.71% and 4.98% for the WD-ARMA-GARCH-GPD and WD-ARMA-GARCH-GEVD models, respectively, which are higher than the respective values of 2.69% and 3.59% for ZAR/USD. The average BitCoin/USD return of 0.001990 is higher than the average ZAR/USD return of −0.000125. These findings are consistent with mean-variance portfolio theory, which suggests a higher yield for riskier assets. Based on the p-values of the Kupiec likelihood ratio test, the adequacy of the hybrid model is largely accepted, as the p-values exceed 0.05, except for the WD-ARMA-GARCH-GEVD models at the 99% confidence level for both currencies. The findings can help financial risk practitioners and forex traders formulate diversification and hedging strategies and determine the risk-adjusted capital to be set aside as a cushion against actual losses.

(This article belongs to the Special Issue Information Systems Innovation for Business: Change, Growth and Future Impact)

Open Access Data Descriptor

Knowledge Discovery and Dataset for the Improvement of Digital Literacy Skills in Undergraduate Students

Data 2023, 8(7), 121; https://doi.org/10.3390/data8070121 - 20 Jul 2023

Abstract

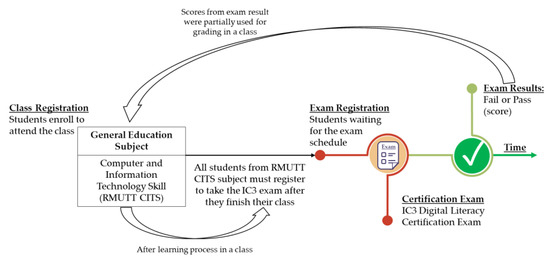

For over two decades, scholars and practitioners have emphasized the importance of digital literacy, yet the existing datasets are insufficient for establishing learning analytics in Thailand. Learning analytics focuses on gathering and analyzing student data to optimize learning tools and activities to improve

[...] Read more.

For over two decades, scholars and practitioners have emphasized the importance of digital literacy, yet existing datasets are insufficient for establishing learning analytics in Thailand. Learning analytics focuses on gathering and analyzing student data to optimize learning tools and activities and thereby improve students’ learning experiences. The main problem is that ICT skill levels among young people in Thailand are rather low. To facilitate research in this field, this study compiled a dataset containing information from the IC3 digital literacy certification delivered at the Rajamangala University of Technology Thanyaburi (RMUTT) in Thailand between 2016 and 2023. The dataset is unique in that it includes demographic and academic records of undergraduate students. After collection, the data underwent a preparation process comprising data cleansing, anonymization, and release. The data enable examination of student learning outcomes: the dataset contains 45,603 records of students’ certification assessment scores. This compiled dataset provides a rich resource for researchers studying digital literacy and learning analytics, offering the opportunity to gain valuable insights, inform evidence-based educational practices, and contribute to ongoing efforts to improve digital literacy education in Thailand and beyond.

(This article belongs to the Special Issue Data Mining and Computational Intelligence for E-learning and Education)

Topics

Topic in Data, Future Internet, Information, Mathematics, Symmetry

Application of Deep Learning Method in 6G Communication Technology

Topic Editors: Mohamed Abouhawwash, K. Venkatachalam

Deadline: 31 March 2024

Topic in Applied Sciences, Batteries, Buildings, Data, Electricity, Electronics, Energies, Smart Cities

Smart Energy Systems, 2nd Edition

Topic Editors: Hugo Morais, Rui Castro, Cindy Guzman

Deadline: 31 May 2024

Topic in Algorithms, Data, Information, Mathematics, Symmetry

Decision-Making and Data Mining for Sustainable Computing

Topic Editors: Sunil Jha, Malgorzata Rataj, Xiaorui Zhang

Deadline: 30 November 2024

Topic in BDCC, Data, Environments, Geosciences, Remote Sensing

Database, Mechanism and Risk Assessment of Slope Geologic Hazards

Topic Editors: Chong Xu, Yingying Tian, Xiaoyi Shao, Zikang Xiao, Yulong Cui

Deadline: 28 February 2025

Special Issues

Special Issue in Data

Data Science in Fintech

Guest Editors: Qiannong (Chan) Gu, Diane Li, Tie Wei, Jeffrey Yi-Lin Forrest, Henry Han

Deadline: 10 October 2023

Special Issue in Data

Privacy and Trust in Smart Cities

Guest Editors: Anirban Basu, M Shahriar Rahman

Deadline: 16 November 2023

Special Issue in Data

Information Systems Innovation for Business: Change, Growth and Future Impact

Guest Editors: Varun Gupta, Chetna Gupta, Leandro Ferreira Pereira, Lawrence Peters, Antonio Ferreras

Deadline: 30 November 2023

Special Issue in Data

Sentiment Analysis in Social Media Data

Guest Editor: Hiram Calvo

Deadline: 18 December 2023

Topical Collections

Topical Collection in Data

Modern Geophysical and Climate Data Analysis: Tools and Methods

Collection Editors: Zoran Mijic, Vladimir Sreckovic