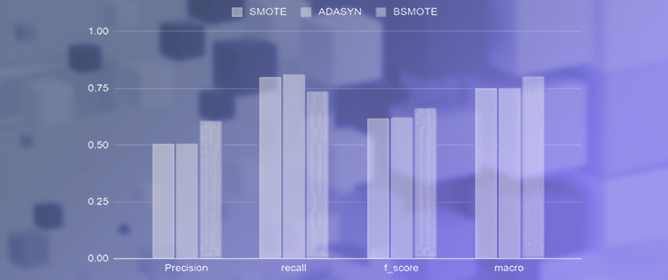

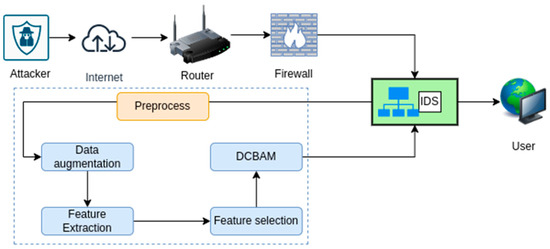

Resampling Imbalanced Network Intrusion Datasets to Identify Rare Attacks

A Multiverse Graph to Help Scientific Reasoning from Web Usage: Interpretable Patterns of Assessor Shifts in GRAPHYP

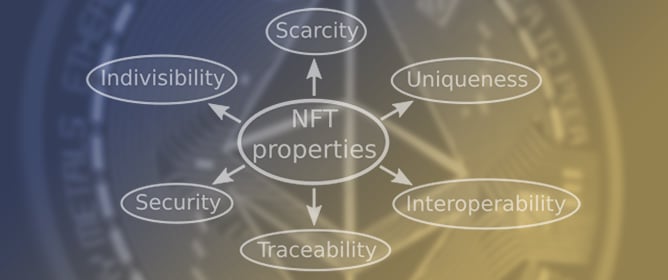

From NFT 1.0 to NFT 2.0: A Review of the Evolution of Non-Fungible Tokens

Performance Evaluation of a Lane Correction Module Stress Test: A Field Test of Tesla Model 3

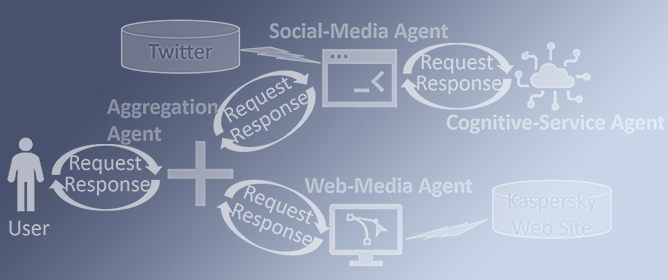

A New AI-Based Semantic Cyber Intelligence Agent

Journal Description

Future Internet is a scholarly, peer-reviewed, open access journal on Internet technologies and the information society, published monthly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), Ei Compendex, dblp, Inspec, and other databases.

- Journal Rank: CiteScore - Q1 (Computer Networks and Communications)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 13.6 days after submission; acceptance to publication is undertaken in 2.7 days (median values for papers published in this journal in the first half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 3.4 (2022); 5-Year Impact Factor: 3.4 (2022)

Latest Articles

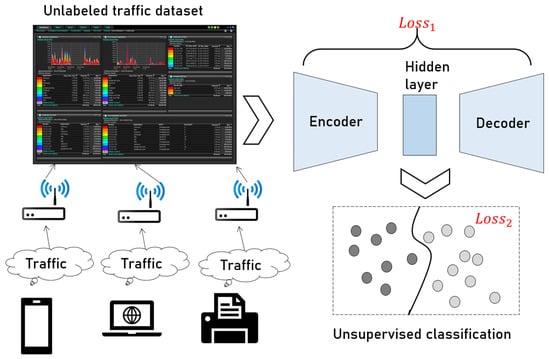

Intelligent Unsupervised Network Traffic Classification Method Using Adversarial Training and Deep Clustering for Secure Internet of Things

Future Internet 2023, 15(9), 298; https://doi.org/10.3390/fi15090298 (registering DOI) - 01 Sep 2023

Abstract

Network traffic classification (NTC) has attracted great attention in many applications, such as secure communications and intrusion detection systems. Existing NTC methods based on supervised learning rely on sufficient labeled datasets in the training phase, but for most traffic datasets, it is difficult to obtain label information in practical applications. Although unsupervised learning does not rely on labels, its classification accuracy is not high, and the number of data classes is difficult to determine. This paper proposes an unsupervised NTC method based on adversarial training and deep clustering, with improved classification performance and lower computational complexity than traditional clustering algorithms. Here, the training process does not require data labels, and pretraining greatly reduces the computational complexity of network traffic classification. In the pretraining stage, an autoencoder (AE) is used to reduce the dimension and complexity of the initial high-dimensional network traffic features. Moreover, we employ the adversarial training model and a deep clustering structure to further optimize the extracted features. The experimental results show that our proposed method has robust performance, with a multiclassification accuracy of 92.2%, which makes it suitable for classifying large amounts of unlabeled data in actual application scenarios. This paper focuses only on breakthroughs in the algorithm stage; future work can focus on deployment and adaptation in practical environments.

Full article

(This article belongs to the Special Issue Information and Future Internet Security, Trust and Privacy II)

Open Access Article

Explainable Lightweight Block Attention Module Framework for Network-Based IoT Attack Detection

Future Internet 2023, 15(9), 297; https://doi.org/10.3390/fi15090297 (registering DOI) - 01 Sep 2023

Abstract

In the rapidly evolving landscape of internet usage, ensuring robust cybersecurity measures has become a paramount concern across diverse fields. Among the numerous cyber threats, denial of service (DoS) and distributed denial of service (DDoS) attacks pose significant risks, as they can render websites and servers inaccessible to their intended users. Conventional intrusion detection methods encounter substantial challenges in effectively identifying and mitigating these attacks due to their widespread nature, intricate patterns, and computational complexities. However, by harnessing the power of deep learning-based techniques, our proposed dense channel-spatial attention model exhibits exceptional accuracy in detecting and classifying DoS and DDoS attacks. The successful implementation of our proposed framework addresses the challenges posed by imbalanced data and exhibits its potential for real-world applications. By leveraging the dense channel-spatial attention mechanism, our model can precisely identify and classify DoS and DDoS attacks, bolstering the cybersecurity defenses of websites and servers. The high accuracy rates achieved across different datasets reinforce the robustness of our approach, underscoring its efficacy in enhancing intrusion detection capabilities. As a result, our framework holds promise in bolstering cybersecurity measures in real-world scenarios, contributing to the ongoing efforts to safeguard against cyber threats in an increasingly interconnected digital landscape. Comparative analysis with current intrusion detection methods reveals the superior performance of our model. We achieved accuracy rates of 99.38%, 99.26%, and 99.43% for Bot-IoT, CICIDS2017, and UNSW_NB15 datasets, respectively. These remarkable results demonstrate the capability of our approach to accurately detect and classify various types of DoS and DDoS assaults. 
By leveraging the inherent strengths of deep learning, such as pattern recognition and feature extraction, our model effectively overcomes the limitations of traditional methods, enhancing the accuracy and efficiency of intrusion detection systems.

Full article

(This article belongs to the Special Issue Security Mechanisms for Wireless Sensor Networks in Cyber-Physical Systems)

Open Access Article

FREDY: Federated Resilience Enhanced with Differential Privacy

Future Internet 2023, 15(9), 296; https://doi.org/10.3390/fi15090296 (registering DOI) - 01 Sep 2023

Abstract

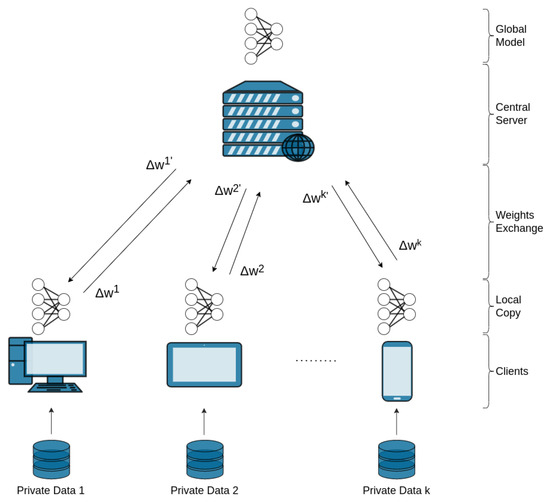

Federated Learning (FL) is identified as a reliable technique for distributed training of ML models. Specifically, a set of dispersed nodes may collaborate through a federation in producing a jointly trained ML model without disclosing their data to each other. Each node performs local model training and then shares its trained model weights with a server node, usually called the Aggregator in federated learning, which aggregates the trained weights and then sends them back to its clients for another round of local training. Despite the data protection and security that FL provides to each client, there are still well-studied attacks, such as membership inference attacks, that can detect potential vulnerabilities of the FL system and thus expose sensitive data. In this paper, in order to prevent this kind of attack and address private data leakage, we introduce FREDY, a differentially private federated learning framework that enables knowledge transfer from private data. In particular, our approach has a teachers–student scheme. Each teacher model is trained on sensitive, disjoint data in a federated manner, and the student model is trained on the most voted predictions of the teachers on public unlabeled data, which are noisily aggregated in order to guarantee the privacy of each teacher’s sensitive data. Only the student model is publicly accessible, as the teacher models contain sensitive information. We show that our proposed approach guarantees the privacy of sensitive data against model inference attacks while it combines the federated learning settings for the model training procedures.
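The noisy aggregation of teacher votes mentioned in the abstract can be illustrated with a minimal sketch. It assumes Laplace noise added to per-class vote counts, which is one common choice for this kind of teacher-vote aggregation; the abstract does not state which noise mechanism FREDY uses, and the function name `noisy_majority_label` and its parameters are hypothetical.

```python
import random
from collections import Counter


def noisy_majority_label(teacher_votes, num_classes, noise_scale, rng):
    """Return the class with the highest noisy vote count.

    teacher_votes: list of class indices, one prediction per teacher.
    noise_scale:   scale b of the Laplace(0, b) noise (an assumed mechanism).
    rng:           a random.Random instance, passed in for reproducibility.
    """
    counts = Counter(teacher_votes)
    noisy_counts = []
    for label in range(num_classes):
        # The difference of two i.i.d. exponentials with mean b
        # is distributed as Laplace(0, b).
        noise = rng.expovariate(1.0 / noise_scale) - rng.expovariate(1.0 / noise_scale)
        noisy_counts.append(counts.get(label, 0) + noise)
    # The student is trained on this label instead of any single teacher's output.
    return max(range(num_classes), key=lambda label: noisy_counts[label])
```

A larger `noise_scale` hides each teacher's individual vote more strongly, at the cost of occasionally returning a label that is not the true plurality.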

Full article

(This article belongs to the Special Issue Privacy and Security in Computing Continuum and Data-Driven Workflows)

Open Access Article

FLAME-VQA: A Fuzzy Logic-Based Model for High Frame Rate Video Quality Assessment

Future Internet 2023, 15(9), 295; https://doi.org/10.3390/fi15090295 (registering DOI) - 01 Sep 2023

Abstract

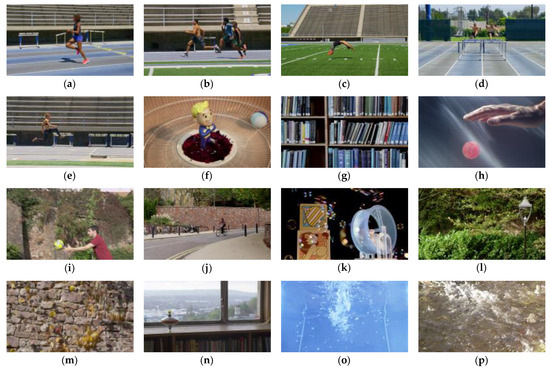

In the quest to optimize user experience, network and service providers continually seek to deliver high-quality content tailored to individual preferences. However, predicting user perception of quality remains a challenging task, given the subjective nature of human perception and the plethora of technical attributes that contribute to the overall viewing experience. Thus, we introduce a Fuzzy Logic-bAsed ModEl for Video Quality Assessment (FLAME-VQA), leveraging the LIVE-YT-HFR database containing 480 video sequences and subjective ratings of their quality from 85 test subjects. The proposed model addresses the challenges of assessing user perception by capturing the intricacies of individual preferences and video attributes using fuzzy logic. It operates with four input parameters: video frame rate, compression rate, and spatio-temporal information. The Spearman Rank–Order Correlation Coefficient (SROCC) and Pearson Correlation Coefficient (PCC) show a high correlation between the output and the ground truth. For the training, test, and complete dataset, SROCC equals 0.8977, 0.8455, and 0.8961, respectively, while PCC equals 0.9096, 0.8632, and 0.9086, respectively. The model outperforms comparative models tested on the same dataset.
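For readers unfamiliar with the two metrics reported above, the following is a minimal sketch of how PCC and SROCC are typically computed between predicted and subjective quality scores. The function names are illustrative and are not taken from the paper; SROCC is computed here as the Pearson correlation of average ranks, which handles ties.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient (PCC) between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    std_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    std_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (std_x * std_y)


def average_ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman rank-order correlation (SROCC): Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))
```

PCC measures how linearly the model output tracks the subjective scores, while SROCC only measures whether the ordering agrees, so a model can score high on SROCC and lower on PCC if its output is monotonic but nonlinear in the ground truth.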

Full article

(This article belongs to the Special Issue QoS in Wireless Sensor Network for IoT Applications)

Open Access Editorial

Advances Techniques in Computer Vision and Multimedia

Future Internet 2023, 15(9), 294; https://doi.org/10.3390/fi15090294 (registering DOI) - 01 Sep 2023

Abstract

Computer vision, which aims to enable computer systems to automatically see, recognize, and understand the visual world by simulating the mechanism of human vision, has experienced significant advancements and great success in areas closely related to human society [...]

Full article

(This article belongs to the Special Issue Advances Techniques in Computer Vision and Multimedia)

Open Access Review

Enhancing E-Learning with Blockchain: Characteristics, Projects, and Emerging Trends

Future Internet 2023, 15(9), 293; https://doi.org/10.3390/fi15090293 - 28 Aug 2023

Abstract

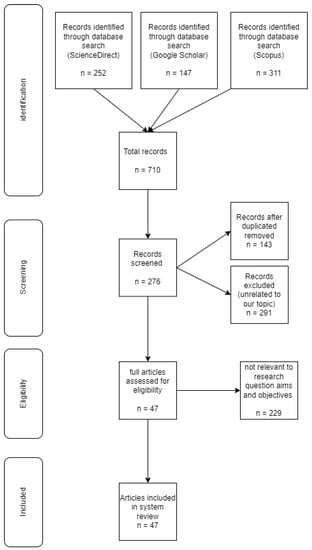

Blockchain represents a decentralized and distributed ledger technology, ensuring transparent and secure transaction recording across networks. This innovative technology offers several benefits, including increased security, trust, and transparency, making it suitable for a wide range of applications. In the last few years, there has been a growing interest in investigating the potential of Blockchain technology to enhance diverse fields, such as e-learning. In this research, we undertook a systematic literature review to explore the potential of Blockchain technology in enhancing the e-learning domain. Our research focused on four main questions: (1) What potential characteristics of Blockchain can contribute to enhancing e-learning? (2) What are the existing Blockchain projects dedicated to e-learning? (3) What are the limitations of existing projects? (4) What are the future trends in Blockchain-related research that will impact e-learning? The results showed that Blockchain technology has several characteristics that could benefit e-learning; we discussed immutability, transparency, decentralization, security, and traceability. We also identified several existing Blockchain projects dedicated to e-learning and discussed their potential to revolutionize learning by providing more transparency, security, and effectiveness. However, our research also revealed many limitations and challenges that must be addressed to realize Blockchain technology’s potential in e-learning.

Full article

(This article belongs to the Special Issue Future Prospects and Advancements in Blockchain Technology)

Open Access Article

Autism Screening in Toddlers and Adults Using Deep Learning and Fair AI Techniques

Future Internet 2023, 15(9), 292; https://doi.org/10.3390/fi15090292 - 28 Aug 2023

Abstract

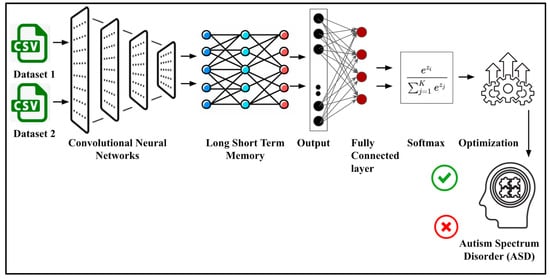

Autism spectrum disorder (ASD) has been associated with conditions like depression, anxiety, epilepsy, etc., due to its impact on an individual’s education, social life, and employment. Since diagnosis is challenging and there is no cure, the goal is to maximize an individual’s ability by reducing the symptoms, and early diagnosis plays a role in improving behavior and language development. In this paper, an autism screening analysis for toddlers and adults has been performed using fair AI (feature engineering, SMOTE, optimizations, etc.) and deep learning methods. The analysis considers traditional deep learning methods like Multilayer Perceptron (MLP), Artificial Neural Networks (ANN), Convolutional Neural Networks (CNN), and Long Short-Term Memory (LSTM), and also proposes two hybrid deep learning models, i.e., CNN–LSTM with Particle Swarm Optimization (PSO), and a CNN model combined with Gated Recurrent Units (GRU–CNN). The models have been validated using multiple performance metrics, and the analysis confirms that the proposed models perform better than the traditional models.

Full article

(This article belongs to the Special Issue Machine Learning Perspective in the Convolutional Neural Network Era)

Open Access Article

A Novel SDWSN-Based Testbed for IoT Smart Applications

Future Internet 2023, 15(9), 291; https://doi.org/10.3390/fi15090291 - 28 Aug 2023

Abstract

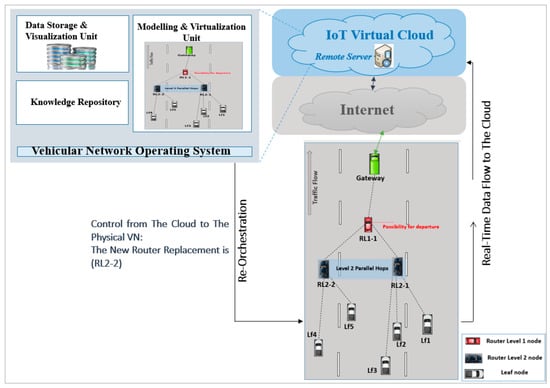

Wireless sensor network (WSN) environment monitoring and smart city applications present challenges for maintaining network connectivity when, for example, dynamic events occur. Such applications can benefit from recent technologies such as software-defined networks (SDNs) and network virtualization to support network flexibility and offer validation for a physical network. This paper aims to present a testbed-based, software-defined wireless sensor network (SDWSN) for IoT applications with a focus on promoting the approach of virtual network testing and analysis prior to physical network implementation to monitor and repair any network failures. Herein, a physical network implementation employing hardware boards such as Texas Instruments CC2538 (TI CC2538) and TI CC1352R sensor nodes is presented and designed based on virtual WSN-based clustering for stationary and dynamic network use cases. Key performance indicators, such as the connection capability of a node (for example, a gateway node to the Internet) based on packet drop and energy consumption, are discussed for both the virtual and physical networks. According to the test findings, the proposed software-defined physical network benefited from “prior-to-implementation” analysis via virtualization, as the performance of both virtual and physical networks is comparable.

Full article

(This article belongs to the Special Issue QoS in Wireless Sensor Network for IoT Applications)

Open Access Article

Short-Term Mobile Network Traffic Forecasting Using Seasonal ARIMA and Holt-Winters Models

Future Internet 2023, 15(9), 290; https://doi.org/10.3390/fi15090290 - 28 Aug 2023

Abstract

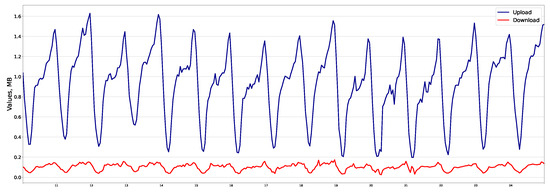

Fifth-generation (5G) networks require efficient radio resource management (RRM), which should dynamically adapt to the current network load and user needs. Monitoring and forecasting network performance requirements and metrics help with this task. One of the parameters that highly influences radio resource management is the profile of user traffic generated by various 5G applications. Forecasting such mobile network profiles helps with numerous RRM tasks such as network slicing and load balancing. In this paper, we analyze a dataset from a mobile network operator in Portugal that contains information about volumes of traffic in download and upload directions in one-hour time slots. We apply two statistical models for forecasting download and upload traffic profiles, namely, seasonal autoregressive integrated moving average (SARIMA) and Holt-Winters models. We demonstrate that both models are suitable for forecasting mobile network traffic. Nevertheless, the SARIMA model is more appropriate for download traffic (e.g., MAPE [mean absolute percentage error] of 11.2% vs. 15% for Holt-Winters), while the Holt-Winters model is better suited for upload traffic (e.g., MAPE of 4.17% vs. 9.9% for SARIMA).
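The MAPE figures quoted above follow the standard definition: the mean of |actual − forecast| / |actual|, expressed as a percentage. A minimal sketch, with the function name `mape` chosen for illustration and hourly traffic volumes assumed to be non-zero:

```python
def mape(actual, forecast):
    """Mean absolute percentage error, in percent.

    actual, forecast: equal-length sequences of observed and predicted values.
    Raises ValueError for empty input or when an observed value is zero,
    because the percentage error is undefined there.
    """
    if len(actual) != len(forecast) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    total = 0.0
    for a, f in zip(actual, forecast):
        if a == 0:
            raise ValueError("MAPE is undefined when an observed value is zero")
        total += abs((a - f) / a)
    return 100.0 * total / len(actual)
```

Because each error is divided by the observed value, MAPE weights misses in low-traffic hours more heavily than the same absolute miss in peak hours, which is worth keeping in mind when comparing download and upload results.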

Full article

(This article belongs to the Special Issue 5G Wireless Communication Networks II)

Open Access Article

3D Path Planning Algorithms in UAV-Enabled Communications Systems: A Mapping Study

Future Internet 2023, 15(9), 289; https://doi.org/10.3390/fi15090289 - 27 Aug 2023

Abstract

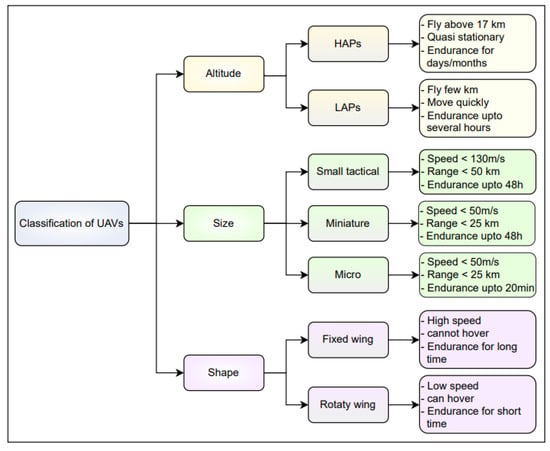

Unmanned Aerial Vehicles (UAVs) equipped with communication technologies have gained significant attention as a promising solution for providing wireless connectivity in remote, disaster-stricken areas lacking communication infrastructure. However, enabling UAVs to provide communications (e.g., UAVs acting as flying base stations) in real scenarios requires the integration of various technologies and algorithms. In particular, 3D path planning algorithms are crucial in determining the optimal obstacle-free path so that UAVs, in isolation or forming networks, can provide wireless coverage in a specific region. Considering that most of the existing proposals in the literature only address path planning in a 2D environment, this paper systematically studies existing path-planning solutions for UAVs in a 3D environment in which optimization models (optimal and heuristics) have been applied. This paper analyzes 37 articles selected from 631 documents from a search in the Scopus database. This paper also presents an overview of UAV-enabled communications systems, the research questions, and the methodology for the systematic mapping study. In the end, this paper provides information about the objectives to be minimized or maximized, the optimization variables used, and the algorithmic strategies employed to solve the 3D path planning problem.

Full article

Open Access Article

Spot Market Cloud Orchestration Using Task-Based Redundancy and Dynamic Costing

Future Internet 2023, 15(9), 288; https://doi.org/10.3390/fi15090288 - 27 Aug 2023

Abstract

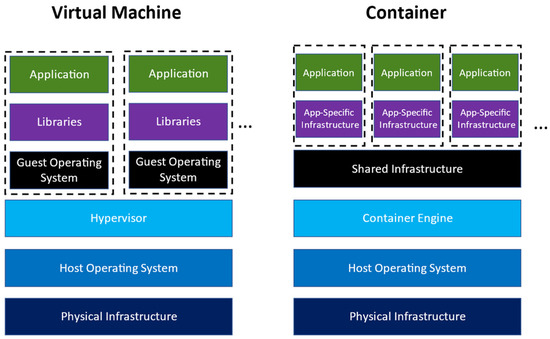

Cloud computing has become ubiquitous in the enterprise environment as its on-demand model realizes technical and economic benefits for users. Cloud users demand a level of reliability, availability, and quality of service. Improvements to reliability generally come at the cost of additional replication. Existing approaches have focused on the replication of virtual environments as a method of improving the reliability of cloud services. As cloud systems move towards microservices-based architectures, a more granular approach to replication is now possible. In this paper, we propose a cloud orchestration approach that balances the potential cost of failure with the spot market running cost, optimizing the resource usage of the cloud system. We present the results of empirical testing we carried out using a simulator to compare the outcome of our proposed approach to a control algorithm based on a static reliability requirement. Our empirical testing showed an improvement of between 37% and 72% in total cost over the control, depending on the specific characteristics of the cloud models tested. We thus propose that in clouds where the cost of failure can be reasonably approximated, our approach may be used to optimize the cloud redundancy configuration to achieve a lower total cost.

Full article

(This article belongs to the Special Issue Cloud Computing and High Performance Computing (HPC) Advances for Next Generation Internet)

Open Access Article

Non-Invasive Monitoring of Vital Signs for the Elderly Using Low-Cost Wireless Sensor Networks: Exploring the Impact on Sleep and Home Security

Future Internet 2023, 15(9), 287; https://doi.org/10.3390/fi15090287 - 24 Aug 2023

Abstract

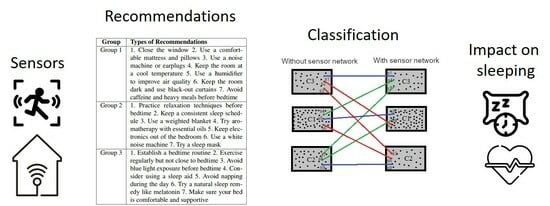

Wireless sensor networks (WSN) are useful in medicine for monitoring the vital signs of elderly patients. These sensors allow for remote monitoring of a patient’s state of health, making care easier for elderly patients and allowing them to avoid, or at least extend the interval between, visits to specialized health centers. The proposed system is a low-cost WSN deployed at the elderly patient’s home, monitoring the main areas of the house and sending daily recommendations to the patient. This study measures the impact of the proposed sensor network on nine vital sign metrics based on a person’s sleep patterns. These metrics were taken from 30 adults over a period of four weeks: the first two weeks without the sensor system and the remaining two weeks with continuous monitoring of the patients, providing security for their homes and a perception of well-being. This work aims to identify relationships between parameters impacted by the sensor system and predictive trends about the level of improvement in vital sign metrics. Moreover, this work focuses on adapting a reactive algorithm for energy and performance optimization for the sensor monitoring system. Results show that sleep metrics improved statistically based on the recommendations for use of the sensor network; the elderly adults slept longer and more continuously, and the higher their heart rate, respiratory rate, and temperature, the greater the likelihood of the impact of the network on the sleep metrics. The proposed energy-saving algorithm for the WSN succeeded in reducing energy consumption and improving the resilience of the network.

Full article

(This article belongs to the Special Issue Applications of Wireless Sensor Networks and Internet of Things)

Open Access Review

Generative AI in Medicine and Healthcare: Promises, Opportunities and Challenges

Future Internet 2023, 15(9), 286; https://doi.org/10.3390/fi15090286 - 24 Aug 2023

Abstract

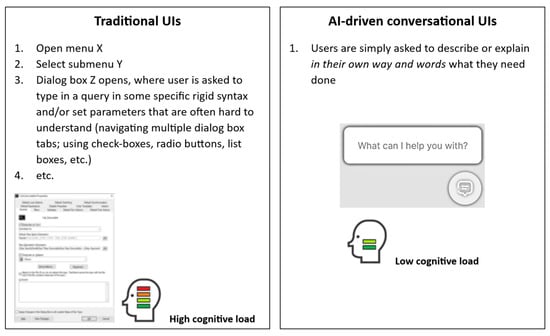

Generative AI (artificial intelligence) refers to algorithms and models, such as OpenAI’s ChatGPT, that can be prompted to generate various types of content. In this narrative review, we present a selection of representative examples of generative AI applications in medicine and healthcare. We then briefly discuss some associated issues, such as trust, veracity, clinical safety and reliability, privacy, copyrights, ownership, and opportunities, e.g., AI-driven conversational user interfaces for friendlier human-computer interaction. We conclude that generative AI will play an increasingly important role in medicine and healthcare as it further evolves and gets better tailored to the unique settings and requirements of the medical domain and as the laws, policies and regulatory frameworks surrounding its use start taking shape.

Full article

(This article belongs to the Special Issue The Future Internet of Medical Things II)

Open Access Article

Prospects of Cybersecurity in Smart Cities

Future Internet 2023, 15(9), 285; https://doi.org/10.3390/fi15090285 - 23 Aug 2023

Abstract

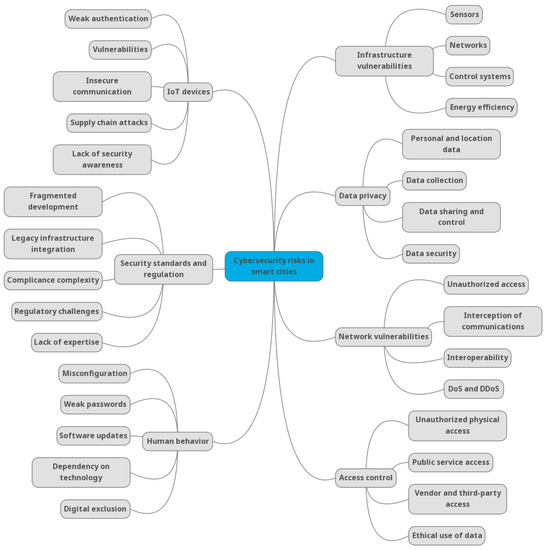

The complex and interconnected infrastructure of smart cities offers several opportunities for attackers to exploit vulnerabilities and carry out cyberattacks that can have serious consequences for the functioning of cities’ critical infrastructures. This study aims to address this phenomenon and characterize the dimensions of security risks in smart cities and present mitigation proposals to address these risks. The study adopts a qualitative methodology through the identification of 62 European research projects in the field of cybersecurity in smart cities, which are underway during the period from 2022 to 2027. Compared to previous studies, this work provides a comprehensive view of security risks from the perspective of multiple universities, research centers, and companies participating in European projects. The findings of this study offer relevant scientific contributions by identifying 7 dimensions and 31 sub-dimensions of cybersecurity risks in smart cities and proposing 24 mitigation strategies to face these security challenges. Furthermore, this study explores emerging cybersecurity issues to which smart cities are exposed by the increasing proliferation of new technologies and standards.

Full article

(This article belongs to the Special Issue Cyber Security Challenges in the New Smart Worlds)

Open Access Article

3D Visualization in Digital Medicine Using XR Technology

Future Internet 2023, 15(9), 284; https://doi.org/10.3390/fi15090284 - 22 Aug 2023

Abstract

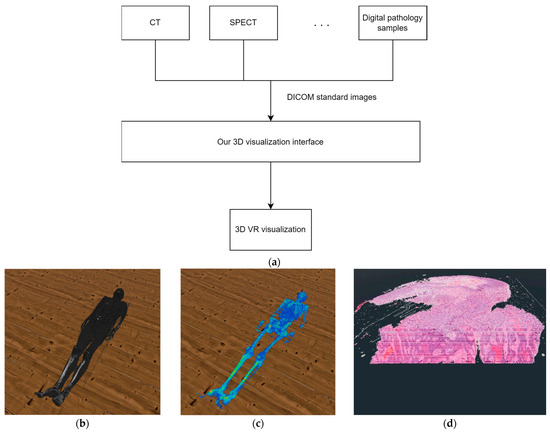

Virtual reality is a new and rapidly developing technology that provides the opportunity for a new, more immersive form of data visualization. Evaluating digitized pathological serial sections and establishing the appropriate diagnosis is one of the key tasks of the pathologist in daily work. The type of tools used by pathologists in the evaluation of samples has not changed much in recent decades. On the other hand, the amount of information required to establish an accurate diagnosis has increased significantly. Nowadays, pathologists work with the help of multiple high-resolution desktop monitors. Instead of large screens, virtual reality can serve as an alternative solution that provides a virtualized working space for pathologists during routine sample evaluation. In our research, we defined a new immersive working environment for pathologists. In our proposed solution, we visualize several types of digitized medical image data with the corresponding metadata in 3D, and we also defined virtualized functions that support the evaluation process. The main aim of this paper is to present the new possibilities provided by 3D visualization and virtual reality in digital pathology. The paper presents a new virtual reality-based examination environment, as well as software functionalities that are essential for 3D pathological tissue evaluation.

Full article

(This article belongs to the Special Issue Virtual Reality and Metaverse: Impact on the Digital Transformation of Society)

Open Access Article

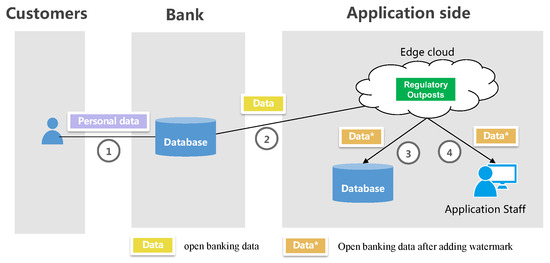

E-SAWM: A Semantic Analysis-Based ODF Watermarking Algorithm for Edge Cloud Scenarios

Future Internet 2023, 15(9), 283; https://doi.org/10.3390/fi15090283 - 22 Aug 2023

Abstract


With the growing demand for data sharing file formats in financial applications driven by open banking, the use of the OFD (open fixed-layout document) format has become widespread. However, ensuring data security, traceability, and accountability poses significant challenges. To address these concerns, we propose E-SAWM, a dynamic watermarking service framework designed for edge cloud scenarios. This framework incorporates dynamic watermark information at the edge, allowing for precise tracking of data leakage throughout the data-sharing process. By utilizing semantic analysis, E-SAWM generates highly realistic pseudostatements that exploit the structural characteristics of documents within OFD files. These pseudostatements are strategically distributed to embed redundant bits into the structural documents, ensuring that the watermark remains resistant to removal or complete destruction. Experimental results demonstrate that our algorithm has a minimal impact on the original file size, with the watermarked text occupying less than 15%, indicating a high capacity for carrying the watermark. Additionally, compared to existing explicit watermarking schemes for OFD files based on annotation structure, our proposed watermarking scheme is suitable for the technical requirements of complex dynamic watermarking in edge cloud scenario deployment. It effectively overcomes vulnerabilities associated with easy deletion and tampering, providing high concealment and robustness.

(This article belongs to the Special Issue Edge-Cloud Computing and Federated-Split Learning in the Internet of Things)

Open Access Article

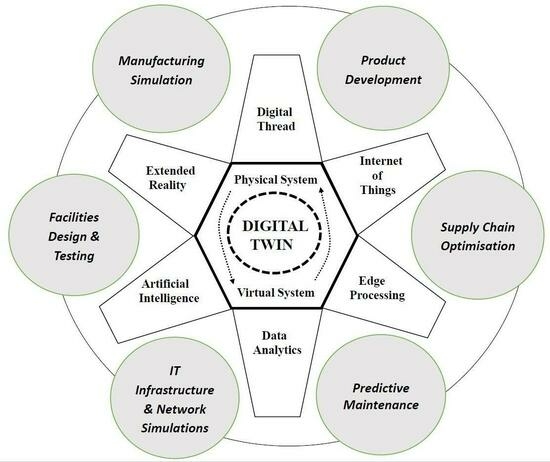

Digital Twin Applications in Manufacturing Industry: A Case Study from a German Multi-National


Future Internet 2023, 15(9), 282; https://doi.org/10.3390/fi15090282 - 22 Aug 2023

Abstract


This article examines how digital twins have been used in a multi-national corporation, what technologies have been used, what benefits have been delivered, and the significance of people- and process-related issues in achieving successful implementation. A qualitative, inductive research method is used, based on interviews provided by key personnel involved in three digital twin projects. The article concludes that digital twin projects are likely to involve incremental rather than disruptive change, and that successful implementation is usually underpinned by ensuring technology, people, and process change factors are progressed in a balanced and integrated fashion. Building upon existing frameworks, three “properties” are identified as being of particular value in digital twin projects—workforce adaptability, technology manageability, and process agility—and a related set of steps and actions is put forward as a template and point of reference for future digital twin implementations. The combination of assessing digital properties and following a set of key actions represents a novel approach to digital twin project planning, and overall the findings are a contribution to the developing theory around digital twins and digitalization, in general, and are also of relevance to professionals embarking on DT projects.

(This article belongs to the Special Issue Big Data Analytics for the Industrial Internet of Things)

Open Access Article

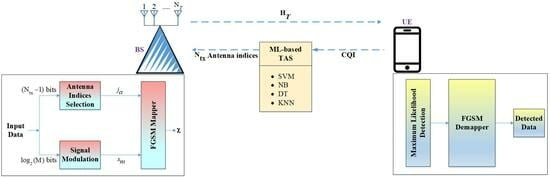

Intelligent Transmit Antenna Selection Schemes for High-Rate Fully Generalized Spatial Modulation


Future Internet 2023, 15(8), 281; https://doi.org/10.3390/fi15080281 - 21 Aug 2023

Abstract


The sixth-generation (6G) network is expected to transmit significantly more data at much higher rates than existing networks while meeting stringent energy efficiency (EE) targets. High-rate spatial modulation (SM) methods can be used to address these design requirements. SM uses transmit antenna selection (TAS) to improve the EE of the network. Although it is computationally intensive, free distance optimized TAS (FD-TAS) achieves the best average bit error rate (ABER) performance. The present investigation examines the effectiveness of various machine learning (ML)-assisted TAS schemes, such as support vector machine (SVM), naïve Bayes (NB), K-nearest neighbor (KNN), and decision tree (DT), applied to the small-scale multiple-input multiple-output (MIMO)-based fully generalized spatial modulation (FGSM) system. To the best of our knowledge, there are no ML-based antenna selection schemes for high-rate FGSM. SVM-based TAS schemes achieve ∼71.1% classification accuracy, outperforming all other approaches. The ABER performance of each scheme is evaluated using a higher constellation order, along with various transmit antennas to achieve the target ABER of

(This article belongs to the Special Issue AI, Machine Learning and Data Analytics for Wireless Communications II)


Open Access Article

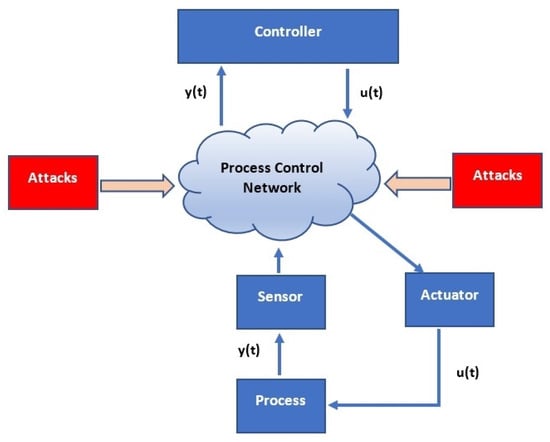

Detection of Man-in-the-Middle (MitM) Cyber-Attacks in Oil and Gas Process Control Networks Using Machine Learning Algorithms


Future Internet 2023, 15(8), 280; https://doi.org/10.3390/fi15080280 - 21 Aug 2023

Abstract


Recently, the process control networks (PCNs) of oil and gas installations have been subjected to amorphous cyber-attacks. Examples include denial-of-service (DoS), distributed denial-of-service (DDoS), and man-in-the-middle (MitM) attacks, which may largely have been caused by the integration of open networks with operation technology (OT) as a result of low-cost network expansion. The connection of OT to the internet for firmware updates, third-party support, or vendor intervention has exposed the industry to attacks. The inability to detect these unpredictable cyber-attacks leaves the PCN exposed, and a successful attack can have devastating effects. This paper reviews the different forms of cyber-attacks on the PCNs of oil and gas installations and proposes the use of machine learning algorithms to monitor data exchanges between the sensors, controllers, processes, and final control elements on the network in order to detect anomalies in such exchanges. Python 3.0 Libraries, Deep-Learning Toolkit, MATLAB, and Allen Bradley RSLogic 5000 PLC Emulator software were used to simulate the process control. The outcomes of the experiments show the reliability and functionality of the different machine learning algorithms in detecting these anomalies, with notably precise attack detection achieved using tree algorithms (bagged or coarse) for man-in-the-middle (MitM) attacks, while taking note of the trade-offs between accuracy and computational complexity.

(This article belongs to the Special Issue Security in the Internet of Things (IoT))

Open Access Article

Cluster-Based Data Aggregation in Flying Sensor Networks Enabled Internet of Things

Future Internet 2023, 15(8), 279; https://doi.org/10.3390/fi15080279 - 20 Aug 2023

Abstract


Multiple unmanned aerial vehicles (UAVs) are organized into clusters in a flying sensor network (FSNet) to achieve scalability and prolong the network lifetime. A variety of optimization schemes can be adapted to determine the cluster head (CH) and to form stable and balanced clusters. In FSNet, duplicated data may also be transmitted to the CHs when multiple UAVs monitor activities in the vicinity of an event of interest. Communicating duplicate data may consume more energy and bandwidth than the computation needed for data aggregation. This paper proposes a modified honey-bee algorithm (HBA) to select the optimal CH set and form stable and balanced clusters. The modified HBA determines CHs based on residual energy, UAV degree, and relative mobility. To transmit data, each UAV joins the nearest CH. The re-affiliation rate decreases with the proposed stable clustering procedure. Once a cluster is formed, ordinary UAVs transmit data to their CH. An aggregation method based on dynamic programming is proposed and applied at the cluster level to minimize communication and save bandwidth and energy. Simulation experiments validated the proposed scheme, and the results are compared with recent cluster-based data aggregation schemes. The results show that our proposed scheme outperforms state-of-the-art cluster-based data aggregation schemes in FSNet.

(This article belongs to the Special Issue Wireless Communications and Networking for Unmanned Aerial Vehicles, Ground Mobile Robots, and Marine Vehicles)


Topics

Topic in

Entropy, Future Internet, Healthcare, MAKE, Sensors

Communications Challenges in Health and Well-Being

Topic Editors: Dragana Bajic, Konstantinos Katzis, Gordana Gardasevic

Deadline: 30 November 2023

Topic in

Algorithms, Entropy, Future Internet, Mathematics, Symmetry

Complex Systems and Network Science

Topic Editors: Massimo Marchiori, Latora Vito

Deadline: 31 December 2023

Topic in

Administrative Sciences, Future Internet, Information, Smart Cities, Social Sciences, Technologies, Urban Science

From ChatGPT to GovGPT: The Future of Digital Government

Topic Editors: Liang Ma, Yueping Zheng, Ziteng Fan

Deadline: 31 January 2024

Topic in

Computers, Energies, Future Internet, Information, Mathematics

Research on Blockchain Technology for Peer-to-Peer (P2P) Energy Trading

Topic Editors: Pierre-Martin Tardif, Brahim El Bhiri, Bilal Abu-Salih, Kenji Tanaka

Deadline: 29 February 2024


Special Issues

Special Issue in

Future Internet

6G Wireless Communication Systems: Applications, Opportunities and Challenges II

Guest Editors: Raed A. Abd-Alhameed, Kelvin Anoh, Yousef Dama, Simeon Keates, Chan Hwang See

Deadline: 20 September 2023

Special Issue in

Future Internet

6G Wireless Channel Measurements and Models: Trends and Challenges

Guest Editor: Seong Ki Yoo

Deadline: 30 September 2023

Special Issue in

Future Internet

Securing Big Data Analytics for Cyber-Physical Systems

Guest Editors: Wei Yu, Weixian Liao, Fan Liang

Deadline: 20 October 2023

Special Issue in

Future Internet

Key Enabling Technologies for Beyond 5G Networks

Guest Editors: Dania Marabissi, Lorenzo Mucchi

Deadline: 31 October 2023

Topical Collections

Topical Collection in

Future Internet

Featured Reviews of Future Internet Research

Collection Editor: Dino Giuli

Topical Collection in

Future Internet

5G/6G Networks for the Internet of Things: Communication Technologies and Challenges

Collection Editor: Sachin Sharma

Topical Collection in

Future Internet

Computer Vision, Deep Learning and Machine Learning with Applications

Collection Editors: Remus Bard, Arpad Gellert

Topical Collection in

Future Internet

Innovative People-Centered Solutions Applied to Industries, Cities and Societies

Collection Editors: Dino Giuli, Filipe Portela
