AR/VR Teaching-Learning Experiences in Higher Education Institutions (HEI): A Systematic Literature Review

AR/VR Teaching-Learning Experiences in Higher Education Institutions (HEI): A Systematic Literature Review -

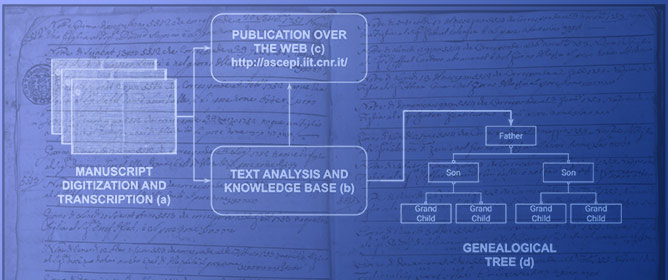

Genealogical Data Mining from Historical Archives: The Case of the Jewish Community in Pisa

Meeting Ourselves or Other Sides of Us?—Meta-Analysis of the Metaverse

Low-Code Machine Learning Platforms: A Fastlane to Digitalization

Journal Description

Informatics is an international, peer-reviewed, open access journal on information and communication technologies, human–computer interaction, and social informatics, and is published quarterly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), dblp, and other databases.

- Journal Rank: CiteScore - Q1 (Communication)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 25.5 days after submission; acceptance to publication is undertaken in 4.4 days (median values for papers published in this journal in the first half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 3.1 (2022); 5-Year Impact Factor: 2.7 (2022)

Latest Articles

A Comprehensive Analysis of the Worst Cybersecurity Vulnerabilities in Latin America

Informatics 2023, 10(3), 71; https://doi.org/10.3390/informatics10030071 (registering DOI) - 31 Aug 2023

Vulnerabilities in cyber defense in the countries of the Latin American region have favored the activities of cybercriminals from different parts of the world who have carried out a growing number of cyberattacks that affect public and private services and compromise the integrity of users and organizations. This article describes the most representative vulnerabilities related to cyberattacks that have affected different sectors of countries in the Latin American region. A systematic review of repositories and the scientific literature was conducted, considering journal articles, conference proceedings, and reports from official bodies and leading brands of cybersecurity systems. The cybersecurity vulnerabilities identified in the countries of the Latin American region are low cybersecurity awareness, lack of standards and regulations, use of outdated software, security gaps in critical infrastructure, and lack of training and professional specialization.

Open Access Essay

What Is It Like to Make a Prototype? Practitioner Reflections on the Intersection of User Experience and Digital Humanities/Social Sciences during the Design and Delivery of the “Getting to Mount Resilience” Prototype

Informatics 2023, 10(3), 70; https://doi.org/10.3390/informatics10030070 - 28 Aug 2023

The digital humanities and social sciences are critical for addressing societal challenges such as climate change and disaster risk reduction. One way in which the digital humanities and social sciences add value, particularly in an increasingly digitised society, is by engaging different communities through digital services and products. Alongside this observation, the field of user experience (UX) has also become popular in industrial settings. UX specifically concerns designing and developing digital products and solutions, and, while it is popular in business and other academic domains, there is disquiet in the digital humanities/social sciences towards UX and a general lack of engagement. This paper shares the reflections and insights of a digital humanities/social science practitioner working on a UX project to build a prototype demonstrator for disaster risk reduction. Insights come from formal developmental and participatory evaluation activities, as well as qualitative self-reflection. The paper identifies lessons learnt, noting challenges experienced—including feelings of uncertainty and platform dependency—and reflects on the hesitancy practitioners may have and potential barriers in participation between UX and the digital humanities/social science. It concludes that digital humanities/social science practitioners have few skill barriers and offer a valued perspective, but unclear opportunities for critical engagement may present a barrier.

(This article belongs to the Section Digital Humanities)

Open Access Article

Theoretical Models for Acceptance of Human Implantable Technologies: A Narrative Review

Informatics 2023, 10(3), 69; https://doi.org/10.3390/informatics10030069 - 26 Aug 2023

Theoretical models play a vital role in understanding the barriers and facilitators for the acceptance or rejection of emerging technologies. We conducted a narrative review of theoretical models predicting acceptance and adoption of human enhancement embeddable technologies to assess how well those models have studied unique attributes and qualities of embeddables and to identify gaps in the literature. Our broad search across multiple databases and Google Scholar identified 15 relevant articles published since 2016. We discovered that three main theoretical models: the technology acceptance model (TAM), unified theory of acceptance and use of technology (UTAUT), and cognitive–affective–normative (CAN) model have been consistently used and refined to explain the acceptance of human enhancement embeddable technology. Psychological constructs such as self-efficacy, motivation, self-determination, and demographic factors were also explored as mediating and moderating variables. Based on our analysis, we collated the verified determinants into a comprehensive model, modifying the CAN model. We also identified gaps in the literature and recommended a further exploration of design elements and psychological constructs. Additionally, we suggest investigating other models such as the matching person and technology model (MPTM), the hedonic-motivation system adoption model (HMSAM), and the value-based adoption model (VAM) to provide a more nuanced understanding of embeddable technologies’ adoption. Our study not only synthesizes the current state of research but also provides a robust framework for future investigations. By offering insights into the complex interplay of factors influencing the adoption of embeddable technologies, we contribute to the development of more effective strategies for design, implementation, and acceptance, thereby paving the way for the successful integration of these technologies into everyday life.

Full article

(This article belongs to the Section Human-Computer Interaction)

►▼

Show Figures

Figure 1

Open AccessArticle

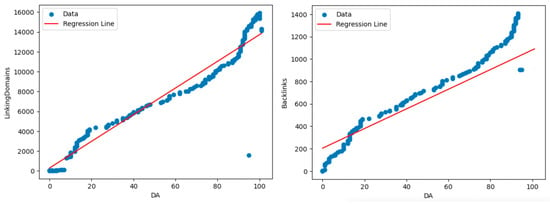

A Machine Learning Python-Based Search Engine Optimization Audit Software

Informatics 2023, 10(3), 68; https://doi.org/10.3390/informatics10030068 - 25 Aug 2023

In the present-day digital landscape, websites have increasingly relied on digital marketing practices, notably search engine optimization (SEO), as a vital component in promoting sustainable growth. The traffic a website receives directly determines its development and success. As such, website owners frequently engage the services of SEO experts to enhance their website’s visibility and increase traffic. These specialists employ premium SEO audit tools that crawl the website’s source code to identify structural changes necessary to comply with specific ranking criteria, commonly called SEO factors. Working collaboratively with developers, SEO specialists implement technical changes to the source code and await the results. The cost of purchasing premium SEO audit tools or hiring an SEO specialist typically ranges in the thousands of dollars per year. Against this backdrop, this research endeavors to provide an open-source Python-based Machine Learning SEO software tool to the general public, catering to the needs of both website owners and SEO specialists. The tool analyzes the top-ranking websites for a given search term, assessing their on-page and off-page SEO strategies, and provides recommendations to enhance a website’s performance to surpass its competition. The tool yields remarkable results, boosting average daily organic traffic from 10 to 143 visitors.

Full article

(This article belongs to the Topic Software Engineering and Applications)

►▼

Show Figures

Figure 1

Open AccessArticle

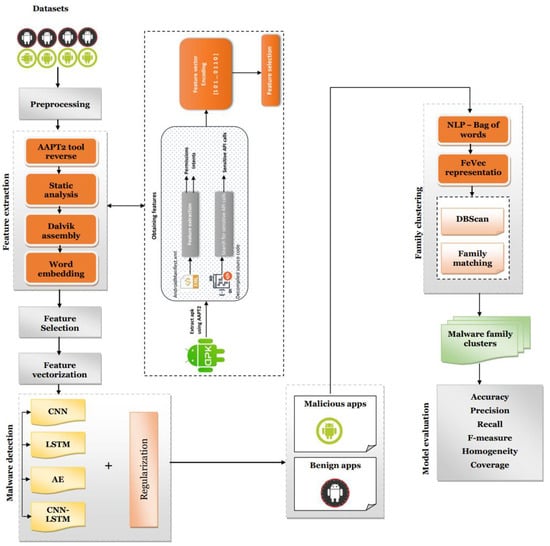

A Proposed Artificial Intelligence Model for Android-Malware Detection

Informatics 2023, 10(3), 67; https://doi.org/10.3390/informatics10030067 - 18 Aug 2023

There are a variety of reasons why smartphones have grown so pervasive in our daily lives. While their benefits are undeniable, Android users must be vigilant against malicious apps. The goal of this study was to develop a broad framework for detecting Android malware using multiple deep learning classifiers; this framework was given the name DroidMDetection. To provide precise, dynamic, Android malware detection and clustering of different families of malware, the framework makes use of unique methodologies built based on deep learning and natural language processing (NLP) techniques. When compared to other similar works, DroidMDetection (1) uses API calls and intents in addition to the common permissions to accomplish broad malware analysis, (2) uses digests of features in which a deep auto-encoder generates to cluster the detected malware samples into malware family groups, and (3) benefits from both methods of feature extraction and selection. Numerous reference datasets were used to conduct in-depth analyses of the framework. DroidMDetection’s detection rate was high, and the created clusters were relatively consistent, no matter the evaluation parameters. DroidMDetection surpasses state-of-the-art solutions MaMaDroid, DroidMalwareDetector, MalDozer, and DroidAPIMiner across all metrics we used to measure their effectiveness.

Full article

Figure 1

Open AccessArticle

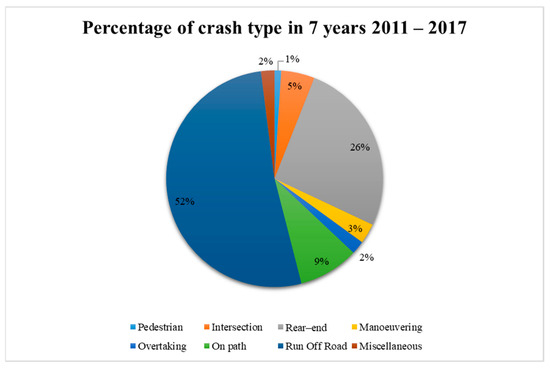

Analysis of Factors Associated with Highway Personal Car and Truck Run-Off-Road Crashes: Decision Tree and Mixed Logit Model with Heterogeneity in Means and Variances Approaches

Informatics 2023, 10(3), 66; https://doi.org/10.3390/informatics10030066 - 18 Aug 2023

Among several approaches to analyzing crash research, the use of machine learning and econometric analysis has found potential in the analysis. This study aims to empirically examine factors influencing the single-vehicle crash for personal cars and trucks using decision trees (DT) and mixed binary logit with heterogeneity in means and variances (RPBLHMV) and compare model accuracy. The data in this study were obtained from the Department of Highway during 2011–2017, and the results indicated that the RPBLHMV was superior due to its higher overall prediction accuracy, sensitivity, and specificity values when compared to the DT model. According to the RPBLHMV results, car models showed that injury severity was associated with driver gender, seat belt, mount the island, defect equipment, and safety equipment. For the truck model, it was found that crashes located at intersections or medians, mounts on the island, and safety equipment have a significant influence on injury severity. DT results also showed that running off-road and hitting safety equipment can reduce the risk of death for car and truck drivers. This finding can illustrate the difference causing the dependent variable in each model. The RPBLHMV showed the ability to capture random parameters and unobserved heterogeneity. But DT can be easily used to provide variable importance and show which factor has the most significance by sequencing. Each model has advantages and disadvantages. The study findings can give relevant authorities choices for measures and policy improvement based on two analysis methods in accordance with their policy design. Therefore, whether advocating road safety or improving policy measures, the use of appropriate methods can increase operational efficiency.

(This article belongs to the Special Issue Feature Papers in Big Data)

Open Access Article

Exploring How Healthcare Organizations Use Twitter: A Discourse Analysis

by

and

Informatics 2023, 10(3), 65; https://doi.org/10.3390/informatics10030065 - 08 Aug 2023

Abstract

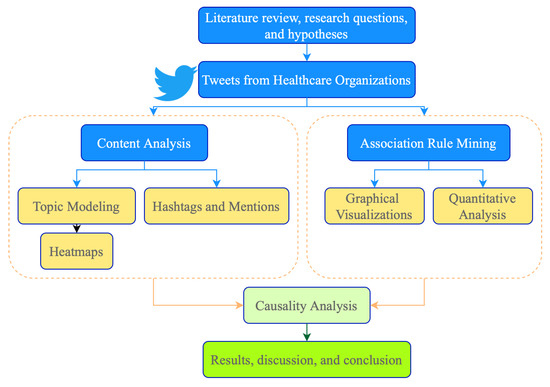

The use of Twitter by healthcare organizations is an effective means of disseminating medical information to the public. However, the content of tweets can be influenced by various factors, such as health emergencies and medical breakthroughs. In this study, we conducted a discourse

[...] Read more.

The use of Twitter by healthcare organizations is an effective means of disseminating medical information to the public. However, the content of tweets can be influenced by various factors, such as health emergencies and medical breakthroughs. In this study, we conducted a discourse analysis to better understand how public and private healthcare organizations use Twitter and the factors that influence the content of their tweets. Data were collected from the Twitter accounts of five private pharmaceutical companies, two US and two Canadian public health agencies, and the World Health Organization from 1 January 2020, to 31 December 2022. The study applied topic modeling and association rule mining to identify text patterns that influence the content of tweets across different Twitter accounts. The findings revealed that building a reputation on Twitter goes beyond just evaluating the popularity of a tweet in the online sphere. Topic modeling, when applied synchronously with hashtag and tagging analysis can provide an increase in tweet popularity. Additionally, the study showed differences in language use and style across the Twitter accounts’ categories and discussed how the impact of popular association rules could translate to significantly more user engagement. Overall, the results of this study provide insights into natural language processing for health literacy and present a way for organizations to structure their future content to ensure maximum public engagement.
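For readers unfamiliar with association rule mining, the two basic quantities behind a rule can be sketched as follows. This is a minimal illustration, not the authors' pipeline: the hashtag sets and the function names `support` and `confidence` are hypothetical, chosen only to show how a rule such as "covid → vaccine" would be scored.

```python
from typing import FrozenSet, List

def support(transactions: List[FrozenSet[str]], itemset: FrozenSet[str]) -> float:
    """Fraction of transactions that contain every item in `itemset`."""
    return sum(1 for t in transactions if itemset <= t) / len(transactions)

def confidence(transactions: List[FrozenSet[str]],
               antecedent: FrozenSet[str],
               consequent: FrozenSet[str]) -> float:
    """Estimated P(consequent | antecedent) over the transactions."""
    return support(transactions, antecedent | consequent) / support(transactions, antecedent)

# Hypothetical hashtag sets, one per tweet (made-up data, not from the study).
tweets = [
    frozenset({"covid", "vaccine"}),
    frozenset({"covid", "vaccine", "booster"}),
    frozenset({"covid"}),
    frozenset({"flu", "vaccine"}),
]

rule_support = support(tweets, frozenset({"covid", "vaccine"}))
rule_confidence = confidence(tweets, frozenset({"covid"}), frozenset({"vaccine"}))
```

Rules with high support and confidence are the "popular" rules; how they relate to engagement is a finding of the article, not something this sketch demonstrates.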

(This article belongs to the Special Issue Novel Informatics Algorithms and Applications to Biomedicine)

Open Access Article

Reinforcement Learning for Reducing the Interruptions and Increasing Fault Tolerance in the Cloud Environment

Informatics 2023, 10(3), 64; https://doi.org/10.3390/informatics10030064 - 02 Aug 2023

Abstract

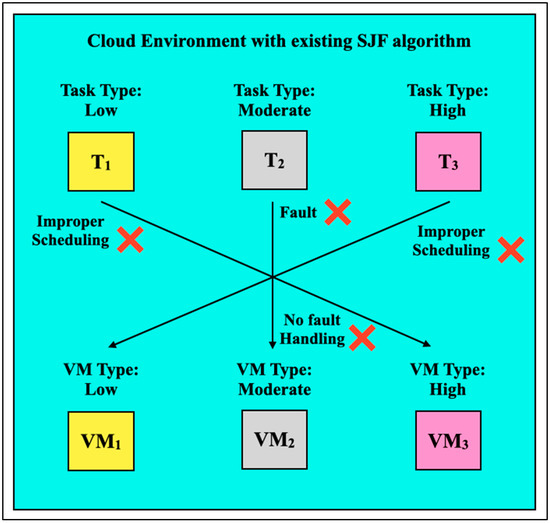

Cloud computing delivers robust computational services by processing tasks on its virtual machines (VMs) using resource-scheduling algorithms. The cloud’s existing algorithms provide limited results due to inappropriate resource scheduling. Additionally, these algorithms cannot process tasks generating faults while being computed. The primary reason

[...] Read more.

Cloud computing delivers robust computational services by processing tasks on its virtual machines (VMs) using resource-scheduling algorithms. The cloud’s existing algorithms provide limited results due to inappropriate resource scheduling. Additionally, these algorithms cannot process tasks generating faults while being computed. The primary reason for this is that these existing algorithms need an intelligence mechanism to enhance their abilities. To provide an intelligence mechanism to improve the resource-scheduling process and provision the fault-tolerance mechanism, an algorithm named reinforcement learning-shortest job first (RL-SJF) has been implemented by integrating the RL technique with the existing SJF algorithm. An experiment was conducted in a simulation platform to compare the working of RL-SJF with SJF, and challenging tasks were computed in multiple scenarios. The experimental results convey that the RL-SJF algorithm enhances the resource-scheduling process by improving the aggregate cost by 14.88% compared to the SJF algorithm. Additionally, the RL-SJF algorithm provided a fault-tolerance mechanism by computing 55.52% of the total tasks compared to 11.11% of the SJF algorithm. Thus, the RL-SJF algorithm improves the overall cloud performance and provides the ideal quality of service (QoS).
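As background, the SJF baseline that RL-SJF is compared against can be sketched in a few lines. This is a simplified illustration under stated assumptions (a single machine, non-preemptive scheduling, all tasks arriving at time zero); it does not reproduce the reinforcement learning component or the fault-tolerance mechanism, and the function name `sjf_schedule` is hypothetical.

```python
from typing import List, Tuple

def sjf_schedule(burst_times: List[float]) -> Tuple[List[int], List[float]]:
    """Non-preemptive shortest-job-first for tasks that all arrive at time 0.

    Returns the execution order (task indices) and each task's waiting time,
    indexed by the original task position.
    """
    order = sorted(range(len(burst_times)), key=lambda i: burst_times[i])
    waits = [0.0] * len(burst_times)
    clock = 0.0
    for i in order:
        waits[i] = clock          # task i waits until all shorter tasks finish
        clock += burst_times[i]
    return order, waits

# Four tasks with illustrative burst times (arbitrary units).
order, waits = sjf_schedule([6, 8, 7, 3])
avg_wait = sum(waits) / len(waits)
```

Running shorter tasks first minimizes the average waiting time in this simplified setting, which is why SJF is a common baseline for scheduling comparisons.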

(This article belongs to the Topic Theory and Applications of High Performance Computing)

Open Access Article

Finding Good Attribute Subsets for Improved Decision Trees Using a Genetic Algorithm Wrapper; a Supervised Learning Application in the Food Business Sector for Wine Type Classification

Informatics 2023, 10(3), 63; https://doi.org/10.3390/informatics10030063 - 21 Jul 2023

Abstract

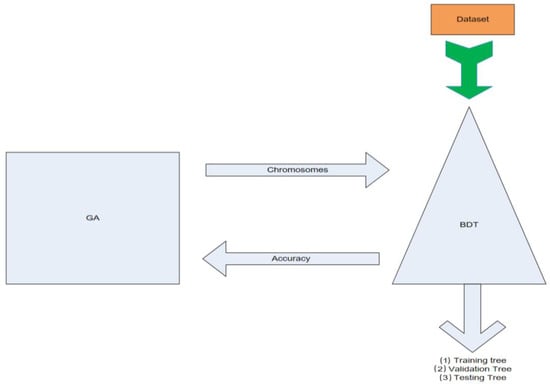

This study aims to provide a method that will assist decision makers in managing large datasets, eliminating the decision risk and highlighting significant subsets of data with certain weight. Thus, binary decision tree (BDT) and genetic algorithm (GA) methods are combined using a

[...] Read more.

This study aims to provide a method that will assist decision makers in managing large datasets, eliminating the decision risk and highlighting significant subsets of data with certain weight. Thus, binary decision tree (BDT) and genetic algorithm (GA) methods are combined using a wrapping technique. The BDT algorithm is used to classify data in a tree structure, while the GA is used to identify the best attribute combinations from a set of possible combinations, referred to as generations. The study seeks to address the problem of overfitting that may occur when classifying large datasets by reducing the number of attributes used in classification. Using the GA, the number of selected attributes is minimized, reducing the risk of overfitting. The algorithm produces many attribute sets that are classified using the BDT algorithm and are assigned a fitness number based on their accuracy. The fittest set of attributes, or chromosomes, as well as the BDTs, are then selected for further analysis. The training process uses the data of a chemical analysis of wines grown in the same region but derived from three different cultivars. The results demonstrate the effectiveness of this innovative approach in defining certain ingredients and weights of wine’s origin.
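The wrapper idea described in this abstract can be sketched roughly as follows. This is an illustrative outline only, not the authors' implementation: each chromosome is a bit mask over attributes, and the fitness function is supplied by the caller. In the article the fitness would be the accuracy of a decision tree trained on the selected attributes; here a toy fitness stands in for it, and all names and parameter values (`ga_select`, `toy_fitness`, population size, mutation rate) are assumptions.

```python
import random
from typing import Callable, List, Tuple

Chromosome = Tuple[int, ...]  # 1 = attribute selected, 0 = attribute dropped

def ga_select(fitness: Callable[[Chromosome], float], n_attrs: int,
              pop_size: int = 20, generations: int = 30,
              mutation_rate: float = 0.1, seed: int = 0) -> Chromosome:
    """Search attribute subsets with a simple genetic algorithm (n_attrs >= 2)."""
    rng = random.Random(seed)
    pop: List[Chromosome] = [
        tuple(rng.randint(0, 1) for _ in range(n_attrs)) for _ in range(pop_size)
    ]
    best = max(pop, key=fitness)
    for _ in range(generations):
        # Keep the fitter half of the population as parents.
        parents = sorted(pop, key=fitness, reverse=True)[: pop_size // 2]
        children: List[Chromosome] = []
        while len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            cut = rng.randint(1, n_attrs - 1)             # one-point crossover
            child = a[:cut] + b[cut:]
            child = tuple(g ^ 1 if rng.random() < mutation_rate else g
                          for g in child)                 # bit-flip mutation
            children.append(child)
        pop = children
        best = max(pop + [best], key=fitness)             # remember the best seen
    return best

def toy_fitness(mask: Chromosome) -> float:
    """Stand-in for classifier accuracy: attributes 0 and 2 are 'informative',
    and every selected attribute carries a small penalty."""
    hits = sum(1 for i in (0, 2) if mask[i])
    return hits - 0.1 * sum(mask)

best = ga_select(toy_fitness, n_attrs=6)
```

Replacing `toy_fitness` with a function that trains and scores a classifier on the masked attributes turns this into a wrapper method; the small per-attribute penalty is one simple way to favor smaller subsets.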

(This article belongs to the Special Issue Applications of Machine Learning and Deep Learning in Agriculture)

Open Access Article

Biologically Plausible Boltzmann Machine

Informatics 2023, 10(3), 62; https://doi.org/10.3390/informatics10030062 - 14 Jul 2023

Abstract

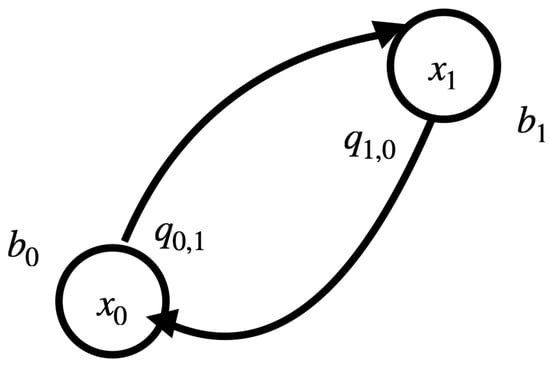

The dichotomy in power consumption between digital and biological information processing systems is an intriguing open question related at its core with the necessity for a more thorough understanding of the thermodynamics of the logic of computing. To contribute in this regard, we

[...] Read more.

The dichotomy in power consumption between digital and biological information processing systems is an intriguing open question related at its core with the necessity for a more thorough understanding of the thermodynamics of the logic of computing. To contribute in this regard, we put forward a model that implements the Boltzmann machine (BM) approach to computation through an electric substrate under thermal fluctuations and dissipation. The resulting network has precisely defined statistical properties, which are consistent with the data that are accessible to the BM. It is shown that by the proposed model, it is possible to design neural-inspired logic gates capable of universal Turing computation under similar thermal conditions to those found in biological neural networks and with information processing and storage electric potentials at comparable scales.

Full article

(This article belongs to the Section Machine Learning)

►▼

Show Figures

Figure 1

Open AccessArticle

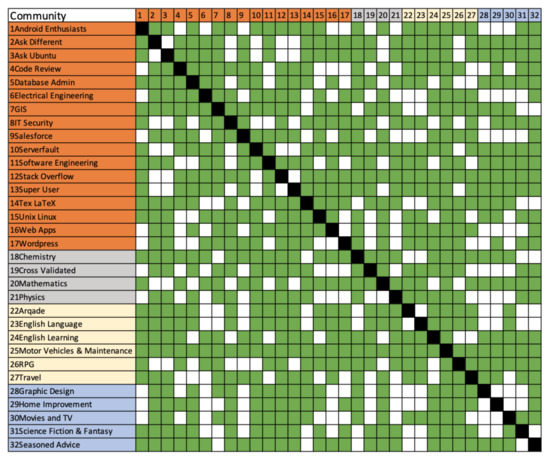

Poverty Traps in Online Knowledge-Based Peer-Production Communities

Informatics 2023, 10(3), 61; https://doi.org/10.3390/informatics10030061 - 13 Jul 2023

Online knowledge-based peer-production communities, like question and answer sites (Q&A), often rely on gamification, e.g., through reputation points, to incentivize users to contribute frequently and effectively. These gamification techniques are important for achieving the critical mass that sustains a community and enticing new users to join. However, aging communities tend to build “poverty traps” that act as barriers for new users. In this paper, we present our investigation of 32 domain communities from Stack Exchange and our analysis of how different subjects impact the development of early user advantage. Our results raise important questions about the accessibility of knowledge-based peer-production communities. We consider the analysis results in the context of changing information needs and the relevance of Q&A in the future. Our findings inform policy design for building more equitable knowledge-based peer-production communities and increasing the accessibility to existing ones.

Full article

(This article belongs to the Section Human-Computer Interaction)

►▼

Show Figures

Figure 1

Open AccessArticle

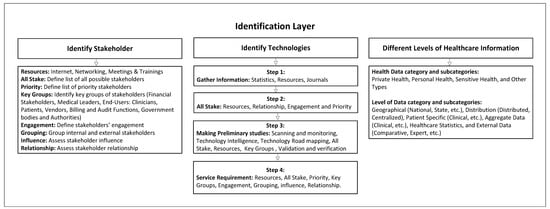

Towards a Universal Privacy Model for Electronic Health Record Systems: An Ontology and Machine Learning Approach

Informatics 2023, 10(3), 60; https://doi.org/10.3390/informatics10030060 - 11 Jul 2023

This paper proposed a novel privacy model for Electronic Health Records (EHR) systems utilizing a conceptual privacy ontology and Machine Learning (ML) methodologies. It underscores the challenges currently faced by EHR systems such as balancing privacy and accessibility, user-friendliness, and legal compliance. To address these challenges, the study developed a universal privacy model designed to efficiently manage and share patients’ personal and sensitive data across different platforms, such as MHR and NHS systems. The research employed various BERT techniques to differentiate between legitimate and illegitimate privacy policies. Among them, Distil BERT emerged as the most accurate, demonstrating the potential of our ML-based approach to effectively identify inadequate privacy policies. This paper outlines future research directions, emphasizing the need for comprehensive evaluations, testing in real-world case studies, the investigation of adaptive frameworks, ethical implications, and fostering stakeholder collaboration. This research offers a pioneering approach towards enhancing healthcare information privacy, providing an innovative foundation for future work in this field.

Full article

Figure 1

Open AccessArticle

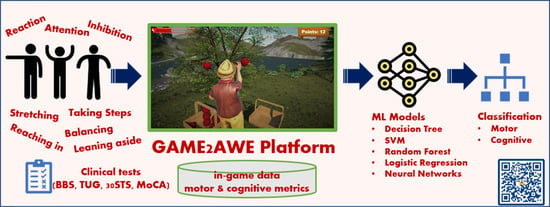

A Machine-Learning-Based Motor and Cognitive Assessment Tool Using In-Game Data from the GAME2AWE Platform

Informatics 2023, 10(3), 59; https://doi.org/10.3390/informatics10030059 - 09 Jul 2023

With age, a decline in motor and cognitive functionality is inevitable, and it greatly affects the quality of life of the elderly and their ability to live independently. Early detection of these types of decline can enable timely interventions and support for maintaining functional independence and improving overall well-being. This paper explores the potential of the GAME2AWE platform in assessing the motor and cognitive condition of seniors based on their in-game performance data. The proposed methodology involves developing machine learning models to explore the predictive power of features that are derived from the data collected during gameplay on the GAME2AWE platform. Through a study involving fifteen elderly participants, we demonstrate that utilizing in-game data can achieve a high classification performance when predicting the motor and cognitive states. Various machine learning techniques were used but Random Forest outperformed the other models, achieving a classification accuracy ranging from 93.6% for cognitive screening to 95.6% for motor assessment. These results highlight the potential of using exergames within a technology-rich environment as an effective means of capturing the health status of seniors. This approach opens up new possibilities for objective and non-invasive health assessment, facilitating early detections and interventions to improve the well-being of seniors.

Full article

(This article belongs to the Special Issue Feature Papers in Medical and Clinical Informatics)

►▼

Show Figures

Graphical abstract

Open AccessArticle

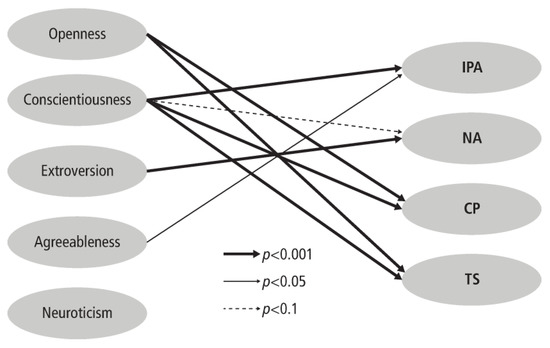

Digital Citizenship and the Big Five Personality Traits

Informatics 2023, 10(3), 58; https://doi.org/10.3390/informatics10030058 - 07 Jul 2023

Over the past two decades, the internet has become an increasingly important venue for political expression, community building, and social activism. Scholars in a wide range of disciplines have endeavored to understand and measure how these transformations have affected individuals’ civic attitudes and behaviors. The Digital Citizenship Scale (original and revised form) has become one of the most widely used instruments for measuring and evaluating these changes, but to date, no study has investigated how digital citizenship behaviors relate to exogenous variables. Using the classic Big Five Factor model of personality (Openness to experience, Conscientiousness, Extroversion, Agreeableness, and Neuroticism), this study investigated how personality traits relate to the key components of digital citizenship. Survey results were gathered across three countries (n = 1820), and analysis revealed that personality traits map uniquely on to digital citizenship in comparison to traditional forms of civic engagement. The implications of these findings are discussed.

Full article

(This article belongs to the Section Human-Computer Interaction)

►▼

Show Figures

Figure 1

Open AccessArticle

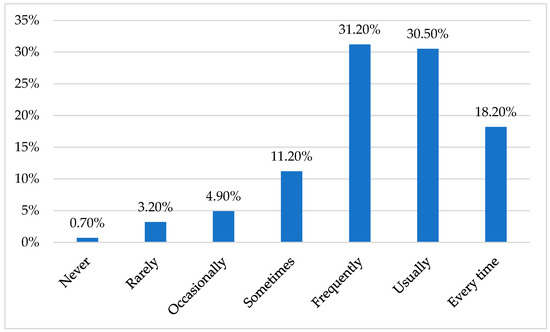

Information and Communication Technologies in Primary Education: Teachers’ Perceptions in Greece

Informatics 2023, 10(3), 57; https://doi.org/10.3390/informatics10030057 - 07 Jul 2023

Innovative learning methods including the increasing use of Information and Communication Technologies (ICT) applications are transforming the contemporary educational process. Teachers’ perceptions of ICT, self-efficacy on computers and demographics are some of the factors that have been found to impact the use of ICT in the educational process. The aim of the present research is to analyze the perceptions of primary school teachers about ICT and how they affect their use in the educational process, through the case of Greece. To do so, primary research was carried out. Data from 285 valid questionnaires were statistically analyzed using descriptive statistics, principal components analysis, correlation and regression analysis. The main results were in accordance with the relevant literature, indicating the impact of teachers’ self-efficacy, perceptions and demographics on ICT use in the educational process. These results provide useful insights for the achievement of a successful implementation of ICT in education.

Full article

(This article belongs to the Section Human-Computer Interaction)

►▼

Show Figures

Figure 1

Open AccessArticle

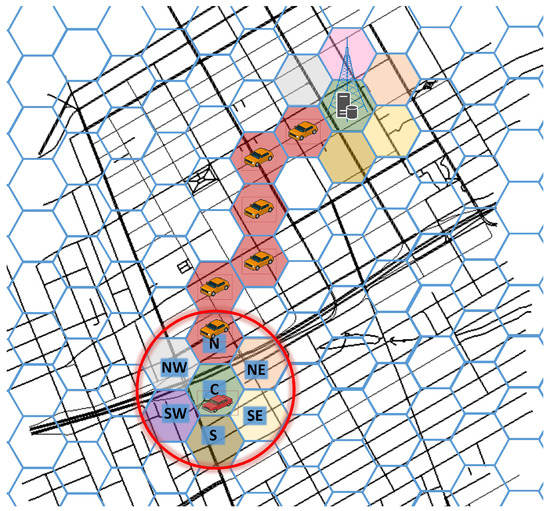

FOXS-GSC—Fast Offset Xpath Service with HexagonS Communication

Informatics 2023, 10(3), 56; https://doi.org/10.3390/informatics10030056 - 04 Jul 2023

Abstract

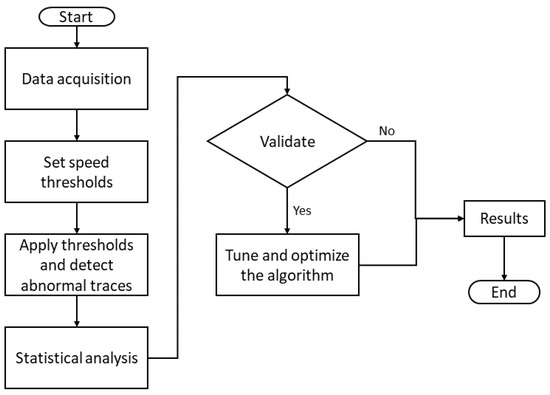

Congestion in large cities is widely recognized as a problem that impacts various aspects of society, including the economy and public health. To support the urban traffic system and to mitigate traffic congestion and the damage it causes, in this article we propose

[...] Read more.

Congestion in large cities is widely recognized as a problem that impacts various aspects of society, including the economy and public health. To support the urban traffic system and to mitigate traffic congestion and the damage it causes, in this article we propose an assistant Intelligent Transport Systems (ITS) service for traffic management in Vehicular Networks (VANET), which we name FOXS-GSC, for Fast Offset Xpath Service with hexaGonS Communication. FOXS-GSC uses a VANET communication and fog computing paradigm to detect and recommend an alternative vehicle route to avoid traffic jams. Unlike the previous solutions in the literature, the proposed service offers a versatile approach in which traffic road classification and route suggestions can be made by infrastructure or by the vehicle itself without compromising the quality of the route service. To achieve this, the service operates in a decentralized way, and the components of the service (vehicles/infrastructure) exchange messages containing vehicle information and regional traffic information. For communication, the proposed approach uses a new dedicated multi-hop protocol that has been specifically designed based on the characteristics and requirements of a vehicle routing service. Therefore, by adapting to the inherent characteristics of a vehicle routing service, such as the density of regions, the proposed communication protocol both enhances reliability and improves the overall efficiency of the vehicle routing service. Simulation results comparing FOXS-GSC with baseline solutions and other proposals from the literature demonstrate its significant impact, reducing network congestion by up to 95% while maintaining a coverage of 97% across various scenery characteristics. Concerning road traffic efficiency, the traffic quality is increasing by 29%, for a reduction in carbon emissions of 10%.

(This article belongs to the Special Issue The Smart Cities Continuum via Machine Learning and Artificial Intelligence)

Open Access Article

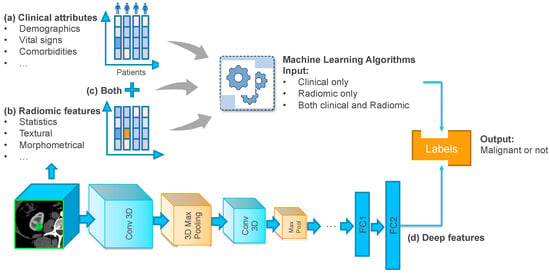

Classification of Benign and Malignant Renal Tumors Based on CT Scans and Clinical Data Using Machine Learning Methods

Informatics 2023, 10(3), 55; https://doi.org/10.3390/informatics10030055 - 03 Jul 2023

Abstract

Up to 20% of renal masses ≤4 cm are found to be benign at the time of surgical excision, raising concern for overtreatment. However, the risk of malignancy cannot currently be predicted accurately prior to surgery using imaging alone. The objective of this study is to propose a machine learning (ML) framework for pre-operative renal tumor classification using readily available clinical and CT imaging data. We tested both traditional ML methods (i.e., XGBoost, random forest (RF)) and deep learning (DL) methods (i.e., multilayer perceptron (MLP), 3D convolutional neural network (3DCNN)) to build the classification model. We found that the combination of clinical and radiomics features produced the best results (i.e., an AUC [95% CI] of 0.719 [0.712–0.726], a precision [95% CI] of 0.976 [0.975–0.978], a recall [95% CI] of 0.683 [0.675–0.691], and a specificity [95% CI] of 0.827 [0.817–0.837]). Our analysis revealed that employing ML models with CT scans and clinical data holds promise for classifying the risk of renal malignancy. Future work should focus on externally validating the proposed model and features to better support clinical decision-making in renal cancer diagnosis.

(This article belongs to the Special Issue Feature Papers in Medical and Clinical Informatics)

Open Access Article

Risk-Based Approach for Selecting Company Key Performance Indicator in an Example of Financial Services

Informatics 2023, 10(2), 54; https://doi.org/10.3390/informatics10020054 - 19 Jun 2023

Abstract

Risk management is a highly important issue for Fintech companies; moreover, it is very specific and places serious demands on the top management of any financial institution. This study specifies the risk factors affecting the finance and capital adequacy of financial institutions. The authors considered the different types of risks in combination, whereas other scholars usually analyze risks in isolation; the authors believe that it is necessary to consider their mutual impact. The risks were estimated using the PLS-SEM method in SmartPLS 4 software. The quality of the obtained model is very high according to all indicators. Five hypotheses related to finance and five hypotheses related to capital adequacy were considered. The impact of AML, cyber, and governance risks on capital adequacy was confirmed; the effect of governance and operational risks on finance was also confirmed. Other risks have no impact on finance and capital adequacy. Notably, risks associated with staff have no impact on finance or capital adequacy. The findings of this study can be easily applied by any financial institution for risk analysis. Moreover, this study can foster better collaboration between scholars investigating Fintech activities and practitioners working in this sphere. The authors present a novel approach for enhancing key performance indicators (KPIs) for Fintech companies: they propose metrics derived from the company’s specific risks, so that KPIs are selected based on the inherent risks associated with the Fintech’s business model. This model aligns the KPIs with the unique risk profile of the company, fostering a fresh perspective on performance measurement within the Fintech industry.

Open Access Article

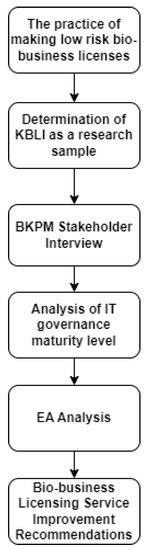

The Smart Governance Framework and Enterprise System’s Capability for Improving Bio-Business Licensing Services

Informatics 2023, 10(2), 53; https://doi.org/10.3390/informatics10020053 - 16 Jun 2023

Abstract

One way to improve Indonesia’s ranking in terms of ease of conducting business is by taking a closer look at the business licensing process. This study aims to carry out an assessment using a smart governance framework and recommendation capabilities from the Enterprise System (ES). As a result, prioritized recommendations for improvement are generated. The research proceeds in stages: observing the process of issuing bio-business permits, conducting interviews related to several Enterprise Architecture (EA) capabilities, and providing recommendations based on the assessed maturity level of IT governance. These recommendations are then mapped into an impact-effort matrix for program prioritization. The recommendations for bio-business licenses can also be used to improve the process for other business licenses. Implementation of the EA framework has been proven to align technology, organization, and processes so that it can support continuous improvement processes.

Open Access Article

Detection of Abnormal Patterns in Children’s Handwriting by Using an Artificial-Intelligence-Based Method

Informatics 2023, 10(2), 52; https://doi.org/10.3390/informatics10020052 - 14 Jun 2023

Abstract

Using camera-based algorithms to detect abnormal patterns in children’s handwriting has become a promising tool in education and occupational therapy. This study analyzes the performance of a camera- and tablet-based handwriting verification algorithm in detecting abnormal patterns in handwriting samples from 71 students of different grades. The results revealed that the algorithm detected abnormal patterns in 20% of the handwriting samples processed, which included practices such as delayed typing speed, excessive pen pressure, irregular slant, and lack of word spacing. In addition, the detection accuracy of the algorithm was 95% when comparing the camera data with the abnormal patterns detected, which indicates high reliability in the results obtained. The highlight of the study was the feedback provided to children and teachers on the camera data and any abnormal patterns detected. This can significantly improve students’ awareness of their writing and their writing skills by providing real-time feedback and allowing them to adjust and correct the detected abnormal patterns.

(This article belongs to the Special Issue Digital Humanities and Visualization)

Topics

Topic in

Applied Sciences, Electronics, Informatics, Information, Software

Software Engineering and Applications

Topic Editors: Sanjay Misra, Robertas Damaševičius, Bharti Suri

Deadline: 31 October 2023

Topic in

Brain Sciences, Healthcare, Informatics, IJERPH

Applications of Virtual Reality Technology in Rehabilitation

Topic Editors: Jorge Oliveira, Pedro Gamito

Deadline: 31 December 2023

Topic in

Electronics, Applied Sciences, BDCC, Mathematics, Informatics

Theory and Applications of High Performance Computing

Topic Editors: Pavel Lyakhov, Maxim Deryabin

Deadline: 29 February 2024

Topic in

AI, Algorithms, BDCC, Future Internet, Informatics, Information, Languages, Publications

AI Chatbots: Threat or Opportunity?

Topic Editors: Antony Bryant, Roberto Montemanni, Min Chen, Paolo Bellavista, Kenji Suzuki, Jeanine Treffers-Daller

Deadline: 30 April 2024

Special Issues

Special Issue in

Informatics

ICT for Genealogical Data

Guest Editors: Andrea Marchetti, Angelica Lo Duca

Deadline: 31 October 2023

Special Issue in

Informatics

Software Engineering Practices, Challenges and Trends

Guest Editors: Fernando Reinaldo Ribeiro, José Metrôlho, Javier Berrocal, Luis L. Fernández-Sanz

Deadline: 30 November 2023

Special Issue in

Informatics

Information Technology for Agri-Food

Guest Editors: Remo Pareschi, Karl Presser, Claudia Zoani

Deadline: 15 December 2023

Special Issue in

Informatics

Feature Papers in Big Data

Guest Editor: Weitian Tong

Deadline: 31 December 2023

Topical Collections

Topical Collection in

Informatics

Promotion of Computational Thinking and Informatics Education in Pre-University Studies

Collection Editor: Francisco José García-Peñalvo

Topical Collection in

Informatics

Uncertainty in Digital Humanities

Collection Editors: Roberto Theron, Eveline Wandl-Vogt, Jennifer Cizik Edmond, Cezary Mazurek