Journal Description

Journal of Imaging is an international, multi/interdisciplinary, peer-reviewed, open access journal of imaging techniques published online monthly by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), PubMed, PMC, dblp, Inspec, Ei Compendex, and other databases.

- Journal Rank: CiteScore - Q2 (Computer Graphics and Computer-Aided Design)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 21.9 days after submission; acceptance to publication is undertaken in 3.9 days (median values for papers published in this journal in the first half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 3.2 (2022); 5-Year Impact Factor: 3.2 (2022)

Latest Articles

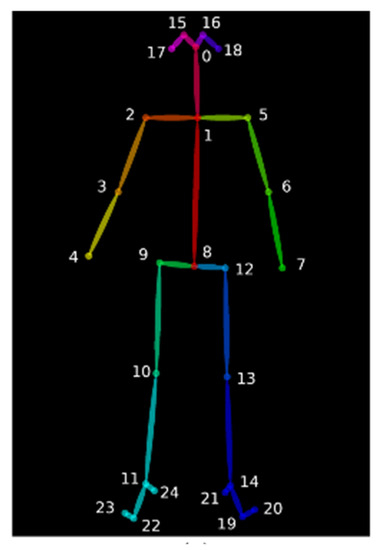

Intelligent Performance Evaluation in Rowing Sport Using a Graph-Matching Network

J. Imaging 2023, 9(9), 181; https://doi.org/10.3390/jimaging9090181 - 31 Aug 2023

Abstract


Rowing competitions require consistent rowing strokes among crew members to achieve optimal performance. However, existing motion analysis techniques often rely on wearable sensors, which are inconvenient for athletes. The aim of our work is to use a graph-matching network to analyze the similarity in rowers’ rowing posture and further pair rowers to improve the performance of their rowing team. This study proposed a novel video-based performance analysis system to analyze paired rowers using a graph-matching network. The proposed system first detected human joint points, as acquired from the OpenPose system, and then the graph embedding model and graph-matching network model were applied to analyze similarities in rowing postures between paired rowers. When analyzing the postures of the paired rowers, the proposed system detected the same starting point of their rowing postures to achieve more accurate pairing results. Finally, variations in the similarities were displayed using the proposed time-period similarity processing. The experimental results show that the proposed time-period similarity processing of the 2D graph-embedding model (GEM) had the best pairing results.


Open Access Article

Automatic 3D Postoperative Evaluation of Complex Orthopaedic Interventions


J. Imaging 2023, 9(9), 180; https://doi.org/10.3390/jimaging9090180 - 31 Aug 2023

Abstract


In clinical practice, image-based postoperative evaluation is still performed without state-of-the-art computer methods, as these are not sufficiently automated. In this study, we propose a fully automatic 3D postoperative outcome quantification method for the relevant steps of orthopaedic interventions, using the example of Periacetabular Osteotomy of Ganz (PAO). A typical orthopaedic intervention involves cutting bone, anatomy manipulation and repositioning as well as implant placement. Our method includes a segmentation-based deep learning approach for detection and quantification of the cuts. Furthermore, anatomy repositioning was quantified through a multi-step registration method, which entailed a coarse alignment of the pre- and postoperative CT images followed by a fine fragment alignment of the repositioned anatomy. Implant (i.e., screw) position was identified by 3D Hough transform for line detection combined with fast voxel traversal based on ray tracing. The feasibility of our approach was investigated on 27 interventions and compared against manually performed 3D outcome evaluations. The results show that our method can accurately assess the quality and accuracy of the surgery. Our evaluation of the fragment repositioning showed a cumulative error for the coarse and fine alignment of 2.1 mm. Our evaluation of screw placement accuracy resulted in a distance error of 1.32 mm for screw head location and an angular deviation of 1.1° for screw axis. As a next step, we will explore generalisation capabilities by applying the method to different interventions.

(This article belongs to the Section Medical Imaging)

Open AccessArticle

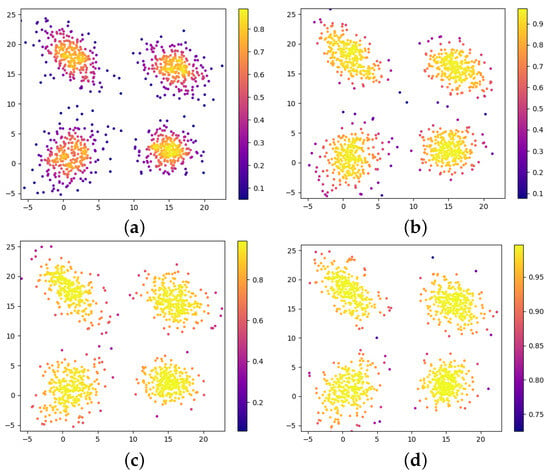

Data-Weighted Multivariate Generalized Gaussian Mixture Model: Application to Point Cloud Robust Registration

J. Imaging 2023, 9(9), 179; https://doi.org/10.3390/jimaging9090179 - 31 Aug 2023

Abstract


In this paper, a weighted multivariate generalized Gaussian mixture model combined with stochastic optimization is proposed for point cloud registration. The mixture model parameters of the target scene and the scene to be registered are updated iteratively by the fixed point method under the framework of the EM algorithm, and the number of components is determined based on the minimum message length criterion (MML). The KL divergence between these two mixture models is utilized as the loss function for stochastic optimization to find the optimal parameters of the transformation model. The self-built point clouds are used to evaluate the performance of the proposed algorithm on rigid registration. Experiments demonstrate that the algorithm dramatically reduces the impact of noise and outliers and effectively extracts the key features of the data-intensive regions.

(This article belongs to the Special Issue Feature Papers in Section AI in Imaging)

Open Access Article

2-[18F]FDG-PET/CT in Cancer of Unknown Primary Tumor—A Retrospective Register-Based Cohort Study


J. Imaging 2023, 9(9), 178; https://doi.org/10.3390/jimaging9090178 (registering DOI) - 31 Aug 2023

Abstract


We investigated the impact of 2-[18F]FDG-PET/CT on detection rate (DR) of the primary tumor and survival in patients with suspected cancer of unknown primary tumor (CUP), comparing it to the conventional diagnostic imaging method, CT. Patients who received a tentative CUP diagnosis at Odense University Hospital from 2014–2017 were included. Patients receiving a 2-[18F]FDG-PET/CT were assigned to the 2-[18F]FDG-PET/CT group and patients receiving a CT only to the CT group. DR was calculated as the proportion of true positive findings of 2-[18F]FDG-PET/CT and CT scans, separately, using biopsy of the primary tumor, autopsy, or clinical decision as reference standard. Survival analyses included Kaplan–Meier estimates and Cox proportional hazards regression adjusted for age, sex, treatment, and propensity score. We included 193 patients. Of these, 159 were in the 2-[18F]FDG-PET/CT group and 34 were in the CT group. DR was 36.5% in the 2-[18F]FDG-PET/CT group and 17.6% in the CT group, respectively (p = 0.012). Median survival was 7.4 (95% CI 0.4–98.7) months in the 2-[18F]FDG-PET/CT group and 3.8 (95% CI 0.2–98.1) in the CT group. Survival analysis showed a crude hazard ratio of 0.63 (p = 0.024) and an adjusted hazard ratio of 0.68 (p = 0.087) for the 2-[18F]FDG-PET/CT group compared with CT. This study found a significantly higher DR of the primary tumor in suspected CUP patients using 2-[18F]FDG-PET/CT compared with patients receiving only CT, with possible immense clinical importance. No significant difference in survival was found, although a possible tendency towards longer survival in the 2-[18F]FDG-PET/CT group was observed.
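The detection rate described in this abstract is a simple proportion, and a short sketch may make the arithmetic concrete. The group sizes (159 and 34) and the reported rates come from the abstract; the true-positive counts of 58 and 6 are not stated there and are back-calculated here as the only whole numbers consistent with the rounded percentages, so they should be read as an assumption. The function name `detection_rate` is hypothetical.

```python
def detection_rate(true_positives: int, patients: int) -> float:
    """Return the detection rate as the proportion of true positive findings."""
    if patients <= 0:
        raise ValueError("patients must be a positive integer")
    if not 0 <= true_positives <= patients:
        raise ValueError("true_positives must lie between 0 and patients")
    return true_positives / patients


# Group sizes are taken from the abstract; the true-positive counts are
# back-calculated assumptions, not values reported by the authors.
pet_ct_rate = detection_rate(true_positives=58, patients=159)
ct_rate = detection_rate(true_positives=6, patients=34)

print(f"2-[18F]FDG-PET/CT group: {pet_ct_rate:.1%}")
print(f"CT group: {ct_rate:.1%}")
```

Under these assumed counts, the two proportions round to the 36.5% and 17.6% reported in the abstract.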

(This article belongs to the Section Medical Imaging)

Open AccessArticle

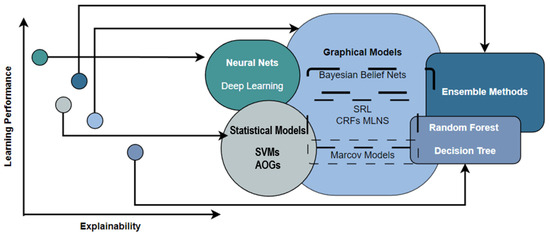

Explainable Artificial Intelligence (XAI) for Deep Learning Based Medical Imaging Classification

J. Imaging 2023, 9(9), 177; https://doi.org/10.3390/jimaging9090177 - 30 Aug 2023

Abstract


Recently, deep learning has gained significant attention as a noteworthy division of artificial intelligence (AI) due to its high accuracy and versatile applications. However, one of the major challenges of AI is the need for more interpretability, commonly referred to as the black-box problem. In this study, we introduce an explainable AI model for medical image classification to enhance the interpretability of the decision-making process. Our approach is based on segmenting the images to provide a better understanding of how the AI model arrives at its results. We evaluated our model on five datasets, including the COVID-19 and Pneumonia Chest X-ray dataset, Chest X-ray (COVID-19 and Pneumonia), COVID-19 Image Dataset (COVID-19, Viral Pneumonia, Normal), and COVID-19 Radiography Database. We achieved testing and validation accuracy of 90.6% on a relatively small dataset of 6432 images. Our proposed model improved accuracy and reduced time complexity, making it more practical for medical diagnosis. Our approach offers a more interpretable and transparent AI model that can enhance the accuracy and efficiency of medical diagnosis.

(This article belongs to the Special Issue Explainable AI for Image-Aided Diagnosis)

Open AccessArticle

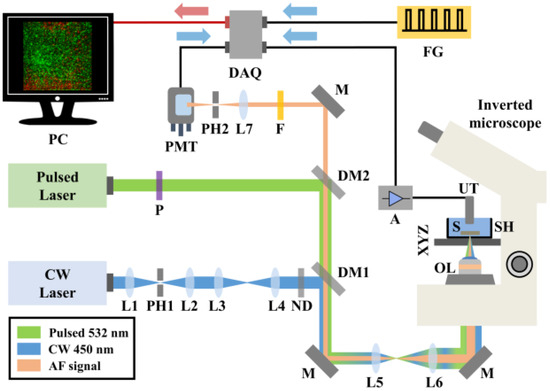

Hybrid Autofluorescence and Optoacoustic Microscopy for the Label-Free, Early and Rapid Detection of Pathogenic Infections in Vegetative Tissues


J. Imaging 2023, 9(9), 176; https://doi.org/10.3390/jimaging9090176 - 29 Aug 2023

Abstract


Agriculture plays a pivotal role in food security and food security is challenged by pests and pathogens. Due to these challenges, the yields and quality of agricultural production are reduced and, in response, restrictions in the trade of plant products are applied. Governments have collaborated to establish robust phytosanitary measures, promote disease surveillance, and invest in research and development to mitigate the impact on food security. Classic as well as modernized tools for disease diagnosis and pathogen surveillance do exist, but most of these are time-consuming, laborious, or are less sensitive. To that end, we propose the innovative application of a hybrid imaging approach through the combination of confocal fluorescence and optoacoustic imaging microscopy. This has allowed us to non-destructively detect the physiological changes that occur in plant tissues as a result of a pathogen-induced interaction well before visual symptoms occur. When broccoli leaves were artificially infected with Xanthomonas campestris pv. campestris (Xcc), eventually causing an economically important bacterial disease, the induced optical absorption alterations could be detected at very early stages of infection. Therefore, this innovative microscopy approach was positively utilized to detect the disease caused by a plant pathogen, showing that it can also be employed to detect quarantine pathogens such as Xylella fastidiosa.

(This article belongs to the Special Issue Fluorescence Imaging and Analysis of Cellular System)

Open Access Article

End-to-End Depth-Guided Relighting Using Lightweight Deep Learning-Based Method


J. Imaging 2023, 9(9), 175; https://doi.org/10.3390/jimaging9090175 - 28 Aug 2023

Abstract


Image relighting, which involves modifying the lighting conditions while preserving the visual content, is fundamental to computer vision. This study introduced a bi-modal lightweight deep learning model for depth-guided relighting. The model utilizes the Res2Net Squeezed block’s ability to capture long-range dependencies and to enhance feature representation for both the input image and its corresponding depth map. The proposed model adopts an encoder–decoder structure with Res2Net Squeezed blocks integrated at each stage of encoding and decoding. The model was trained and evaluated on the VIDIT dataset, which consists of 300 triplets of images. Each triplet contains the input image, its corresponding depth map, and the relit image under diverse lighting conditions, such as different illuminant angles and color temperatures. The enhanced feature representation and improved information flow within the Res2Net Squeezed blocks enable the model to handle complex lighting variations and generate realistic relit images. The experimental results demonstrated the proposed approach’s effectiveness in relighting accuracy, measured by metrics such as the PSNR, SSIM, and visual quality.

(This article belongs to the Section AI in Imaging)

Open Access Protocol

3D Ultrasound and MRI in Assessing Resection Margins during Tongue Cancer Surgery: A Research Protocol for a Clinical Diagnostic Accuracy Study


J. Imaging 2023, 9(9), 174; https://doi.org/10.3390/jimaging9090174 - 28 Aug 2023

Abstract


Surgery is the primary treatment for tongue cancer. The goal is a complete resection of the tumor with an adequate margin of healthy tissue around the tumor. Inadequate margins lead to a high risk of local cancer recurrence and the need for adjuvant therapies. Ex vivo imaging of the resected surgical specimen has been suggested for margin assessment and improved surgical results. Therefore, we have developed a novel three-dimensional (3D) ultrasound imaging technique to improve the assessment of resection margins during surgery. In this research protocol, we describe a study comparing the accuracy of 3D ultrasound, magnetic resonance imaging (MRI), and clinical examination of the surgical specimen to assess the resection margins during cancer surgery. Tumor segmentation and margin measurement will be performed using 3D ultrasound and MRI of the ex vivo specimen. We will determine the accuracy of each method by comparing the margin measurements and the proportion of correctly classified margins (positive, close, and free) obtained by each technique with respect to the gold standard histopathology.

(This article belongs to the Section Medical Imaging)

Open Access Article

A Framework for Detecting Thyroid Cancer from Ultrasound and Histopathological Images Using Deep Learning, Meta-Heuristics, and MCDM Algorithms


J. Imaging 2023, 9(9), 173; https://doi.org/10.3390/jimaging9090173 - 27 Aug 2023

Abstract

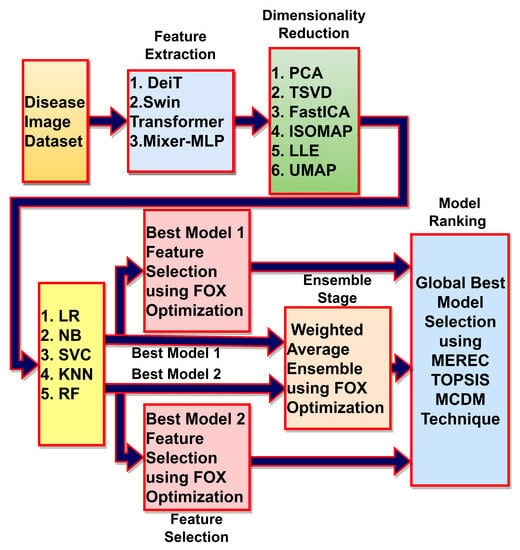

Computer-assisted diagnostic systems have been developed to aid doctors in diagnosing thyroid-related abnormalities. The aim of this research is to improve the diagnosis accuracy of thyroid abnormality detection models that can be utilized to alleviate undue pressure on healthcare professionals. In this research,

[...] Read more.

Computer-assisted diagnostic systems have been developed to aid doctors in diagnosing thyroid-related abnormalities. The aim of this research is to improve the diagnosis accuracy of thyroid abnormality detection models that can be utilized to alleviate undue pressure on healthcare professionals. In this research, we proposed deep learning, metaheuristics, and a MCDM algorithms-based framework to detect thyroid-related abnormalities from ultrasound and histopathological images. The proposed method uses three recently developed deep learning techniques (DeiT, Swin Transformer, and Mixer-MLP) to extract features from the thyroid image datasets. The feature extraction techniques are based on the Image Transformer and MLP models. There is a large number of redundant features that can overfit the classifiers and reduce the generalization capabilities of the classifiers. In order to avoid the overfitting problem, six feature transformation techniques (PCA, TSVD, FastICA, ISOMAP, LLE, and UMP) are analyzed to reduce the dimensionality of the data. There are five different classifiers (LR, NB, SVC, KNN, and RF) evaluated using the 5-fold stratified cross-validation technique on the transformed dataset. Both datasets exhibit large class imbalances and hence, the stratified cross-validation technique is used to evaluate the performance. The MEREC-TOPSIS MCDM technique is used for ranking the evaluated models at different analysis stages. In the first stage, the best feature extraction and classification techniques are chosen, whereas, in the second stage, the best dimensionality reduction method is evaluated in wrapper feature selection mode. Two best-ranked models are further selected for the weighted average ensemble learning and features selection using the recently proposed meta-heuristics FOX-optimization algorithm. 
The PCA+FOX optimization-based feature selection + random forest model achieved the highest TOPSIS score and performed exceptionally well with an accuracy of 99.13%, F2-score of 98.82%, and AUC-ROC score of 99.13% on the ultrasound dataset. Similarly, the model achieved an accuracy score of 90.65%, an F2-score of 92.01%, and an AUC-ROC score of 95.48% on the histopathological dataset. This study exploits the combination novelty of different algorithms in order to improve the thyroid cancer diagnosis capabilities. This proposed framework outperforms the current state-of-the-art diagnostic methods for thyroid-related abnormalities in ultrasound and histopathological datasets and can significantly aid medical professionals by reducing the excessive burden on the medical fraternity.

(This article belongs to the Special Issue Internet-of-Medical-Things-Streamed Medical-Image-Based Recommendation and Optimization Techniques Using Federated Learning)

Open Access Article

PP-JPEG: A Privacy-Preserving JPEG Image-Tampering Localization

J. Imaging 2023, 9(9), 172; https://doi.org/10.3390/jimaging9090172 - 27 Aug 2023

Abstract


The widespread availability of digital image-processing software has given rise to various forms of image manipulation and forgery, which can pose a significant challenge in different fields, such as law enforcement, journalism, etc. It can also lead to privacy concerns. We are proposing that a privacy-preserving framework to encrypt images before processing them is vital to maintain the privacy and confidentiality of sensitive images, especially those used for the purpose of investigation. To address these challenges, we propose a novel solution that detects image forgeries while preserving the privacy of the images. Our method proposes a privacy-preserving framework that encrypts the images before processing them, making it difficult for unauthorized individuals to access them. The proposed method utilizes a compression quality analysis in the encrypted domain to detect the presence of forgeries in images by determining if the forged portion (dummy image) has a compression quality different from that of the original image (featured image) in the encrypted domain. This approach effectively localizes the tampered portions of the image, even for small pixel blocks of size

(This article belongs to the Topic Computer Vision and Image Processing)


Open Access Article

Improving Medical Imaging with Medical Variation Diffusion Model: An Analysis and Evaluation

J. Imaging 2023, 9(9), 171; https://doi.org/10.3390/jimaging9090171 - 25 Aug 2023

Abstract


The Medical VDM is an approach for generating medical images that employs variational diffusion models (VDMs) to smooth images while preserving essential features, including edges. The primary goal of the Medical VDM is to enhance the accuracy and reliability of medical image generation. In this paper, we present a comprehensive description of the Medical VDM approach and its mathematical foundation, as well as experimental findings that showcase its efficacy in generating high-quality medical images that accurately reflect the underlying anatomy and physiology. Our results reveal that the Medical VDM surpasses current VDM methods in terms of generating faithful medical images, with a reconstruction loss of 0.869, a diffusion loss of 0.0008, and a latent loss of

(This article belongs to the Section Medical Imaging)


Open Access Project Report

Bayesian Reconstruction Algorithms for Low-Dose Computed Tomography Are Not Yet Suitable in Clinical Context


J. Imaging 2023, 9(9), 170; https://doi.org/10.3390/jimaging9090170 - 23 Aug 2023

Abstract


Computed tomography (CT) is a widely used examination technique that usually requires a compromise between image quality and radiation exposure. Reconstruction algorithms aim to reduce radiation exposure while maintaining comparable image quality. Recently, unsupervised deep learning methods have been proposed for this purpose. In this study, a promising sparse-view reconstruction method (posterior temperature optimized Bayesian inverse model; POTOBIM) is tested for its clinical applicability. For this study, 17 whole-body CTs of deceased were performed. In addition to POTOBIM, reconstruction was performed using filtered back projection (FBP). An evaluation was conducted by simulating sinograms and comparing the reconstruction with the original CT slice for each case. A quantitative analysis was performed using peak signal-to-noise ratio (PSNR) and structural similarity index measure (SSIM). The quality was assessed visually using a modified Ludewig’s scale. In the qualitative evaluation, POTOBIM was rated worse than the reference images in most cases. A partially equivalent image quality could only be achieved with 80 projections per rotation. Quantitatively, POTOBIM does not seem to benefit from more than 60 projections. Although deep learning methods seem suitable to produce better image quality, the investigated algorithm (POTOBIM) is not yet suitable for clinical routine.
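The quantitative comparison mentioned in this abstract relies on the peak signal-to-noise ratio. As a minimal sketch of the standard PSNR definition (10·log10(MAX²/MSE)), and not the evaluation code used in the study, the following assumes images stored as nested lists of intensities with a peak value of 255; the function name `psnr` and the `max_value` parameter are hypothetical choices for illustration.

```python
import math


def psnr(reference, reconstruction, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized 2D images.

    Images are nested lists of numbers; max_value is the assumed peak intensity.
    """
    if len(reference) != len(reconstruction):
        raise ValueError("images must have the same number of rows")
    squared_error = 0.0
    pixel_count = 0
    for ref_row, rec_row in zip(reference, reconstruction):
        if len(ref_row) != len(rec_row):
            raise ValueError("image rows must have the same length")
        for ref_pixel, rec_pixel in zip(ref_row, rec_row):
            squared_error += (ref_pixel - rec_pixel) ** 2
            pixel_count += 1
    if pixel_count == 0:
        raise ValueError("images must not be empty")
    mse = squared_error / pixel_count
    if mse == 0:
        return math.inf  # identical images: the ratio is unbounded
    return 10.0 * math.log10(max_value ** 2 / mse)


# Toy 2x2 example (made-up intensities): one pixel differs by 10.
original_slice = [[100, 100], [100, 100]]
reconstructed_slice = [[110, 100], [100, 100]]
print(f"PSNR: {psnr(original_slice, reconstructed_slice):.2f} dB")
```

A higher PSNR indicates a reconstruction closer to the reference slice; SSIM, the second metric named in the abstract, additionally compares local structure and is not covered by this sketch.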

(This article belongs to the Section Medical Imaging)

Open Access Article

An Innovative Faster R-CNN-Based Framework for Breast Cancer Detection in MRI


J. Imaging 2023, 9(9), 169; https://doi.org/10.3390/jimaging9090169 - 23 Aug 2023

Abstract

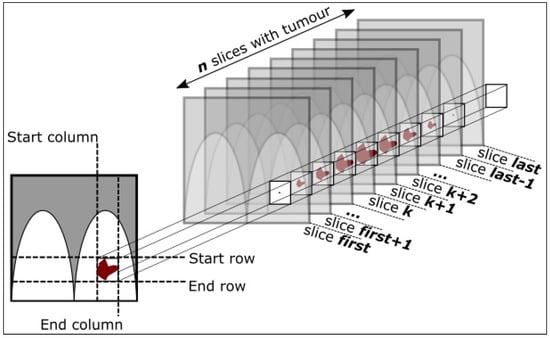

Replacing lung cancer as the most commonly diagnosed cancer globally, breast cancer (BC) today accounts for 1 in 8 cancer diagnoses and a total of 2.3 million new cases in both sexes combined. An estimated 685,000 women died from BC in 2020, corresponding

[...] Read more.

Replacing lung cancer as the most commonly diagnosed cancer globally, breast cancer (BC) today accounts for 1 in 8 cancer diagnoses and a total of 2.3 million new cases in both sexes combined. An estimated 685,000 women died from BC in 2020, corresponding to 16% or 1 in every 6 cancer deaths in women. BC represents a quarter of a total of cancer cases in females and by far the most commonly diagnosed cancer in women in 2020. However, when detected in the early stages of the disease, treatment methods have proven to be very effective in increasing life expectancy and, in many cases, patients fully recover. Several medical imaging modalities, such as X-rays Mammography (MG), Ultrasound (US), Computer Tomography (CT), Magnetic Resonance Imaging (MRI), and Digital Tomosynthesis (DT) have been explored to support radiologists/physicians in clinical decision-making workflows for the detection and diagnosis of BC. In this work, we propose a novel Faster R-CNN-based framework to automate the detection of BC pathological Lesions in MRI. As a main contribution, we have developed and experimentally (statistically) validated an innovative method improving the “breast MRI preprocessing phase” to select the patient’s slices (images) and associated bounding boxes representing pathological lesions. In this way, it is possible to create a more robust training (benchmarking) dataset to feed Deep Learning (DL) models, reducing the computation time and the dimension of the dataset, and more importantly, to identify with high accuracy the specific regions (bounding boxes) for each of the patient’s images, in which a possible pathological lesion (tumor) has been identified. 
As a result, in an experimental setting using a fully annotated dataset (released to the public domain) comprising a total of 922 MRI-based BC patient cases, we have achieved, as the most accurate trained model, an accuracy rate of 97.83%, and subsequently, applying a ten-fold cross-validation method, a mean accuracy on the trained models of 94.46% and an associated standard deviation of 2.43%.

(This article belongs to the Section Medical Imaging)

Open Access Article

Multimodal Approach for Enhancing Biometric Authentication

J. Imaging 2023, 9(9), 168; https://doi.org/10.3390/jimaging9090168 - 22 Aug 2023

Abstract


Unimodal biometric systems rely on a single source or unique individual biological trait for measurement and examination. Fingerprint-based biometric systems are the most common, but they are vulnerable to presentation attacks or spoofing when a fake fingerprint is presented to the sensor. To address this issue, we propose an enhanced biometric system based on a multimodal approach using two types of biological traits. We propose to combine fingerprint and Electrocardiogram (ECG) signals to mitigate spoofing attacks. Specifically, we design a multimodal deep learning architecture that accepts fingerprints and ECG as inputs and fuses the feature vectors using stacking and channel-wise approaches. The feature extraction backbone of the architecture is based on data-efficient transformers. The experimental results demonstrate the promising capabilities of the proposed approach in enhancing the robustness of the system to presentation attacks.

(This article belongs to the Special Issue Multi-Biometric and Multi-Modal Authentication)

Open Access Article

Clinical Validation Benchmark Dataset and Expert Performance Baseline for Colorectal Polyp Localization Methods


J. Imaging 2023, 9(9), 167; https://doi.org/10.3390/jimaging9090167 - 22 Aug 2023

Abstract


Colorectal cancer is one of the leading causes of death worldwide, but, fortunately, early detection greatly increases survival rates, with the adenoma detection rate being one surrogate marker for colonoscopy quality. Artificial intelligence and deep learning methods have been applied with great success to improve polyp detection and localization and, therefore, the adenoma detection rate. In this regard, a comparison with clinical experts is required to prove the added value of the systems. Nevertheless, there is no standardized comparison in a laboratory setting before their clinical validation. The ClinExpPICCOLO dataset comprises 65 unedited endoscopic images that represent the clinical setting. They include white light imaging and narrow band imaging, with one third of the images containing a lesion; unlike in other public datasets, however, the lesion does not appear well-centered in the image. Together with the dataset, an expert clinical performance baseline has been established with the performance of 146 gastroenterologists, who were required to locate the lesions in the selected images. The results show statistically significant differences between experience groups. Expert gastroenterologists’ accuracy was 77.74, while sensitivity and specificity were 86.47 and 74.33, respectively. These values can be established as minimum values for a DL method before performing a clinical trial in the hospital setting.
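For readers comparing a detection method against a baseline of this kind, the three reported measures follow from the counts of a confusion matrix. The sketch below uses the standard definitions; the function name `diagnostic_metrics` and the example counts are hypothetical and are not taken from the ClinExpPICCOLO study.

```python
def diagnostic_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Compute accuracy, sensitivity, and specificity from confusion-matrix counts.

    tp/fn count images with a lesion (found/missed);
    tn/fp count images without a lesion (correctly cleared/falsely flagged).
    """
    total = tp + fp + tn + fn
    if total == 0 or (tp + fn) == 0 or (tn + fp) == 0:
        raise ValueError("need at least one positive and one negative case")
    return {
        "accuracy": (tp + tn) / total,
        "sensitivity": tp / (tp + fn),  # share of lesions that were found
        "specificity": tn / (tn + fp),  # share of lesion-free images cleared
    }


# Hypothetical counts for illustration only.
metrics = diagnostic_metrics(tp=45, fp=20, tn=80, fn=5)
for name, value in metrics.items():
    print(f"{name}: {value:.2%}")
```

Because only about one third of the images in the dataset contain a lesion, accuracy is pulled towards specificity, which is why the three values are best reported together.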

(This article belongs to the Section AI in Imaging)

Open Access Brief Report

Testicular Evaluation Using Shear Wave Elastography (SWE) in Patients with Varicocele


J. Imaging 2023, 9(9), 166; https://doi.org/10.3390/jimaging9090166 - 22 Aug 2023

Abstract


Purpose: To assess the possible influence of the presence of varicocele on the quantification of testicular stiffness. Methods: Ultrasound with shear wave elastography (SWE) was performed on 48 consecutive patients (96 testicles) referred following urology consultation for different reasons. A total of 94 testes were studied and distributed in three groups: testes with varicocele (group A, n = 19), contralateral normal testes (group B; n = 13) and control group (group C, n = 62). Age, testicular volume and testicular parenchymal tissue stiffness values of the three groups were compared using the Kruskal–Wallis test. Results: The mean age of the patients was 42.1 ± 11.1 years. The main reason for consultation was infertility (64.6%). The mean SWE value was 4 ± 0.4 kPa (kilopascal) in group A, 4 ± 0.5 kPa in group B and 4.2 ± 0.7 kPa in group C or control. The testicular volume was 15.8 ± 3.8 mL in group A, 16 ± 4.3 mL in group B and 16.4 ± 5.9 mL in group C. No statistically significant differences were found between the three groups in terms of age, testicular volume and tissue stiffness values. Conclusion: Tissue stiffness values were higher in our control group (healthy testicles) than in patients with varicocele.

(This article belongs to the Special Issue Statistical Biomedical Signal and Image Processing and Understanding: 2nd Edition)

Open Access Article

Content-Based Image Retrieval for Traditional Indonesian Woven Fabric Images Using a Modified Convolutional Neural Network Method

J. Imaging 2023, 9(8), 165; https://doi.org/10.3390/jimaging9080165 - 18 Aug 2023

Abstract

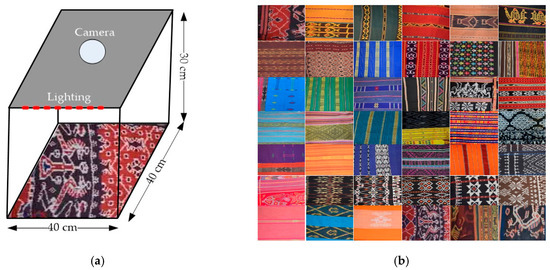

A content-based image retrieval system, as an Indonesian traditional woven fabric knowledge base, can be useful for artisans and trade promotions. However, creating an effective and efficient retrieval system is difficult due to the lack of an Indonesian traditional woven fabric dataset, and unique characteristics are not considered simultaneously. One type of traditional Indonesian fabric is ikat woven fabric. Thus, this study collected images of this traditional Indonesian woven fabric to create the TenunIkatNet dataset. The dataset consists of 120 classes and 4800 images. The images were captured perpendicularly, and the ikat woven fabrics were placed on different backgrounds, hung, and worn on the body, according to the utilization patterns. The feature extraction method using a modified convolutional neural network (MCNN) learns the unique features of Indonesian traditional woven fabrics. The experimental results show that the modified CNN model outperforms other pretrained CNN models (i.e., ResNet101, VGG16, DenseNet201, InceptionV3, MobileNetV2, Xception, and InceptionResNetV2) in top-5, top-10, top-20, and top-50 accuracies with scores of 99.96%, 99.88%, 99.50%, and 97.60%, respectively.

(This article belongs to the Special Issue Advances in Image Analysis: Shapes, Textures and Multifractals)

Open Access Article

Accuracy of Intra-Oral Radiography and Cone Beam Computed Tomography in the Diagnosis of Buccal Bone Loss

J. Imaging 2023, 9(8), 164; https://doi.org/10.3390/jimaging9080164 - 17 Aug 2023

Abstract

Background: The use of cone beam computed tomography (CBCT) in dentistry started in the maxillofacial field, where it was used for complex and comprehensive treatment planning. Due to the use of reduced radiation dose compared to a computed tomography (CT) scan, CBCT has become a frequently used diagnostic tool in dental practice. However, published data on the accuracy of CBCT in the diagnosis of buccal bone level are lacking. The aim of this study was to compare the accuracy of intra-oral radiography (IOR) and CBCT in the diagnosis of the extent of buccal bone loss. Methods: A dry skull was used to create a buccal bone defect at the most coronal level of a first premolar; the defect was enlarged apically in steps of 1 mm. After each step, IOR and CBCT were taken. Based on the CBCT data, two observers jointly selected three axial slices at different levels of the buccal bone, as well as one transverse slice. Six dentists participated in the radiographic observations. First, all observers received the 10 intra-oral radiographs, and each observer was asked to rank the intra-oral radiographs on the extent of the buccal bone defect. Afterwards, the procedure was repeated with the CBCT scans based on a combination of axial and transverse information. For the second part of the study, each observer was asked to evaluate the axial and transverse CBCT slices on the presence or absence of a buccal bone defect. Results: The percentage of buccal bone defect progression rankings that were within 1 of the true rank was 32% for IOR and 42% for CBCT. On average, kappa values increased by 0.384 for CBCT compared to intra-oral radiography. The overall sensitivity and specificity of CBCT in the diagnosis of the presence or absence of a buccal bone defect were 0.89 and 0.85, respectively. The average area under the receiver operating characteristic (ROC) curve (AUC) was 0.892 for all observers. Conclusion: When CBCT images are available for justified indications, other than bone level assessment, such 3D images are more accurate and thus preferred to 2D images to assess periodontal buccal bone. For other clinical applications, intra-oral radiography remains the standard method for radiographic evaluation.

(This article belongs to the Section Medical Imaging)

Open Access Article

MRI-Based Effective Ensemble Frameworks for Predicting Human Brain Tumor

J. Imaging 2023, 9(8), 163; https://doi.org/10.3390/jimaging9080163 - 16 Aug 2023

Abstract

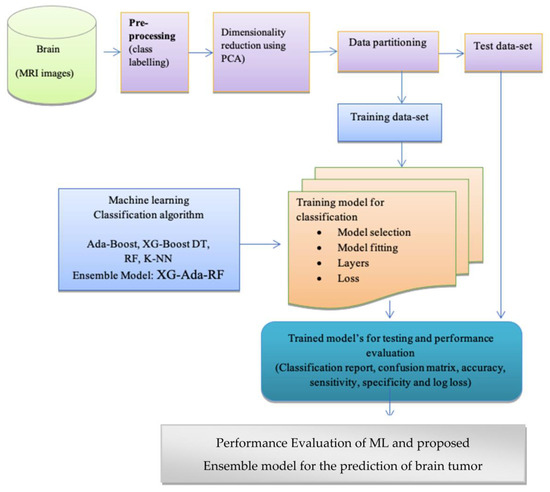

The diagnosis of brain tumors at an early stage is an exigent task for radiologists. Untreated patients rarely survive more than six months. It is a potential cause of mortality that can occur very quickly. Because of this, the early and effective diagnosis of brain tumors requires the use of an automated method. This study aims at the early detection of brain tumors using brain magnetic resonance imaging (MRI) data and efficient learning paradigms. In visual feature extraction, convolutional neural networks (CNNs) have achieved significant breakthroughs. The study involves feature extraction by deep convolutional layers for the efficient classification of brain tumor patients from the normal group. The deep convolutional neural network was implemented to extract features that represent the image more comprehensively for model training. Using deep convolutional features helps to increase the precision of tumor and non-tumor patient classifications. In this paper, we experimented with five machine learning (ML) models to heighten the understanding and enhance the scope and significance of brain tumor classification. Further, we proposed an ensemble of three high-performing individual ML models, namely Extreme Gradient Boosting, Ada-Boost, and Random Forest (XG-Ada-RF), to derive binary class classification output for detecting brain tumors in images. The proposed voting classifier, along with convolutional features, produced results that showed the highest accuracy of 95.9% for tumor and 94.9% for normal. The proposed ensemble approach outperformed the individual methods in accuracy.

(This article belongs to the Special Issue Multimodal Imaging for Radiotherapy: Latest Advances and Challenges)

Open Access Article

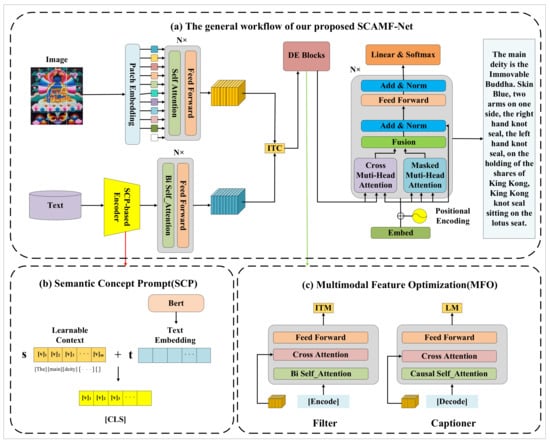

Thangka Image Captioning Based on Semantic Concept Prompt and Multimodal Feature Optimization

J. Imaging 2023, 9(8), 162; https://doi.org/10.3390/jimaging9080162 - 16 Aug 2023

Abstract

Thangka images exhibit a high level of diversity and richness, and the existing deep learning-based image captioning methods generate poor accuracy and richness of Chinese captions for Thangka images. To address this issue, this paper proposes a Semantic Concept Prompt and Multimodal Feature Optimization network (SCAMF-Net). The Semantic Concept Prompt (SCP) module is introduced in the text encoding stage to obtain more semantic information about the Thangka by introducing contextual prompts, thus enhancing the richness of the description content. The Multimodal Feature Optimization (MFO) module is proposed to optimize the correlation between Thangka images and text. This module enhances the correlation between the image features and text features of the Thangka through the Captioner and Filter to more accurately describe the visual concept features of the Thangka. The experimental results demonstrate that our proposed method outperforms baseline models on the Thangka dataset in terms of BLEU-4, METEOR, ROUGE, CIDEr, and SPICE by 8.7%, 7.9%, 8.2%, 76.6%, and 5.7%, respectively. Furthermore, this method also exhibits superior performance compared to the state-of-the-art methods on the public MSCOCO dataset.

(This article belongs to the Topic Computer Vision and Image Processing)

Topics

Topic in

Applied Sciences, Electronics, MAKE, J. Imaging, Sensors

Applied Computer Vision and Pattern Recognition: 2nd Volume

Topic Editors: Antonio Fernández-Caballero, Byung-Gyu Kim

Deadline: 30 September 2023

Topic in

Applied Sciences, Electronics, J. Imaging, Sensors, Signals

Visual Object Tracking: Challenges and Applications

Topic Editors: Shunli Zhang, Xin Yu, Kaihua Zhang, Yang Yang

Deadline: 31 October 2023

Topic in

Applied Sciences, Biosensors, J. Imaging, Sensors, Signals

Bio-Inspired Systems and Signal Processing

Topic Editors: Donald Y.C. Lie, Chung-Chih Hung, Jian Xu

Deadline: 30 November 2023

Topic in

Applied Sciences, Computers, Information, J. Imaging, Mathematics

Research on Deep Neural Networks for Electrocardiogram Classification and Automatic Diagnosis of Arrhythmia

Topic Editors: Vidya Sudarshan, Ru San Tan

Deadline: 31 December 2023

Special Issues

Special Issue in

J. Imaging

Advances in PET/CT Imaging for Diagnosis in Sarcoidosis

Guest Editor: Marco Tana

Deadline: 1 September 2023

Special Issue in

J. Imaging

Explainable AI for Image-Aided Diagnosis

Guest Editors: António Cunha, Paulo A.C. Salgado, Teresa Paula Perdicoúlis

Deadline: 30 September 2023

Special Issue in

J. Imaging

Brain Image Computation for Diagnosis and Treatment

Guest Editor: Jussi Tohka

Deadline: 15 October 2023

Special Issue in

J. Imaging

Modelling of Human Visual System in Image Processing

Guest Editors: Alexey Mashtakov, Edoardo Provenzi

Deadline: 22 October 2023